nemonik / Hands On Devops


1. Preface

This class is under near constant development. Its backlog is here: https://github.com/nemonik/hands-on-DevOps/projects/1

2. DevOps

A hands-on DevOps course covering the culture, methods and repeated practices of modern software development involving Vagrant, VirtualBox, Ansible, Kubernetes, K3s, MetalLB, Traefik, Docker-Compose, Docker, Taiga, GitLab, Drone CI, SonarQube, Selenium, InSpec, Alpine 3.10, Ubuntu-bionic, CentOS 7...

A reveal.js presentation written to accompany this course can be found at https://nemonik.github.io/hands-on-DevOps/.

This course will

- Discuss DevOps,

- Have you spin up a DevOps toolchain and development environment, and then

- Author two applications and their accompanying pipelines, the first a continuous integration (CI) and the second a continuous delivery (CD) pipeline.

After this course, you will

- Be able to describe, and have hands-on experience with, DevOps methods and repeated practices (e.g., use of Agile methods, configuration management, build automation, test automation and deployment automation orchestrated under a CICD orchestrator), and why they matter;

- Be able to address the challenges of transitioning to DevOps methods and repeated practices;

- Have had hands-on experience with Infrastructure as Code (Vagrant and Ansible) to provision and configure an entire DevOps Factory (i.e., a toolchain and development environment) on VirtualBox, including a Docker Registry, Taiga, GitLab, Drone CI, and SonarQube;

- Have had hands-on experience authoring code, including authoring and running automated tests in a CICD pipeline, all under configuration management, to ensure an application follows style, adheres to good coding practices, builds, is checked for security issues, and functions as expected;

- Have had hands-on experience with

  - using Infrastructure as Code (IaC) in Vagrant and Ansible;

  - creating and using a Kanban board in Taiga;

  - source code configuration management in git and GitLab;

  - authoring code in Go;

  - using style checkers and linters;

  - authoring a Makefile;

  - various commands in Docker (e.g., building a container image, pushing a container image into a registry, creating and running a container);

  - authoring a pipeline for Drone CI;

  - using Sonar Scanner CLI to perform static analysis;

  - authoring a security test in InSpec;

  - authoring an automated functional test in Selenium;

  - authoring a dynamic security test in OWASP ZAP; and

  - using a container platform to deploy and scale services.

We will be spending most of the course hands-on, working with the tools in the Unix command line to make the methods and repeated practices of DevOps happen, so as to grow an understanding of how DevOps actually works. Although not necessary, I encourage you to pick up a free PDF of The Linux Command Line by William Shotts if you are not familiar with the Linux command line.

Don't fixate on the tools used, nor on the apps we develop in the course of learning how and why. The how and why are far more important. This course, like DevOps, is not about tools, although we'll be using them. You'll spend far more time writing code. (Or at the very least cutting-and-pasting code.)

3. Author

- Michael Joseph Walsh [email protected], [email protected]

4. Copyright and license

See the License file at the root of this project.

5. Prerequisites

The following skills would be useful in following along but aren't strictly necessary.

What you should bring:

- Experience managing Linux or Unix-like systems would be tremendously helpful, but is not necessary, as we will be living largely within the terminal.

- A basic understanding of Vagrant, Docker, and Ansible would also be helpful, but not necessary.

6. Table of Contents

- 1. Preface

- 2. DevOps

- 3. Author

- 4. Copyright and license

- 5. Prerequisites

- 6. Table of Contents

- 7. DevOps unpacked

- 7.1. What is DevOps?

- 7.2. What DevOps is not

- 7.3. To succeed at DevOps you must

- 7.4. If your effort doesn't

- 7.5. Conway's Law states

- 7.6. DevOps is really about

- 7.7. What is DevOps culture?

- 7.8. How is DevOps related to Agile?

- 7.9. How do they differ?

- 7.10. Why?

- 7.11. What are the principles of DevOps?

- 7.12. Much of this is achieved

- 7.13. What is Continuous Integration (CI)?

- 7.14. How?

- 7.15. CI best practices

- 7.15.1. Utilize a Configuration Management System

- 7.15.2. Automate the build

- 7.15.3. Employ one or more CI services/orchestrators

- 7.15.4. Make builds self-testing

- 7.15.5. Never commit broken

- 7.15.6. Stakeholders are expected to pre-flight new code

- 7.15.7. The CI service/orchestrator provides feedback

- 7.16. What is Continuous Delivery?

- 7.17. But wait. What's a pipeline?

- 7.18. How is a pipeline manifested?

- 7.19. What underlies all of this?

- 7.20. But really why do we automate err. code?

- 7.21. Monitoring

- 7.22. Crawl, walk, run

- 8. Reading list

- 9. Now the hands-on part

- 9.1. Configuring environmental variables

- 9.2. VirtualBox

- 9.3. Git Bash

- 9.4. Retrieve the course material

- 9.5. Infrastructure as code (IaC)

- 9.5.1. HashiCorp Packer

- 9.5.2. Vagrant

- 9.5.2.1. Vagrant documentation and source

- 9.5.2.2. Installing Vagrant

- 9.5.2.3. The Vagrantfile explained

- 9.5.2.3.1. Modelines

- 9.5.2.3.2. Setting extra variables for Ansible roles

- 9.5.2.3.3. Automatically installing and removing the necessary Vagrant plugins

- 9.5.2.3.4. Inserting Proxy setting via host environmental variables

- 9.5.2.3.5. Inserting enterprise CA certificates

- 9.5.2.3.6. Auto-generate the Ansible inventory file

- 9.5.2.3.7. Mounting the project folder into each vagrant

- 9.5.2.3.8. Build a Vagrant Box

- 9.5.2.3.9. Configuring the Kubernetes cluster vagrant(s)

- 9.5.2.3.10. Provisioning and configuring the development vagrant

- 9.5.3. Ansible

- 9.6. The cloud-native technologies underlying the tools

- 9.7. The long-running tools

- 9.7.1. Taiga, an example of Agile project management software

- 9.7.2. GitLab CE, an example of configuration management software

- 9.7.3. Drone CI, an example of CICD orchestrator

- 9.7.4. SonarQube, an example of a platform for the inspection of code quality

- 9.7.5. PlantUML Server, an example of light-weight documentation

- 9.8. Golang helloworld project

- 9.8.1. Create the project's backlog

- 9.8.2. Create the project in GitLab

- 9.8.3. Setup the project on the development Vagrant

- 9.8.4. Author the application

- 9.8.5. Align source code with Go coding standards

- 9.8.6. Lint your code

- 9.8.7. Build the application

- 9.8.8. Run your application

- 9.8.9. Author the unit tests

- 9.8.10. Automate the build (i.e., write the Makefile)

- 9.8.11. Author Drone-based Continuous Integration

- 9.8.12. The completed source for helloworld

- 9.9. Golang helloworld-web project

- 9.9.1. Create the project's backlog

- 9.9.2. Create the project in GitLab

- 9.9.3. Setup the project on the development Vagrant

- 9.9.4. Author the application

- 9.9.5. Build and run the application

- 9.9.6. Run gometalinter.v2 on application

- 9.9.7. Fix the application

- 9.9.8. Author unit tests

- 9.9.9. Perform static analysis (i.e., sonar-scanner) on the command line

- 9.9.10. Automate the build (i.e., write the Makefile)

- 9.9.11. Dockerize the application

- 9.9.12. Run the Docker container

- 9.9.13. Push the container image to the private Docker registry

- 9.9.14. Configure Drone to execute your CICD pipeline

- 9.9.15. Add Static Analysis (SonarQube) step to pipeline

- 9.9.16. Add the build step to the pipeline

- 9.9.17. Add container image publish step to pipeline

- 9.9.18. Add container deploy step to pipeline

- 9.9.19. Add compliance and policy automation (InSpec) test to the pipeline

- 9.9.20. Add automated functional test to pipeline

- 9.9.21. Add DAST step (OWASP ZAP) to pipeline

- 9.9.22. All the source for helloworld-web

- 9.10. Additional best practices to consider around securing containerized applications

- 9.11. Microservices

- 9.12. Using what you've learned

- 9.13. Shoo away your vagrants

- 9.14. That's it

7. DevOps unpacked

7.1. What is DevOps?

DevOps (a clipped compound of the words development and operations) is a software development methodology with an emphasis on a reliable release pipeline, automation, and stronger collaboration across all stakeholders, with the goal of delivering value, in close alignment with business objectives, into the hands of users (i.e., production) more efficiently and effectively.

Ops in DevOps gathers up every IT operations stakeholder (i.e., cybersecurity, testing, DB admin, infrastructure and operations practitioners -- essentially, any stakeholder not commonly thought of as directly part of the development team in the system development life cycle).

Yeah, that's the formal definition.

In the opening sentences of Security Engineering: A Guide to Building Dependable Distributed Systems, Third Edition, author Ross Anderson defines security engineering:

Security engineering is about building systems to remain dependable in the face of malice, error, or mischance. As a discipline, it focuses on the tools, processes, and methods needed to design, implement, and test complete systems, and to adapt existing systems as their environment evolves.

The words security engineering could be replaced in the opening sentence with each one of the various stakeholders (e.g., development, quality assurance, technology operations).

The point I'm after is that everyone is in it to collectively deliver dependable software.

Also, there is no need to overload the DevOps term by wedging your pet interest(s), such as security, test, whatever, between Dev and Ops (i.e., Dev*Ops) to form DevSecOps, DevTestOps, DevWhateverOps... DevOps has you covered.

7.2. What DevOps is not

About the tools.

There are countless vendors out there who want to sell you their crummy tools.

7.3. To succeed at DevOps you must

Combine software development and information technology operations in the systems development life cycle with a focus on collaboration across the life cycle to deliver features, fixes, and updates frequently in close alignment with business objectives.

If the effort cannot combine both Dev and Ops in collaboration with this focus, the effort will most certainly fail.

7.4. If your effort doesn't

grok (i.e., understand intuitively) what DevOps is in practice, perform the necessary analysis of the existing culture, and devise a strategy for how to effect change, the effort will again likely fail.

I say this because culture is the largest influencer over the success of both Agile and DevOps, and ultimately over the path taken (i.e., the plans made).

7.5. Conway's Law states

Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure.

From "How Do Committees Invent?"

Followed with

Ways must be found to reward design managers for keeping their organizations lean and flexible.

This was written over 50 years ago.

If your communication structure is broken, so shall your systems be.

7.6. DevOps is really about

Providing the culture, methods and repeated practices to permit stakeholders to collaborate.

7.7. What is DevOps culture?

culture noun \ˈkəl-chər\

the set of shared attitudes, values, goals, and practices that characterizes an institution or organization

I love when a word means precisely what you need it to mean.

When the stakeholders share the same attitudes, values, and goals, and use the same tools, methods, and repeated practices for their particular disciplines, you have DevOps culture.

7.7.1. We were taught the requisite skills as children

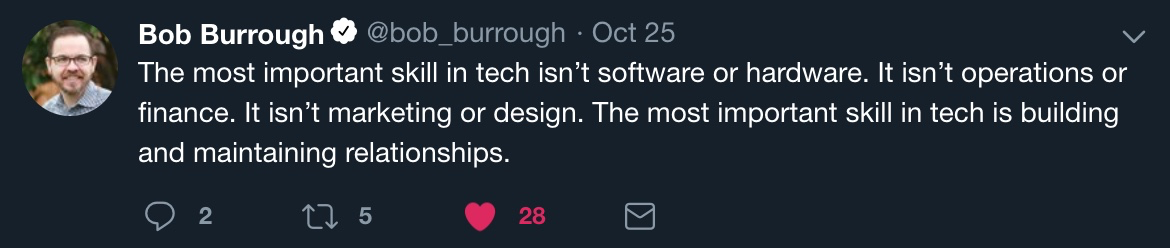

7.7.2. Maintaining relationships is your most important skill

7.7.3. Be quick... Be slow to...

7.7.4. The pressures of social media

7.8. How is DevOps related to Agile?

Agile Software Development is an umbrella term for a set of methods and practices based on the values and principles expressed in the Agile Manifesto.

In Agile, solutions evolve through collaboration between self-organizing, cross-functional teams utilizing the appropriate practices for their context.

DevOps builds on this.

7.9. How do they differ?

DevOps extends Agile methods and practices by adding communication and collaboration between

- development,

- security,

- quality assurance, and

- technology operations

functionaries as stakeholders into the broader effort to ensure software systems are delivered in a reliable, low-risk manner.

7.10. Why?

In Agile Software Development, individuals outside the immediate application development team, such as members of technology operations (e.g., network engineers, administrators, testers, security engineers), are rarely integrated into the effort.

7.11. What are the principles of DevOps?

As DevOps matures, several principles have emerged, namely the necessity for product teams to:

- Apply holistic thinking to solve problems,

- Develop and test against production-like environments,

- Deploy with repeatable and reliable processes,

- Remove the drudgery and uncertainty through automation,

- Validate and monitor operational quality, and

- Provide rapid, automated feedback to the stakeholders.

7.12. Much of this is achieved

Through the repeated practices of Continuous Integration (CI) and Continuous Delivery (CD), often conflated into simply "CI/CD" or "CICD".

WARNING: After tools, CICD is the next thing mistakenly thought to be the totality of DevOps.

7.13. What is Continuous Integration (CI)?

It is a repeated Agile software development practice lifted specifically from Extreme Programming, where members of a development team frequently integrate their work to detect integration issues as quickly as possible, thereby shifting discovery of issues "left" (i.e., earlier) in the software release cycle.

7.14. How?

Each integration is orchestrated through a CI service/orchestrator (e.g., Jenkins CI, Drone CI, GitLab Runners, Concourse CI) that, every time a predetermined trigger has been met (e.g., a commit is pushed), assembles a build, runs unit and integration tests, and then reports back with immediate feedback.
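To make the trigger, build, and report loop concrete, here is a minimal Bash sketch. It assumes a polling trigger (has a new revision appeared since the last build?), whereas orchestrators such as Drone CI are normally triggered by a webhook from the source code repository. The function name ci_check_and_run and its state file are hypothetical and not part of any CI product.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of "run the build and tests whenever a trigger fires".
# Trigger used here: the current revision differs from the last one built.

# ci_check_and_run STATE_FILE CURRENT_REV COMMAND [ARGS...]
ci_check_and_run() {
  local state_file=$1 current_rev=$2 last_rev="" status=0
  shift 2

  # Read the last revision that was built, if any.
  if [[ -f $state_file ]]; then
    last_rev=$(<"$state_file")
  fi

  # No new commits: the trigger has not been met.
  if [[ $current_rev == "$last_rev" ]]; then
    echo "no new commits; nothing to do"
    return 0
  fi

  # Trigger met: run the build/test command and report the outcome.
  if "$@"; then
    echo "build $current_rev: PASSED"
  else
    echo "build $current_rev: FAILED"
    status=1
  fi

  # Remember this revision so the same commit is not rebuilt on the next poll.
  printf '%s\n' "$current_rev" > "$state_file"
  return "$status"
}
```

A polling loop might call it as ci_check_and_run .last-built "$(git rev-parse HEAD)" make test. Recording the revision even when the build fails keeps a broken commit from being rebuilt on every poll; the next commit triggers a fresh run.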

7.15. CI best practices

7.15.1. Utilize a Configuration Management System

For the software's source code, where the mainline (i.e., master branch) holds the most recent working version, past releases are held in branches, and new features not yet merged into the mainline are worked in their own branches.
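The branch layout described above can be played out in a throwaway repository. This is a sketch, not the course's own workflow: the branch names release-1.0 and feature-greeting are hypothetical, and the commit identity is supplied through git's GIT_AUTHOR_* and GIT_COMMITTER_* environment variables so the script runs without any prior git configuration.

```bash
#!/usr/bin/env bash
# Mainline / release-branch / feature-branch layout in a scratch repository.
set -e
repo=$(mktemp -d)
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master   # mainline named "master", as in the course

export GIT_AUTHOR_NAME=student GIT_AUTHOR_EMAIL=student@example.com
export GIT_COMMITTER_NAME=student GIT_COMMITTER_EMAIL=student@example.com

echo "v1" > app.txt
git add app.txt
git commit -q -m "Initial working version"
git branch release-1.0                    # past release kept in its own branch

git checkout -q -b feature-greeting       # new work happens off the mainline
echo "greeting" >> app.txt
git commit -q -am "Add greeting feature"

git checkout -q master                    # merge into mainline once it works
git merge -q --no-ff -m "Merge feature-greeting into master" feature-greeting
git --no-pager log --oneline --graph --all
```

The release branch still holds the old working version while the mainline now carries the merged feature.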

7.15.2. Automate the build

By keeping build automation (e.g., Gradle, Apache Maven, Make) alongside the source code.

7.15.3. Employ one or more CI services/orchestrators

To perform source code analysis by automating formal code inspection and assessment.

7.15.4. Make builds self-testing

In other words, ingrain testing by including unit and integration tests (e.g., Spock, JUnit, Mockito, SoapUI, Go's testing package) with the source code, to be executed by the build automation, which is in turn executed by the CI service.

7.15.5. Never commit broken

Or untested source code to the CMS mainline; doing so risks breaking the build.

7.15.6. Stakeholders are expected to pre-flight new code

In their own workspace prior to committing it.
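A pre-flight can be as simple as running every check locally and refusing to go on if any fails. The sketch below is hypothetical (the preflight function is not part of the course material). It runs all checks rather than stopping at the first failure, so a developer sees every problem at once. Saved as an executable .git/hooks/pre-commit script that calls preflight, git would run it before each commit and abort the commit on a non-zero exit status.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight helper: run each check in the developer's own
# workspace and refuse to proceed if any fails ("never commit broken").

# preflight CHECK... where each CHECK is a shell command string
preflight() {
  local check failed=0
  for check in "$@"; do
    if bash -c "$check" >/dev/null 2>&1; then
      echo "ok    $check"
    else
      echo "FAIL  $check"
      failed=1
    fi
  done

  if (( failed )); then
    echo "pre-flight failed: fix the above before committing"
    return 1
  fi
  echo "pre-flight passed: safe to commit"
}

# Example for a Go project such as the course's helloworld (assumes the Go
# toolchain is installed and the current directory is the project root):
#   preflight 'test -z "$(gofmt -l .)"' 'go vet ./...' 'go test ./...'
```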

7.15.7. The CI service/orchestrator provides feedback

On the success or failure of a build integration to all its stakeholders.

7.16. What is Continuous Delivery?

It is a repeated software development practice of providing a rapid, reliable, low-risk product delivery achieved through automating all facets of building, testing, and deploying software.

7.16.1. Extending Continuous Integration (CI)

With additional stages/steps aimed at providing ongoing validation that a newly assembled software build meets all desired requirements and is thereby releasable.

7.16.2. Consistency

Is achieved through delivering applications into production via individual, repeatable pipelines of ingrained system configuration management and testing.

7.17. But wait. What's a pipeline?

A pipeline automates the various stages/steps (e.g., Static Application Security Testing (SAST), build, unit testing, Dynamic Application Security Testing (DAST), secure configuration acceptance compliance, integration, function and non-functional testing, delivery, and deployment) to enforce quality conformance.
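The essential property of a pipeline, stages run in a fixed order with the first failure stopping everything downstream, fits in a few lines of Bash. This is a minimal sketch only: the run_pipeline function and its "name=command" stage format are assumptions made for illustration, not the syntax of Drone CI or any other orchestrator.

```bash
#!/usr/bin/env bash
# Minimal pipeline sketch: stages run in order and the first failing stage
# stops the run, so later stages (e.g., delivery) never act on a build that
# failed an earlier quality gate.

# run_pipeline "NAME=COMMAND"...
run_pipeline() {
  local stage name cmd
  for stage in "$@"; do
    name=${stage%%=*}   # text before the first "="
    cmd=${stage#*=}     # text after the first "="
    echo "--- stage: $name"
    if ! bash -c "$cmd"; then
      echo "pipeline FAILED at stage: $name"
      return 1
    fi
  done
  echo "pipeline PASSED"
}

# Usage (commands are placeholders):
#   run_pipeline "sast=echo scanning" "build=echo building" \
#                "unit-test=echo testing" "deliver=echo pushing image"
```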

7.18. How is a pipeline manifested?

Each delivery pipeline is manifested as Pipeline as Code (i.e., software automation) accompanying the application's source code in its version control repository.

7.19. What underlies all of this?

The community of practice and I argue that DevOps will struggle without ubiquitous access to shared pools of software-configurable system resources and higher-level services that can be rapidly provisioned (i.e., the cloud).

That said, it is actually possible to do DevOps on mainframes. The video is in the context of continuous delivery, but read between the lines.

7.20. But really why do we automate err. code?

In 1991, Larry Wall, in the 1st edition of his book Programming Perl, put it best with "We will encourage you to develop the three great virtues of a programmer:

laziness,

impatience, and

hubris."

The second edition of the same book provided definitions for these terms

7.20.1. Why do I mention Larry Wall?

Well...

Once you have established yourself as an icon in your field it is important that you pay tribute to some of the great legends that came before you. This kind of gesture will create the illusion that you’re still humble and serve as a preemptive strike against anyone who has noticed what a callous and delusional ass you have become.

From the opening monologue to the Blue Man Group’s I Feel Love https://www.youtube.com/watch?v=8vBKI3ya-l0

I kid, but in all seriousness, the sentiment of this seminal, decades-old book still holds true.

Let me explain.

7.20.2. Laziness

The quality that makes you go to great effort to reduce overall energy expenditure. It makes you write labor-saving programs that other people will find useful, and document what you wrote so you don't have to answer so many questions about it. Hence, the first great virtue of a programmer. (p.609)

7.20.3. Impatience

The anger you feel when the computer is being lazy. This makes you write programs that don't just react to your needs, but actually anticipate them. Or at least pretend to. Hence, the second great virtue of a programmer. (p.608)

7.20.4. Hubris

Excessive pride, the sort of thing Zeus zaps you for. Also, the quality that makes you write (and maintain) programs that other people won't want to say bad things about. Hence, the third great virtue of a programmer. (p.607)

7.20.5. We automate for

- Faster, coordinated, repeatable, and therefore more reliable deployments.

- Discover bugs sooner, shifting their discovery left in the process.

- Accelerate the feedback loop between Dev and Ops. (Again, Ops is everyone not typically considered part of the development team.)

- Reduce tribal knowledge, where one group or person holds the keys to how things get done. Yep, this is about making us all replaceable.

- Reduce shadow IT (i.e., hardware or software within an enterprise that is not supported by IT. Just waiting for its day to explode.)

7.21. Monitoring

Once deployed, the work is done, right?

A development team's work is not complete once a product leaves CICD and enters production, especially under DevOps, where the development team includes members of Ops (e.g., security and technology operations).

7.21.1. The primary metric

Is working software, but this is not the only measurement. The key to successful DevOps is knowing how well the methodology and the software it produces are performing. Is the software truly dependable?

7.21.2. An understanding of performance

Is achieved by collecting and analyzing data produced by environments used for CICD and production.

7.21.3. Establish a baseline performance

So, that improvements can be gauged and anomalies detected.
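As a sketch of what "establish a baseline" can mean in practice, the Bash functions below compute the mean and population standard deviation of historical measurements (for example, response times in milliseconds, one per line) and test a new sample against them. The function names are hypothetical, and the 3-standard-deviation cut-off is an illustrative choice, not a rule from the course.

```bash
#!/usr/bin/env bash
# Baseline from historical samples on stdin, then anomaly test for new ones.

# baseline < samples  ->  prints "MEAN STDDEV"
baseline() {
  awk '{ sum += $1; sumsq += $1 * $1; n++ }
       END {
         if (n == 0) exit 1
         mean = sum / n
         var = sumsq / n - mean * mean
         if (var < 0) var = 0          # guard against rounding error
         printf "%.2f %.2f\n", mean, sqrt(var)
       }'
}

# is_anomaly MEAN STDDEV SAMPLE  ->  exit status 0 if SAMPLE is an anomaly
is_anomaly() {
  awk -v m="$1" -v sd="$2" -v x="$3" \
    'BEGIN { d = x - m; if (d < 0) d = -d; exit !(d > 3 * sd) }'
}
```

For example, the samples 100, 110, 90 and 100 give a baseline of "100.00 7.07"; a later sample of 200 is flagged, while 105 is not.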

7.21.4. Set reaction thresholds

To formulate and prioritize reactions, weighting factors such as the frequency at which an anomaly arises and who is impacted.
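One way to weight those factors is a simple score: occurrences multiplied by a weight for who is impacted, mapped to a priority. The sketch below is hypothetical throughout; the audience categories, weights and thresholds are made-up placeholders that a team would tune for its own product.

```bash
#!/usr/bin/env bash
# Hypothetical reaction-threshold sketch: weight how often an anomaly occurs
# by who it impacts and map the resulting score to a priority.

# priority OCCURRENCES_PER_DAY AUDIENCE
priority() {
  local freq=$1 audience=$2 weight score
  case $audience in
    all-users)  weight=10 ;;
    some-users) weight=5 ;;
    internal)   weight=1 ;;
    *) echo "unknown audience: $audience" >&2; return 2 ;;
  esac
  score=$(( freq * weight ))
  if (( score >= 100 )); then
    echo "P1 (score $score): fix now"
  elif (( score >= 20 )); then
    echo "P2 (score $score): add to the backlog"
  else
    echo "P3 (score $score): monitor, or address through user training"
  fi
}
```

For example, priority 12 all-users yields "P1 (score 120): fix now", while priority 3 internal lands at P3.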

7.21.5. Reacting

Could be as simple as operations instructing users through training to not do something that triggers the anomaly, or more ideally, result in an issue being entered into the product's backlog culminating in the development team delivering a fix into production.

7.21.6. Gaps in CICD

Are surfaced through monitoring, resulting in, for example, additional testing for an issue discovered in production.

Yep. News flash. DevOps will not entirely stop all bugs or vulnerabilities from making it into production, but this was never the point.

7.21.7. Eliminating waste

Through re-scoping of requirements, re-prioritizing of a backlog, or the deprecation of unused features. Again, all surfaced through monitoring.

7.22. Crawl, walk, run

7.22.1. Ultimately, DevOps is a goal

- With DevOps one does not simply hit the ground running.

- One must first crawl, then walk, and then ultimately run while embracing the necessary culture change, methods, and repeated practices.

- Collaboration and automation are expected to continually improve so as to achieve more frequent and more reliable releases.

8. Reading list

AntiPatterns: Refactoring Software, Architectures, and Projects in Crisis William J. Brown, Raphael C. Malveau, Hays W. "Skip" McCormick, and Thomas J. Mowbray ISBN: 978-0-471-19713-3 Apr 1998

Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation (Addison-Wesley Signature Series (Fowler)) David Farley and Jez Humble ISBN-13: 978-0321601919 August 2010

The DevOps Handbook: How to Create World-Class Agility, Reliability, and Security in Technology Organizations Gene Kim, Jez Humble, Patrick Debois, and John Willis ISBN-13: 978-1942788003 October 2016

Accelerate: The Science of Lean Software and DevOps: Building and Scaling High Performing Technology Organizations Nicole Forsgren PhD, Jez Humble, and Gene Kim ISBN-13: 978-1942788331 March 27, 2018

Site Reliability Engineering: How Google Runs Production Systems 1st Edition Betsy Beyer, Chris Jones, Jennifer Petoff, and Niall Richard Murphy ISBN-13: 978-1491929124 April 16, 2016 Also, available online at https://landing.google.com/sre/book/index.html

Release It!: Design and Deploy Production-Ready Software 2nd Edition Michael T. Nygard ISBN-13: 978-1680502398 January 18, 2018

The SPEED of TRUST: The One Thing That Changes Everything Stephen M. R. Covey ISBN-13: 978-1416549000 February 5, 2008 The gist of the book can be found at SlideShare https://www.slideshare.net/nileshchamoli/the-speed-of-trust-13205957

RELATIONSHIP TRUST: The 13 Behaviors of High-Trust Leaders Mini Session Franklin Covey Co. https://archive.franklincovey.com/facilitator/minisessions/handouts/13_Behaviors_MiniSession_Handout.pdf

How to Deal With Difficult People Ujjwal Sinha Oct 25, 2014 The SlideShare can be found here https://www.slideshare.net/abhiujjwal/how-2-deal-wid-diiclt-ppl

Leadership Secrets of the Rogue Warrior: A Commando's Guide to Success Richard Marcinko w/ John Weisman ISBN-13: 978-0671545154 June 1, 1996

Security Engineering: A Guide To Building Dependable Distributed Systems Ross Anderson ISBN-13: 978-0470068526 April 14, 2008 The second edition of this book can be downloaded in whole from https://www.cl.cam.ac.uk/~rja14/book.html and Mr Anderson has released chapters from his 3rd edition under development.

How Do Committees Invent? Melvin E. Conway Copyright 1968, F. D. Thompson Publications, Inc. http://www.melconway.com/Home/Conways_Law.html

The Pragmatic Programmer: Your Journey To Mastery, 20th Anniversary Edition (2nd Edition) David Thomas and Andrew Hunt ISBN-13: 978-0135957059 September 23, 2019

9. Now the hands-on part

In this class, you will spin up a development and toolchain environment.

NOTE

- This class makes use of NOTE sections to call out things that are important to know or to drop a few tidbits. Reading these notes may save you some aggravation.

- The diagrams used in this document are authored in PlantUML. Each diagram is written in the PlantUML domain-specific language (DSL) and can be found in a separate file in the plantuml folder at the root of the project. The class project uses a GitHub workflow to render each file into a scalable vector graphic (SVG), placing each in the diagrams folder, also at the root of the class project.


9.1. Configuring environmental variables

If your environment makes use of an HTTP proxy or SSL inspection, you will need to configure environment variables for this class.

On Mac OS X or *NIX environments

The following set_env.sh BASH script is included in the root of the project and can be used to configure the UNIX environment variables, but must be adjusted for your specific environment.

```bash
#!/usr/bin/env bash

# Copyright (C) 2019 Michael Joseph Walsh - All Rights Reserved
# You may use, distribute and modify this code under the
# terms of the license.
#
# You should have received a copy of the license with
# this file. If not, please email <[email protected]>

# Run in the current shell via
#
#   . ./set_env.sh
#
# to set the proxy settings to the hard-coded PROXY value below.
# Modify for your environment.
#
#   . ./set_env.sh no_proxy
#
# will unset all proxy-related environment variables.

set_proxy=true

if [[ "${1:-}" = "no_proxy" ]]; then
  set_proxy=false
fi

if [[ $set_proxy = true ]]; then
  export PROXY=http://gatekeeper.mitre.org:80
  echo "Setting proxy environment variables to $PROXY"
  export proxy=$PROXY
  export HTTP_PROXY=$PROXY
  export http_proxy=$PROXY
  export HTTPS_PROXY=$PROXY
  export https_proxy=$PROXY
  export ALL_PROXY=$PROXY
  export NO_PROXY="127.0.0.1,localhost,.mitre.org,.local,192.168.0.9,192.168.0.10,192.168.0.11,192.168.0.206,192.168.0.202,192.168.0.203,192.168.0.204,192.168.0.205"
  export no_proxy=$NO_PROXY
else
  echo "Unsetting proxy environment variables"
  unset PROXY proxy HTTP_PROXY http_proxy HTTPS_PROXY https_proxy NO_PROXY no_proxy ALL_PROXY
fi

export CA_CERTIFICATES=http://employeeshare.mitre.org/m/mjwalsh/transfer/MITRE%20BA%20ROOT.crt,http://employeeshare.mitre.org/m/mjwalsh/transfer/MITRE%20BA%20NPE%20CA-3%281%29.crt
echo "Setting CA_CERTIFICATES environment variable to $CA_CERTIFICATES"

export VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS=0
echo "Setting VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS to $VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS"

# Force the use of the vagrant cacert.pem file
echo "Unsetting CURL_CA_BUNDLE and SSL_CERT_FILE environment variables"
unset CURL_CA_BUNDLE SSL_CERT_FILE
```

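From the root of the project, source the script into the current terminal session (sourcing it with the leading dot, rather than running it as a separate process, is what lets the exported variables persist in your shell):

```bash
. ./set_env.sh
```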

If you're doing this class on your MITRE Life cycle running OS X while on the MITRE network, you will NOT want to set any proxy-related environment variables, so execute the script in this manner:

```bash
. ./set_env.sh no_proxy
```

If you have no HTTP proxy and no SSL inspection to be concerned about (such as when running the class off of MITRE's corporate network), the alternative is to source the unset.sh Bash script to unset all these values:

```bash
#!/usr/bin/env bash

# Copyright (C) 2019 Michael Joseph Walsh - All Rights Reserved
# You may use, distribute and modify this code under the
# terms of the license.
#
# You should have received a copy of the license with
# this file. If not, please email <[email protected]>

# Run in the current shell via
#
#   . ./unset.sh

unset no_proxy NO_PROXY ALL_PROXY PROXY proxy
unset https_proxy http_proxy HTTP_PROXY HTTPS_PROXY
unset ftp_proxy FTP_PROXY
unset ca_certificates CA_CERTIFICATES
```

Source it into the terminal session via:

```bash
. ./unset.sh
```
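To confirm which of the two scripts was last sourced, a small helper can print each variable they manage along with its current value, or "<unset>". The show_proxy_env function is a hypothetical convenience, not part of the class project; it relies on Bash's indirect expansion (${!name}).

```bash
#!/usr/bin/env bash
# Print each proxy/certificate variable managed by set_env.sh and unset.sh,
# showing "<unset>" for any that are not set in the current shell.
show_proxy_env() {
  local v
  for v in PROXY proxy HTTP_PROXY http_proxy HTTPS_PROXY https_proxy \
           ALL_PROXY NO_PROXY no_proxy CA_CERTIFICATES \
           VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS; do
    printf '%-16s %s\n' "$v" "${!v-<unset>}"
  done
}
```

After . ./set_env.sh you would expect every line to show a value; after . ./unset.sh the proxy lines should read "<unset>".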

On Windows

If you are on Windows, perform the following to set the environment variables, adjusting for your environment:

- In the Windows taskbar, enter "edit the system environment variables" into Search Windows and select the icon with the corresponding name.

- The System Properties window will likely open in the background, so you may need to find it and bring it forward.

- In the System Properties window's Advanced tab, select the Environment Variables... button.

- In the Environment Variables window that opens, under User variables for..., press New... to open a New User Variable window, then enter each Variable Name and its respective Value for each pair in the table below.

| Variable Name | Value |
|---|---|
| proxy | http://gatekeeper.mitre.org:80 |
| http_proxy | http://gatekeeper.mitre.org:80 |
| https_proxy | http://gatekeeper.mitre.org:80 |
| no_proxy | 127.0.0.1,localhost,.mitre.org,.local,192.168.0.9,192.168.0.10,192.168.0.11,192.168.0.206,192.168.0.202,192.168.0.203,192.168.0.204,192.168.0.205 |
| CA_CERTIFICATES | http://pki.mitre.org/MITRE%20BA%20Root.crt,http://pki.mitre.org/MITRE%20BA%20NPE%20CA-3%281%29.crt |
| VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS | 0 |

If you're on a MITRE Institute Lab PC, you will want to set all of these variables.

If you're doing this class on your MITRE Life cycle running Windows (I have yet to verify a Windows MITRE Life cycle), you will likely NOT want to set proxy, http_proxy, https_proxy, no_proxy, or any other proxy-related environment variables. You will only need to set the CA_CERTIFICATES and VAGRANT_ALLOW_PLUGIN_SOURCE_ERRORS environment variables.

NOTE

- The certificate URLs must be percent-encoded (e.g., ( as %28 and ) as %29, as in the table above) for the parentheses to work.

- On Windows, you may inadvertently cut-and-paste whitespace characters (e.g., tabs, spaces) along with a value, causing the subsequent Ansible automation to fail.
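Both pitfalls in this note can be guarded against before a value is pasted into an environment variable. The hypothetical encode_cert_url function below (runnable in Git Bash) trims stray leading and trailing whitespace, then percent-encodes spaces and parentheses. It is deliberately narrow, handling only the characters these certificate URLs contain, and is not a general-purpose URL encoder.

```bash
#!/usr/bin/env bash
# Trim stray whitespace and percent-encode space, "(" and ")" in a URL.
encode_cert_url() {
  local url=$1
  url=${url#"${url%%[![:space:]]*}"}   # drop leading whitespace
  url=${url%"${url##*[![:space:]]}"}   # drop trailing whitespace
  url=${url//" "/%20}
  url=${url//"("/%28}
  url=${url//")"/%29}
  printf '%s\n' "$url"
}
```

For example, encode_cert_url 'http://pki.mitre.org/MITRE BA Root.crt' prints http://pki.mitre.org/MITRE%20BA%20Root.crt, and an already-encoded URL passes through unchanged.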

9.2. VirtualBox

You will need to install VirtualBox, a general-purpose full virtualizer for x86 hardware.

The class has been verified to work with VirtualBox 6.1.14. Newer versions may or may not work.
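To see where an installed VirtualBox stands relative to the verified release, its version can be compared programmatically. A sketch, assuming the version string looks like the usual VBoxManage --version output (e.g., 6.1.14r140239) and that sort -V (GNU coreutils, as shipped with Git Bash and most Linux distributions) is available; the function name check_vbox_version is hypothetical.

```bash
#!/usr/bin/env bash
# Compare an installed VirtualBox version with the one the class was verified on.
VERIFIED_VBOX="6.1.14"

check_vbox_version() {
  local installed=${1%%r*}   # drop the "r<build>" suffix
  local highest
  highest=$(printf '%s\n' "$VERIFIED_VBOX" "$installed" | sort -V | tail -n 1)
  if [[ $installed == "$VERIFIED_VBOX" ]]; then
    echo "VirtualBox $installed: verified version"
  elif [[ $highest == "$installed" ]]; then
    echo "VirtualBox $installed: newer than $VERIFIED_VBOX, may or may not work"
  else
    echo "VirtualBox $installed: older than $VERIFIED_VBOX"
  fi
}

# Usage:
#   check_vbox_version "$(VBoxManage --version)"
```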

9.2.1. Installing VirtualBox

When I teach the MITRE Institute class, VirtualBox is assumed to be installed, but below are the instructions for installing it on Windows 10.

- Open your browser to https://www.virtualbox.org/wiki/Downloads

- Click the Windows hosts link under VirtualBox 6.1.14 platform packages.

- Find and click the installer to install.

You will also need to turn off Hyper-V, Virtual Machine Platform, Windows Hypervisor Platform, Windows Sandbox, and Windows Subsystem for Linux if installed:

- Click the Windows Start button and then type "turn Windows features on or off" into the search bar.

- Select the icon with the corresponding name.

- This will open the Windows Features window. Unselect the Hyper-V, Virtual Machine Platform, Windows Hypervisor Platform, Windows Sandbox, and Windows Subsystem for Linux checkboxes if enabled, and then click OK.

The same site has the Mac OS X download. The installation is less involved.

If you're using Linux, use your package manager. For example, to install on Arch Linux, one would use sudo pacman -Syu virtualbox.

NOTE

- On Windows 10, if VirtualBox is only partially working (generally, just flaking out), check that you have turned off all the features listed above.

9.3. Git Bash

Git Bash is git packaged for Windows with bash (a command-line shell) and a collection of other, separate *NIX utilities, such as ssh, scp, cat, and find, compiled for Windows.

9.3.1. Installing Git Bash

If you are on Windows, you'll need to install git.

1. Download from https://git-scm.com/download/win

2. Click the installer.

3. Click Next until you reach the Configuring the line ending conversions page, then select Checkout as-is, commit Unix-style line endings.

4. Then Next, Next, Next...

5. Don't open Git Bash from the final window, as it will not have the environment variables set. Go on to step 6.

6. On the Windows task bar, enter git into Search Windows, then select Git Bash. Use Git Bash instead of Command or PowerShell.

On OS X, git can be installed via Homebrew, or you can install the Git client directly from https://git-scm.com/download/mac.

9.4. Retrieve the course material

If you are reading this on paper and have nothing else, you only have a small portion of the class material. You will need to download the class project containing all the automation to spin up a DevOps toolchain and development virtual machines, etc.

In a shell (for the purposes of the MITRE Institute class, this means Git Bash), clone the project from https://github.com/nemonik/hands-on-DevOps.git via git like so:

git -c http.sslVerify=false clone https://github.com/nemonik/hands-on-DevOps.git

Output will resemble (i.e., will not be precisely the same):

Cloning into 'hands-on-DevOps'...

remote: Enumerating objects: 52, done.

remote: Counting objects: 100% (52/52), done.

remote: Compressing objects: 100% (39/39), done.

remote: Total 5236 (delta 21), reused 28 (delta 11), pack-reused 5184

Receiving objects: 100% (5236/5236), 75.60 MiB | 13.40 MiB/s, done.

Resolving deltas: 100% (1578/1578), done.

9.5. Infrastructure as code (IaC)

This class uses Infrastructure as code (IaC) to set up the class environment (i.e., two or more virtual machines that will later be referred to as "vagrants"). IaC is the process of provisioning and configuring (i.e., managing) computer systems through code, rather than by directly manipulating the systems by hand (i.e., through manual processes).

This class uses the Vagrant and Ansible IaC frameworks, and the following sections unpack each.

9.5.1. Hashicorp Packer

This class uses Packer, a command-line utility that can be used for many use cases, but which I primarily use to build EC2 and VirtualBox machine images (in the case of VirtualBox, to create Vagrant boxes). In this class, if you select the nemonik/alpine310 base box in the ConfigurationVars module defined in the configuration_vars.rb file at the root of the project, Packer will be invoked to create the nemonik/alpine310 box, and this image will then be used to create the nemonik/devops_alpine310 box on which all the vagrants will be based. If you select ubuntu/bionic64 or centos/7, these boxes will be retrieved from Hashicorp and then used to build their respective boxes, nemonik/devops_bionic64 and nemonik/devops_7, on top of which the class' vagrants are built.
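The box selection just described boils down to a lookup table. The Ruby sketch below is illustrative only: DEVOPS_BOXES and devops_box_for are hypothetical names, not code from the class project, and they encode nothing beyond the box names given in the paragraph above.

```ruby
# Hypothetical lookup (not the project's code): base box chosen in
# configuration_vars.rb => the DevOps box the vagrants are built on, and
# whether the base box must first be built locally with Packer.
DEVOPS_BOXES = {
  'nemonik/alpine310' => { devops_box: 'nemonik/devops_alpine310', built_by_packer: true },
  'ubuntu/bionic64'   => { devops_box: 'nemonik/devops_bionic64',  built_by_packer: false },
  'centos/7'          => { devops_box: 'nemonik/devops_7',         built_by_packer: false }
}.freeze

def devops_box_for(base_box)
  DEVOPS_BOXES.fetch(base_box) { raise ArgumentError, "unsupported base box: #{base_box}" }
end

info = devops_box_for('nemonik/alpine310')
puts "#{info[:devops_box]} (Packer build needed: #{info[:built_by_packer]})"
```

Raising on an unknown name mirrors the fact that only these three base boxes are offered as choices.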

9.5.1.1. Packer documentation and source

Packer's documentation can be found at https://packer.io/docs/.

Its canonical (i.e., authoritative) source can be found at https://github.com/hashicorp/packer.

9.5.1.2. Installing Packer

Retrieve the installation executable for your machine's operating system from

https://releases.hashicorp.com/packer/1.5.1/

If you're on OS X you want https://releases.hashicorp.com/packer/1.5.1/packer_1.5.1_darwin_amd64.zip and if you're on Windows you want https://releases.hashicorp.com/packer/1.5.1/packer_1.5.1_windows_amd64.zip.

You may also be able to install Packer via your Linux operating system's package manager.
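Picking the right archive can also be scripted. Here is a minimal sketch, assuming a hypothetical packer_zip_url helper, a 64-bit (amd64) machine, and that the Linux archive follows the same naming pattern as the OS X and Windows URLs above. It reuses the host_os patterns that the project's configuration_vars.rb uses to detect the host operating system.

```ruby
require 'rbconfig'

PACKER_VERSION = '1.5.1'

# Hypothetical helper: builds the releases.hashicorp.com download URL for the
# Packer zip matching the host OS. Assumes an amd64 machine.
def packer_zip_url(host_os = RbConfig::CONFIG['host_os'], version: PACKER_VERSION)
  platform =
    case host_os
    when /mswin|msys|mingw|cygwin|bccwin|wince|emc/ then 'windows'
    when /darwin|mac os/ then 'darwin'
    when /linux/ then 'linux'
    else raise ArgumentError, "unsupported host OS: #{host_os.inspect}"
    end
  "https://releases.hashicorp.com/packer/#{version}/packer_#{version}_#{platform}_amd64.zip"
end

puts packer_zip_url
```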

Installing on OS X

To install on OS X, simply unzip the archive and copy packer to /usr/local/bin as root, for example:

cd ~/Downloads

unzip ~/Downloads/packer_1.5.1_darwin_amd64.zip

Archive: /Users/mjwalsh/Downloads/packer_1.5.1_darwin_amd64.zip

inflating: packer

sudo cp ~/Downloads/packer /usr/local/bin

packer version

Packer v1.5.1

Installing on Windows

To install on Windows:

- Right click the downloaded zip file.

- Select Extract All.

- Enter C:\Program Files\packer_1.5.1_windows_amd64 as the path.

- Select Extract.

- Continue (give admin permission).

- In the Windows taskbar, enter env into Search Windows and select Edit the system environment variables.

- In the System Properties window's Advanced tab, select the Environment Variables... button.

- In the Environment Variables window that opens, under User variables for..., select the Path variable, then select Edit... to open an Edit environment variable window.

- Select New and enter C:\Program Files\packer_1.5.1_windows_amd64.
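To confirm that the Path change took effect, open a new Git Bash window and run packer version, or, if you have a Ruby interpreter handy, use a PATH lookup like the minimal sketch below. which_executable is a hypothetical helper, not part of the class project; on Windows it tries each extension listed in PATHEXT (e.g., .EXE).

```ruby
# Hypothetical helper (not part of the class project): returns the full path
# of the first executable named `cmd` found on PATH, or nil if none is found.
def which_executable(cmd)
  # On Windows, PATHEXT lists the extensions to try; elsewhere try the bare name.
  exts = ENV['PATHEXT'] ? ENV['PATHEXT'].split(';') : ['']
  ENV['PATH'].to_s.split(File::PATH_SEPARATOR).each do |dir|
    exts.each do |ext|
      candidate = File.join(dir, "#{cmd}#{ext}")
      return candidate if File.file?(candidate) && File.executable?(candidate)
    end
  end
  nil
end

puts which_executable('packer') || 'packer was not found on PATH'
```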

9.5.1.3. Packer project explained

The Packer project used to build the nemonik/alpine310 box is found in box/packer-alpine310 and looks like so:

[Diagram: folder and file layout of box/packer-alpine310 (PlantUML)]

The folders and files have the following purpose:

- alpine310.json - the Packer template used to orchestrate the creation of the nemonik_alpine310.box. It defines the number of CPUs, the memory, the ISO used to install the VM's OS, the root password, the automation to install the OS, the scripts to call to further configure the VM, and the resulting box.

- build_box.sh - a bash shell script used to run the Packer build.

- configs - a folder holding the Vagrantfile template used in post-processing the VM.

  - vagrantfile.tpl - the template used in post-processing the VM.

- http - a folder holding the answers file downloaded via wget into the VM.

  - answers - holds all the answers provided to setup-alpine to configure the VM.

- isos - a folder that serves as a cache for the ISOs downloaded to install Alpine, so that they don't have to be repeatedly downloaded.

- nemonik_alpine310.box - the Vagrant box created by Packer.

- packer_cache - a folder; a Packer cache used to speed production of the box.

- remove_box.sh - a bash script used to remove the nemonik_alpine310.box from Vagrant.

- scripts - a folder holding the shell scripts used to configure the VM.

  - configure.sh - the script that configures the VM beyond what is directed in the builders' boot_command in alpine310.json.

The bulk of the work is in alpine310.json, http/answers and scripts/configure.sh.

The contents of alpine310.json:

{

"description": "Build Alpine 3.10 x86_64 vagrant box",

"variables": {

"vm_name": "alpine-3.10.0-x86_64",

"cpus": "1",

"memory": "1024",

"disk_size": "61440",

"iso_local_url": "isos/x86_64/alpine-virt-3.10.0-x86_64.iso",

"iso_download_url": "http://dl-cdn.alpinelinux.org/alpine/v3.10/releases/x86_64/alpine-virt-3.10.0-x86_64.iso",

"iso_checksum": "b3d8fe65c2777edcbc30b52cde7f5ae21dff8ecda612d5fe7b10d5c23cda40c4",

"iso_checksum_type": "sha256",

"root_password": "vagrant",

"ssh_username": "root",

"ssh_password": "vagrant"

},

"provisioners": [

{

"type": "shell",

"execute_command": "/bin/sh -ux '{{.Path}}'",

"script": "scripts/configure.sh"

}

],

"builders": [

{

"type": "virtualbox-iso",

"headless": false,

"vm_name": "{{user `vm_name`}}",

"format": "ova",

"guest_os_type": "Linux26_64",

"guest_additions_mode": "disable",

"disk_size": "{{user `disk_size`}}",

"iso_urls": [

"{{user `iso_local_url`}}",

"{{user `iso_download_url`}}"

],

"iso_checksum": "{{user `iso_checksum`}}",

"iso_checksum_type": "{{user `iso_checksum_type`}}",

"http_directory": "http",

"communicator": "ssh",

"ssh_username": "{{user `ssh_username`}}",

"ssh_password": "{{user `ssh_password`}}",

"ssh_wait_timeout": "10m",

"shutdown_command": "/sbin/poweroff",

"boot_wait": "30s",

"boot_command": [

"root<enter><wait>",

"ifconfig eth0 up && udhcpc -i eth0<enter><wait10>",

"wget http://{{ .HTTPIP }}:{{ .HTTPPort }}/answers<enter><wait>",

"setup-alpine -f $PWD/answers<enter><wait5>",

"{{user `root_password`}}<enter><wait>",

"{{user `root_password`}}<enter><wait>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"y<enter>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"<wait10><wait10><wait10>",

"mount /dev/sda2 /mnt<enter>",

"echo 'PermitRootLogin yes' >> /mnt/etc/ssh/sshd_config<enter>",

"umount /dev/sda2<enter>",

"sync<enter>",

"reboot<enter>"

],

"hard_drive_interface": "sata",

"vboxmanage": [

[

"modifyvm",

"{{.Name}}",

"--memory",

"{{user `memory`}}"

],

[

"modifyvm",

"{{.Name}}",

"--cpus",

"{{user `cpus`}}"

],

[

"modifyvm",

"{{.Name}}",

"--rtcuseutc",

"on"

]

]

}

],

"post-processors": [

[

{

"type": "vagrant",

"vagrantfile_template": "configs/vagrantfile.tpl",

"output": "nemonik_alpine310.box"

}

]

]

}

Execution of the builders' boot_command is very time sensitive, so if the build fails for you, you may need to add additional <wait10>s.
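Rather than adding the waits by hand, the padding can be scripted. The following is a minimal sketch, assuming a hypothetical pad_boot_waits helper that is not part of the class project. It appends extra <wait10> tokens to every boot_command entry made up solely of wait tokens, and leaves keystroke entries such as root<enter><wait> untouched.

```ruby
require 'json'

# Hypothetical helper (not part of the class project): returns a copy of a
# parsed Packer template in which every boot_command entry consisting only
# of <wait>/<waitN> tokens gets `extra` additional <wait10> tokens appended.
def pad_boot_waits(template, extra: 1)
  padded = JSON.parse(JSON.generate(template)) # cheap deep copy
  padded.fetch('builders').each do |builder|
    next unless builder['boot_command']

    builder['boot_command'] = builder['boot_command'].map do |step|
      step.match?(/\A(<wait\d*>)+\z/) ? step + '<wait10>' * extra : step
    end
  end
  padded
end

# Usage (rewrites the file; JSON.pretty_generate changes its formatting):
# path = 'box/packer-alpine310/alpine310.json'
# File.write(path, JSON.pretty_generate(pad_boot_waits(JSON.parse(File.read(path)))))
```

Copying the template first keeps the original data untouched, so you can compare before and after.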

9.5.1.4. Packer execution

Along the way, a modal will pop up asking you "Do you want the application “packer” to accept incoming network connections?" Give your approval; otherwise Packer won't be able to do its job. The command-line output of Packer when creating the nemonik_alpine310.box will resemble:

INFO: Creating nemonik/devops_alpine310 box...

setting PROXYOPTS to none in http/answers

building nemonik_alpine310.box via packer

virtualbox-iso: output will be in this color.

==> virtualbox-iso: Retrieving ISO

==> virtualbox-iso: Trying isos/x86_64/alpine-virt-3.10.0-x86_64.iso

==> virtualbox-iso: Trying isos/x86_64/alpine-virt-3.10.0-x86_64.iso?checksum=sha256%3Ab3d8fe65c2777edcbc30b52cde7f5ae21dff8ecda612d5fe7b10d5c23cda40c4

==> virtualbox-iso: isos/x86_64/alpine-virt-3.10.0-x86_64.iso?checksum=sha256%3Ab3d8fe65c2777edcbc30b52cde7f5ae21dff8ecda612d5fe7b10d5c23cda40c4 => /Users/mjwalsh/Development/workspace/DevOps_class/alpine-playground/box/packer-alpine310/packer_cache/0172be7009f3c62b985d67dec5495188c7596bcd.iso

==> virtualbox-iso: Starting HTTP server on port 8922

==> virtualbox-iso: Creating virtual machine...

==> virtualbox-iso: Creating hard drive...

==> virtualbox-iso: Creating forwarded port mapping for communicator (SSH, WinRM, etc) (host port 3684)

==> virtualbox-iso: Executing custom VBoxManage commands...

virtualbox-iso: Executing: modifyvm alpine-3.10.0-x86_64 --memory 1024

virtualbox-iso: Executing: modifyvm alpine-3.10.0-x86_64 --cpus 1

virtualbox-iso: Executing: modifyvm alpine-3.10.0-x86_64 --rtcuseutc on

==> virtualbox-iso: Starting the virtual machine...

==> virtualbox-iso: Waiting 30s for boot...

==> virtualbox-iso: Typing the boot command...

A little VirtualBox window for the VM will also pop up, so that you can monitor progress as Alpine is installed. At some point Alpine will reboot and Packer will continue outputting:

==> virtualbox-iso: Using ssh communicator to connect: 127.0.0.1

==> virtualbox-iso: Waiting for SSH to become available...

==> virtualbox-iso: Provisioning with shell script: scripts/configure.sh

==> virtualbox-iso: + rc-update -u

virtualbox-iso: * Caching service dependencies ... [ ok ]

==> virtualbox-iso: + setup-apkcache

==> virtualbox-iso: + apk update

virtualbox-iso: Enter apk cache directory (or '?' or 'none') [/var/cache/apk]: fetch http://dl-cdn.alpinelinux.org/alpine/v3.10/main/x86_64/APKINDEX.tar.gz

virtualbox-iso: v3.10.4-14-g975b6a3945 [http://dl-cdn.alpinelinux.org/alpine/v3.10/main/]

virtualbox-iso: OK: 5669 distinct packages available

==> virtualbox-iso: + apk upgrade -U --available

virtualbox-iso: OK: 87 MiB in 52 packages

==> virtualbox-iso: + apk add -U bash bash-completion sudo curl

virtualbox-iso: (1/10) Installing readline (8.0.0-r0)

virtualbox-iso: (2/10) Installing bash (5.0.0-r0)

virtualbox-iso: Executing bash-5.0.0-r0.post-install

virtualbox-iso: (3/10) Installing bash-completion (2.8-r0)

virtualbox-iso: (4/10) Installing openrc-bash-completion (0.41.2-r1)

virtualbox-iso: (5/10) Installing ca-certificates (20190108-r0)

virtualbox-iso: (6/10) Installing nghttp2-libs (1.39.2-r0)

virtualbox-iso: (7/10) Installing libcurl (7.66.0-r0)

virtualbox-iso: (8/10) Installing curl (7.66.0-r0)

virtualbox-iso: (9/10) Installing kmod-bash-completion (24-r1)

virtualbox-iso: (10/10) Installing sudo (1.8.27-r2)

virtualbox-iso: Executing busybox-1.30.1-r3.trigger

virtualbox-iso: Executing ca-certificates-20190108-r0.trigger

virtualbox-iso: OK: 94 MiB in 62 packages

==> virtualbox-iso: + echo http://dl-cdn.alpinelinux.org/alpine/v3.10/community

==> virtualbox-iso: + apk add -U virtualbox-guest-additions virtualbox-guest-modules-virt

virtualbox-iso: fetch http://dl-cdn.alpinelinux.org/alpine/v3.10/community/x86_64/APKINDEX.tar.gz

virtualbox-iso: (1/3) Installing virtualbox-guest-additions (6.0.8-r1)

virtualbox-iso: Executing virtualbox-guest-additions-6.0.8-r1.pre-install

virtualbox-iso: (2/3) Installing virtualbox-guest-modules-virt (4.19.98-r0)

virtualbox-iso: (3/3) Installing virtualbox-guest-additions-openrc (6.0.8-r1)

virtualbox-iso: Executing busybox-1.30.1-r3.trigger

virtualbox-iso: Executing kmod-24-r1.trigger

virtualbox-iso: OK: 98 MiB in 65 packages

==> virtualbox-iso: + rc-update add virtualbox-guest-additions

virtualbox-iso: * service virtualbox-guest-additions added to runlevel default

==> virtualbox-iso: + echo vboxsf

==> virtualbox-iso: + echo 'UseDNS no'

==> virtualbox-iso: + adduser -D vagrant

==> virtualbox-iso: + chpasswd

==> virtualbox-iso: + echo vagrant:vagrant

==> virtualbox-iso: chpasswd: password for 'vagrant' changed

==> virtualbox-iso: + mkdir -pm 700 /home/vagrant/.ssh

==> virtualbox-iso: + chown -R vagrant:vagrant /home/vagrant/.ssh

==> virtualbox-iso: + wget -O /home/vagrant/.ssh/authorized_keys https://raw.githubusercontent.com/mitchellh/vagrant/master/keys/vagrant.pub

==> virtualbox-iso: Connecting to raw.githubusercontent.com (151.101.0.133:443)

==> virtualbox-iso: authorized_keys 100% |********************************| 409 0:00:00 ETA

==> virtualbox-iso: + chmod 644 /home/vagrant/.ssh/authorized_keys

==> virtualbox-iso: + adduser vagrant wheel

==> virtualbox-iso: + echo 'Defaults exempt_group=wheel'

==> virtualbox-iso: + echo '%wheel ALL=NOPASSWD:ALL'

==> virtualbox-iso: + sed -i.bak '[email protected]/[email protected]/[email protected]' /etc/passwd

==> virtualbox-iso: + rm /etc/passwd.bak

==> virtualbox-iso: + echo

==> virtualbox-iso: + rm -Rf /var/cache/apk/APKINDEX.6456cd1a.tar.gz /var/cache/apk/APKINDEX.d8b2a6f4.tar.gz /var/cache/apk/bash-5.0.0-r0.045599ea.apk /var/cache/apk/bash-completion-2.8-r0.c0f500d3.apk /var/cache/apk/ca-certificates-20190108-r0.c0ec382f.apk /var/cache/apk/curl-7.66.0-r0.86434890.apk /var/cache/apk/installed /var/cache/apk/kmod-bash-completion-24-r1.ea84cd3e.apk /var/cache/apk/libcurl-7.66.0-r0.ae6524b8.apk /var/cache/apk/nghttp2-libs-1.39.2-r0.6f35d6f8.apk /var/cache/apk/openrc-bash-completion-0.41.2-r1.198430a1.apk /var/cache/apk/readline-8.0.0-r0.32186548.apk /var/cache/apk/sudo-1.8.27-r2.fe94d45e.apk /var/cache/apk/virtualbox-guest-additions-6.0.8-r1.b025c3f0.apk /var/cache/apk/virtualbox-guest-additions-openrc-6.0.8-r1.b528fb1a.apk /var/cache/apk/virtualbox-guest-modules-virt-4.19.98-r0.f198f848.apk

==> virtualbox-iso: + dd 'if=/dev/zero' 'of=/EMPTY' 'bs=1M'

It will take some time to zero out the unused portion of the root drive, but Packer will continue:

==> virtualbox-iso: 59959+0 records in

==> virtualbox-iso: 59958+0 records out

==> virtualbox-iso: + rm -f /EMPTY

==> virtualbox-iso: + cat /dev/null

==> virtualbox-iso: + history -c

==> virtualbox-iso: + sync

==> virtualbox-iso: + sync

==> virtualbox-iso: + sync

==> virtualbox-iso: + exit 0

==> virtualbox-iso: Gracefully halting virtual machine...

==> virtualbox-iso: Preparing to export machine...

virtualbox-iso: Deleting forwarded port mapping for the communicator (SSH, WinRM, etc) (host port 3684)

==> virtualbox-iso: Exporting virtual machine...

virtualbox-iso: Executing: export alpine-3.10.0-x86_64 --output output-virtualbox-iso/alpine-3.10.0-x86_64.ova

==> virtualbox-iso: Deregistering and deleting VM...

==> virtualbox-iso: Running post-processor: vagrant

==> virtualbox-iso (vagrant): Creating Vagrant box for 'virtualbox' provider

virtualbox-iso (vagrant): Unpacking OVA: output-virtualbox-iso/alpine-3.10.0-x86_64.ova

virtualbox-iso (vagrant): Renaming the OVF to box.ovf...

virtualbox-iso (vagrant): Using custom Vagrantfile: configs/vagrantfile.tpl

virtualbox-iso (vagrant): Compressing: Vagrantfile

virtualbox-iso (vagrant): Compressing: alpine-3.10.0-x86_64-disk001.vmdk

virtualbox-iso (vagrant): Compressing: box.ovf

virtualbox-iso (vagrant): Compressing: metadata.json

Build 'virtualbox-iso' finished.

==> Builds finished. The artifacts of successful builds are:

--> virtualbox-iso: 'virtualbox' provider box: nemonik_alpine310.box

9.5.2. Vagrant

This class uses Vagrant, a command-line utility for managing the life cycle of virtual machines. The VMs it manages are vagrants in the sense that they are not meant to hang around in the same place for very long.

Unless you want to pollute your machine with every imaginable programming language, framework and library version, you'll find yourself often creating a virtual machine (VM) for each software project, sometimes more than one. And if you're like me in the past, you'll end up with a VirtualBox full of VMs. If you haven't gone about this the right way, you'll end up wondering which VM went with which project and how you created it. The anti-pattern around this problem is to write documentation. A better way, one that aligns with DevOps repeatable practices, is to create automation to provision and configure your development VMs. This is where Vagrant comes in, as it is "a command-line utility for managing the life cycle of virtual machines."

9.5.2.1. Vagrant documentation and source

Vagrant's documentation can be found at

https://www.vagrantup.com/docs/index.html

Its canonical (i.e., authoritative) source can be found at

https://github.com/hashicorp/vagrant/

Vagrant is written in Ruby. In fact, a Vagrantfile is written in a Ruby DSL, and I make full use of this to extend the functionality of the Vagrantfile.

9.5.2.2. Installing Vagrant

1. If you are on Windows or OS X, download Vagrant from

https://releases.hashicorp.com/vagrant/2.2.10/

The class has been verified to work with version 2.2.10. Newer versions may or may not work.

If you're using Linux, use your operating system's package manager to install vagrant. For example, to install on Arch Linux one would use

sudo pacman -Syu vagrant

2. Click on the installer once downloaded and follow along. On Windows, the installer may stall while calculating for a bit and may bury modals you'll need to respond to in the Windows Task bar, so keep an eye out for that. The installer will automatically add the vagrant command to your system path so that it is available on the command line. If it is not found, the documentation advises you to try logging out and logging back into your system; this is sometimes necessary on Windows in particular. Windows will require a reboot, so remember to come back and complete step 3 if you are on the MITRE corporate network.

3. If you're not on the MITRE corporate network, please skip this step.

  - On Windows, use the File Explorer to replace the existing C:\Hashicorp\vagrant\embedded\cacert.pem file with the project's vagrant_files/cacert.pem.

  - On Mac OS X, copy it to /opt/vagrant/embedded as root using sudo cp vagrant_files/cacert.pem /opt/vagrant/embedded/.

NOTE

- Vagrant respects the SSL_CERT_FILE and CURL_CA_BUNDLE environment variables, which are used to point to CA certificate bundles. If you run into SSL errors, you may have SSL_CERT_FILE and/or CURL_CA_BUNDLE set, requiring you to add the MITRE CA certificates to the files these environment variables specify. If you use the set_env.sh at the root of the project, it will unset these environment variables, forcing vagrant to use the cacert.pem file you replaced above.

- The same site has the Mac OS X download, whose installation is less involved.
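To check whether either of these variables is set in your current environment, you could use a small check like the following minimal sketch. ca_bundle_overrides is a hypothetical helper, not part of the class project or of Vagrant. It reports each of the two variables that is set, along with whether the file it names actually exists.

```ruby
# Environment variables Vagrant respects for locating a CA certificate bundle.
CA_BUNDLE_VARS = %w[SSL_CERT_FILE CURL_CA_BUNDLE].freeze

# Hypothetical helper: returns { name => { path:, exists: } } for each of the
# variables above that is set to a non-empty value in `env`.
def ca_bundle_overrides(env = ENV)
  CA_BUNDLE_VARS.each_with_object({}) do |name, found|
    path = env[name]
    next if path.nil? || path.empty?

    found[name] = { path: path, exists: File.file?(path) }
  end
end

overrides = ca_bundle_overrides
if overrides.empty?
  puts 'Neither SSL_CERT_FILE nor CURL_CA_BUNDLE is set.'
else
  overrides.each do |name, info|
    puts "#{name}=#{info[:path]} (file #{info[:exists] ? 'exists' : 'is missing'})"
  end
end
```

Accepting the environment as a parameter keeps the check easy to try against a plain Hash.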

9.5.2.3. The Vagrantfile explained

The Vagrantfile found at the root of the project describes how to provision and configure one or more virtual machines.

Vagrant's own documentation puts it best:

Vagrant is meant to run with one Vagrantfile per project, and the Vagrantfile is supposed to be committed to version control. This allows other developers involved in the project to check out the code, run vagrant up, and be on their way. Vagrantfiles are portable across every platform Vagrant supports.
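As the comments in the project's Vagrantfile note, Vagrant starts at your current path and moves upward looking for a Vagrantfile, which is why vagrant commands work from any sub-folder of the project. The following is a minimal Ruby sketch of that upward search. find_vagrantfile_dir is a hypothetical name, and the sketch only mirrors the behavior described here; it is not Vagrant's actual implementation.

```ruby
require 'pathname'

# Hypothetical helper: walks upward from `start` and returns the first
# directory containing a file named Vagrantfile, or nil at the filesystem root.
def find_vagrantfile_dir(start = Dir.pwd)
  dir = Pathname.new(File.expand_path(start))
  loop do
    return dir.to_s if (dir + 'Vagrantfile').file?
    return nil if dir.root?

    dir = dir.parent
  end
end

puts find_vagrantfile_dir || 'No Vagrantfile found in this directory or its parents'
```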

If we were instead provisioning Amazon EC2 instances, we'd alternatively use Terraform, a tool for building, changing, and versioning infrastructure.

The following sub-sections break the Vagrantfile apart and discuss each of its sections in turn.

9.5.2.3.1. Modelines

# -*- mode: ruby -*-

# vi: set ft=ruby :

These lines tell your text editor (e.g., emacs or vim) which editing mode to use when you edit the Vagrantfile. Line one is a modeline for emacs and line two is a modeline for vim.

9.5.2.3.2. Setting extra variables for Ansible roles

The following lines:

# Vagrant will start at your current path and then move upward looking

# for a Vagrant file. The following will provide the path for the found

# Vagrantfile, so you can execute `vagrant` commands on the command-line

# anywhere in the project a Vagrantfile doesn't already exist.

vagrantfilePath = ""

if File.dirname(__FILE__).end_with?('Vagrantfile')

vagrantfilePath = File.dirname(File.dirname(__FILE__))

else

vagrantfilePath = File.dirname(__FILE__)

end

# Holds all the configuration variables and convenience methods for accessing them

require File.join(vagrantfilePath, 'configuration_vars.rb')

in the Vagrantfile at the root of the project include the configuration_vars.rb Ruby module. This module defines the Ansible extra_vars used by the Ansible roles found in ansible/roles and box/ansible/role, as well as the bash scripting templates used by the Vagrantfile at the root of the project, by box/Vagrantfile and by the bash scripts found in box/. For example, you would update the version of GitLab used by the class by updating :gitlab_version here.
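The module's as_string method builds, by hand-escaping quotes and braces, the JSON string that appears to fill the ANSIBLE_EXTRA_VARS placeholder passed to ansible-playbook via --extra-vars. For comparison only, here is a minimal sketch of the same idea using Ruby's standard json and shellwords libraries instead of manual escaping. This is a swapped-in technique, not the project's code. extra_vars_arg is a hypothetical name, and, like as_string, it turns every scalar value into a string.

```ruby
require 'json'
require 'shellwords'

# Hypothetical helper (not the project's as_string): serializes a vars Hash to
# JSON and shell-escapes it as a single --extra-vars argument. Scalars become
# strings (as as_string does); arrays become arrays of strings.
def extra_vars_arg(vars)
  normalized = vars.each_with_object({}) do |(key, value), h|
    h[key.to_s] = value.is_a?(Array) ? value.map(&:to_s) : value.to_s
  end
  "--extra-vars=#{Shellwords.escape(JSON.generate(normalized))}"
end

puts extra_vars_arg(gitlab_version: '13.2.3', nodes: 2)
```

Letting a JSON serializer and a shell escaper do the work avoids hand-counting backslashes, at the cost of a different (but equivalent once the shell parses it) escaped form.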

The contents of the configuration_vars.rb Ruby module will resemble:

#-*- mode: ruby -*-

# vi: set ft=ruby :

# Copyright (C) 2020 Michael Joseph Walsh - All Rights Reserved

# You may use, distribute and modify this code under the

# terms of the license.

#

# You should have received a copy of the license with

# this file. If not, please email <[email protected]>

module ConfigurationVars

# Define variables

VARS = {

# The network block the cluster and apps will be in

network_prefix: "192.168.0",

# # Add OpenEBS drives ('yes'/'no')

# openebs_drives: 'yes',

openebs_drives: 'no',

# Sets the OpenEBS drive size in GB

openebs_drive_size_in_gb: 100,

# Provision and configure development vagrant ('yes'/'no')

# create_development: 'no',

create_development: 'yes',

# development_is_worker_node: 'yes',

# The number of nodes to provision the Kubernetes cluster. One will be a master.

nodes: 2,

# nodes: 1,

# The Vagrant box to base our DevOps box on. Pick just one.

base_box: 'centos/7',

base_box_version: '2004.01',

# base_box: 'ubuntu/bionic64',

# base_box_version: '20200304.0.0',

# base_box: 'nemonik/alpine310',

# base_box_version: '0',

vagrant_root_drive_size: '80GB',

ansible_version: '2.10.3',

default_retries: '60',

default_delay: '10',

docker_timeout: '300',

docker_retries: '60',

docker_delay: '10',

k3s_version: 'v1.19.4+k3s1',

k3s_cluster_secret: 'kluster_secret',

kubectl_version: 'v1.19.4',

kubectl_checksum: 'sha256:7df333f1fc1207d600139fe8196688303d05fbbc6836577808cda8fe1e3ea63f',

kubernetes_dashboard: 'yes',

kubernetes_dashboard_version: 'v2.0.0',

traefik: 'yes',

traefik_version: '1.7.26',

traefik_http_port: '80',

traefik_admin_port: '8080',

traefik_host: '192.168.0.206',

metallb: 'yes',

metallb_version: 'v0.9.5',

kompose_version: '1.18.0',

docker_compose_version: '1.27.4',

docker_compose_pip_version: '1.25.0rc2',

helm_cli_version: '3.2.1',

helm_cli_checksum: '018f9908cb950701a5d59e757653a790c66d8eda288625dbb185354ca6f41f6b',

registry_version: '2.7.1',

registry: 'yes',

registry_host: '192.168.0.10',

registry_port: '5000',

passthrough_registry: 'yes',

passthrough_registry_host: '192.168.0.10',

passthrough_registry_port: '5001',

registry_deploy_via: 'docker-compose',

gitlab: 'yes',

gitlab_version: '13.2.3',

gitlab_host: '192.168.0.202',

gitlab_port: '80',

gitlab_ssh_port: '10022',

gitlab_user: 'root',

drone: 'yes',

drone_version: '1.9.0',

drone_runner_docker_version: '1.4.0',

drone_host: '192.168.0.10',

drone_cli_version: 'v1.2.1',

plantuml_server: 'yes',

plantuml_server_version: 'latest',

plantuml_host: '192.168.0.203',

plantuml_port: '80',

taiga: 'yes',

taiga_version: 'latest',

taiga_host: '192.168.0.204',

taiga_port: '80',

sonarqube: 'yes',

sonarqube_version: '8.5.1-community',

sonarqube_host: '192.168.0.205',

sonarqube_port: '9000',

sonar_scanner_cli_version: '4.3.0.2102',

inspec_version: '4.18.39',

python_container_image: 'yes',

python_version: '2.7.18',

golang_container_image: 'yes',

golang_sonarqube_scanner_image: 'yes',

golang_version: '1.15',

selenium_standalone_chrome_version: '3.141',

standalone_firefox_container_image: 'yes',

selenium_standalone_firefox_version: '3.141',

owasp_zap2docker_stable_image: 'yes',

zap2docker_stable_version: '2.8.0',

openwhisk: 'yes',

openwhisk_host: '192.168.0.207',

cache_path: '/vagrant/cache',

images_cache_path: '/vagrant/cache/images',

create_cache: 'yes',

host_os: (

host_os = RbConfig::CONFIG['host_os']

case host_os

when /mswin|msys|mingw|cygwin|bccwin|wince|emc/

'windows'

when /darwin|mac os/

'macosx'

when /linux/

'linux'

when /bsd/

'unix'

else

raise "unknown os: #{host_os.inspect}"

end

)

}

VARS[:ansible_python_version] = (

if VARS[:base_box].downcase.include? 'centos' and VARS[:base_box].to_s.include? '7'

'python2'

else

'python3'

end

)

def ConfigurationVars.as_string( http_proxy, https_proxy, ftp_proxy, no_proxy, certs)

vars = VARS

vars[:http_proxy] = (!http_proxy ? "" : http_proxy)

vars[:https_proxy] = (!https_proxy ? "" : https_proxy)

vars[:ftp_proxy] = (!ftp_proxy ? "" : ftp_proxy)

vars[:no_proxy] = (!no_proxy ? "" : no_proxy)

vars[:CA_CERTIFICATES] = ''

unless certs.nil? || certs == ''

vars[:CA_CERTIFICATES] = certs

end

vars_string = ''

vars.each do |key, value|

if ( ( key == :CA_CERTIFICATES ) && ( !value.nil? ) && value != '' )

vars_string = vars_string + "\\\"#{key}\\\":\\\["

value.each { |item|

vars_string = vars_string + "\\\"#{item}\\\","

}

vars_string = vars_string.chop + '\\],'

else

if (value.is_a? Integer)

value = value.to_s

end

vars_string = vars_string + "\\\"#{key}\\\":\\\"#{value}\\\","

end

end

return '\\{' + vars_string.chop + '\\}'

end

DETERMINE_OS_TEMPLATE = <<~SHELL

echo Determining OS...

os=""

if [[ $(command -v lsb_release | wc -l) == *"1"* ]]; then

os="$(lsb_release -is)-$(lsb_release -cs)"

elif [ -f "/etc/os-release" ]; then

if [[ $(cat /etc/os-release | grep -i alpine | wc -l) -gt "0" ]]; then

os="Alpine"

elif [[ $(cat /etc/os-release | grep -i "CentOS Linux 7" | wc -l) -gt "0" ]]; then

os="CentOS 7"

fi

else

echo -n "Cannot determine OS."

exit -1

fi

SHELL

OS_PACKAGES_FROM_CACHE_TEMPLATE = <<~SHELL

echo OS packages from cache...

mkdir -p /tmp/root-cache

box="#{VARS[:base_box]}"

case $os in

"Alpine")

package_manager="apk"

;;

"Ubuntu-bionic")

package_manager="apt"

;;

"CentOS 7")

package_manager="yum"

;;

*)

echo -n "${os} not supported."

exit -1

;;

esac

if [ -f "/vagrant/cache/TYPE/${box}/${package_manager}.tar.gz" ]; then

update=true

if [ -f "/tmp/root-cache/${package_manager}.tar.gz" ]; then

if ((`stat -c%s "/vagrant/cache/TYPE/${box}/${package_manager}.tar.gz"`==`stat -c%s "/tmp/root-cache/${package_manager}.tar.gz"`)); then

update=false

fi

fi

if ($update == true); then

echo Installing ${box} ${package_manager} packages from cache...

cd /tmp/root-cache

cp /vagrant/cache/TYPE/${box}/${package_manager}.tar.gz ${package_manager}.tar.gz

tar zxf ${package_manager}.tar.gz

if [ -d "${package_manager}" ]; then

case $os in

"Alpine")

echo "installing apk packages from cache..."

apk add --repositories-file=/dev/null --allow-untrusted --no-network apk/*.apk

;;

"Ubuntu-bionic")

#echo "installing apt packages from cache..."

#cd apt/archives

#dpkg -i ./*.deb

#apt --fix-broken install

echo "not yet reliable..."

;;

"CentOS 7")

mv yum /var/cache/

;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

fi

rm -Rf ${package_manager}

else

echo No new ${box} packages in cache...

fi

else

echo No cached ${box} packages...

fi

SHELL

ROOT_INSTALL_ANSIBLE_DEPENDENCIES_TEMPLATE = <<~SHELL

echo Root installing ansible dependencies...

case $os in

"Alpine")

# install Alpine packages

apk add python3 python3-dev py3-pip musl-dev libffi-dev libc-dev py3-cryptography make gcc libressl-dev

;;

"Ubuntu-bionic")

apt update

apt upgrade -y

apt install -y python3 python3-dev python3-pip make gcc

;;

"CentOS 7")

yum update -y

yum install -y epel-release

yum install -y python-pip python-devel make gcc python-cffi

# pip uninstall -y bcrypt

# yum --enablerepo=epel install -y python2-bcrypt

;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

SHELL

USER_INSTALL_DEPENDENCIES_TEMPLATE = <<~SHELL

echo User installing ansible dependencies...

#{ConfigurationVars::VARS[:ansible_python_version]} -m pip install --user --upgrade pip

/home/vagrant/.local/bin/pip install --user --upgrade setuptools

/home/vagrant/.local/bin/pip install --user paramiko ansible==#{ConfigurationVars::VARS[:ansible_version]}

case $os in

"Alpine"|"Ubuntu-bionic")

;;

"CentOS 7")

# /home/vagrant/.local/bin/pip uninstall -y bcrypt

# /home/vagrant/.local/bin/pip install -y bcrypt==3.1.7

;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

SHELL

INSTALL_ANSIBLE_TEMPLATE = <<~SHELL

case $os in

"Alpine"|"Ubuntu-bionic"|"CentOS 7")

#{ConfigurationVars::VARS[:ansible_python_version]} -m pip install --user --upgrade pip setuptools

#{ConfigurationVars::VARS[:ansible_python_version]} -m pip install --user paramiko ansible==#{ConfigurationVars::VARS[:ansible_version]}

;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

SHELL

RUN_ANSIBLE_TEMPLATE = <<~SHELL

echo Running ansible-playbook PLAYBOOK_PATH...

case $os in

"Alpine"|"Ubuntu-bionic"|"CentOS 7")

n=0

until [ "$n" -ge #{ConfigurationVars::VARS[:default_retries]} ]; do

/home/vagrant/.local/bin/ansible-galaxy install --force --roles-path ansible/roles --role-file requirements.yml && break

n=$((n+1))

sleep #{ConfigurationVars::VARS[:default_delay]}

done

PYTHONUNBUFFERED=1 ANSIBLE_FORCE_COLOR=true /home/vagrant/.local/bin/ansible-playbook PLAYBOOK_PATH --limit="LIMIT" --extra-vars=ANSIBLE_EXTRA_VARS --extra-vars='ansible_python_interpreter="/usr/bin/env #{ConfigurationVars::VARS[:ansible_python_version]}"' --vault-password-file=VAULT_PASS_PATH -vvvv --connection=local --inventory=INVENTORY_PATH

;;

# "CentOS 7")

# n=0

# until [ "$n" -ge #{ConfigurationVars::VARS[:default_retries]} ]; do

# ansible-galaxy install --force --roles-path ansible/roles --role-file requirements.yml && break

# n=$((n+1))

# sleep #{ConfigurationVars::VARS[:default_delay]}

# done

# PYTHONUNBUFFERED=1 ANSIBLE_FORCE_COLOR=true ansible-playbook PLAYBOOK_PATH --limit="LIMIT" --extra-vars=ANSIBLE_EXTRA_VARS --vault-password-file=VAULT_PASS_PATH -vvvv --connection=local --inventory=INVENTORY_PATH

# ;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

SHELL

RESIZE_ROOT_TEMPLATE = <<~SHELL

echo Resizing root...

case $os in

"Alpine")

# install Alpine packages

echo "resize root not yet handled."

;;

"Ubuntu-bionic")

echo "resize root not yet handled."

;;

"CentOS 7")

echo "Resizing root volume..."

yum -y install cloud-utils-growpart

growpart /dev/sda 1

xfs_growfs /

;;

*)

echo "${os} not supported." 1>&2

exit -1

;;

esac

SHELL

SITE_PACKAGES_FROM_CACHE_TEMPLATE = <<~SHELL
  echo Site packages from cache...
  if [ -f "/vagrant/cache/TYPE/${box}/site-packages.tar.gz" ]; then
    update=true
    if [ -f "/tmp/root-cache/site-packages.tar.gz" ]; then
      # skip unpacking when the cached tarball is the same size as the copy from the last run
      if [ "$(stat -c%s "/vagrant/cache/TYPE/${box}/site-packages.tar.gz")" -eq "$(stat -c%s "/tmp/root-cache/site-packages.tar.gz")" ]; then
        update=false
      fi
    fi
    if [ "$update" = true ]; then
      echo Unpacking #{ VARS[:ansible_python_version] } site-packages from cache...
      mkdir -p /tmp/root-cache
      cd /tmp/root-cache
      cp /vagrant/cache/TYPE/${box}/site-packages.tar.gz site-packages.tar.gz
      cd /usr/lib/#{ VARS[:ansible_python_version] }*
      tar zxvf /tmp/root-cache/site-packages.tar.gz
    else
      echo No new updates to site-packages in cache...
    fi
  fi
SHELL

USER_CACHED_CONTENT_TEMPLATE = <<~SHELL
  echo Use cached content...
  mkdir -p /tmp/vagrant-cache
  box="#{VARS[:base_box]}"

  if [ "${os}" == "CentOS 7" ] && [ -f "/vagrant/cache/TYPE/${box}/rvm.tar.gz" ]; then
    update=true
    if [ -f "/tmp/vagrant-cache/rvm.tar.gz" ]; then
      # skip unpacking when the cached tarball is the same size as the copy from the last run
      if [ "$(stat -c%s "/vagrant/cache/TYPE/${box}/rvm.tar.gz")" -eq "$(stat -c%s "/tmp/vagrant-cache/rvm.tar.gz")" ]; then
        update=false
      fi
    fi
    if [ "$update" = true ]; then
      echo Unpacking /home/vagrant/[.rvm .gnupg .bash_profile .bashrc .profile .mkshrc .zshrc .zlogin] from cache...
      cp /vagrant/cache/TYPE/${box}/rvm.tar.gz /tmp/vagrant-cache/rvm.tar.gz
      cd /home/vagrant/
      tar zxvf /tmp/vagrant-cache/rvm.tar.gz
    else
      echo No new updates to /home/vagrant/[.rvm .gnupg .bash_profile .bashrc .profile .mkshrc .zshrc .zlogin] in cache...
    fi
  else
    echo No /home/vagrant/[.rvm .gnupg .bash_profile .bashrc .profile .mkshrc .zshrc .zlogin] in cache...
  fi

  if [ -f "/vagrant/cache/TYPE/${box}/cache.tar.gz" ]; then
    update=true
    if [ -f "/tmp/vagrant-cache/cache.tar.gz" ]; then
      if [ "$(stat -c%s "/vagrant/cache/TYPE/${box}/cache.tar.gz")" -eq "$(stat -c%s "/tmp/vagrant-cache/cache.tar.gz")" ]; then
        update=false
      fi
    fi
    if [ "$update" = true ]; then
      echo Unpacking /home/vagrant/.cache from cache...
      cp /vagrant/cache/TYPE/${box}/cache.tar.gz /tmp/vagrant-cache/cache.tar.gz
      cd /home/vagrant/
      tar zxvf /tmp/vagrant-cache/cache.tar.gz
    else
      echo No new updates to /home/vagrant/.cache in cache...
    fi
  else
    echo No /home/vagrant/.cache cache...
  fi

  if [ -f "/vagrant/cache/TYPE/${box}/local.tar.gz" ]; then
    update=true
    if [ -f "/tmp/vagrant-cache/local.tar.gz" ]; then
      if [ "$(stat -c%s "/vagrant/cache/TYPE/${box}/local.tar.gz")" -eq "$(stat -c%s "/tmp/vagrant-cache/local.tar.gz")" ]; then
        update=false
      fi
    fi
    if [ "$update" = true ]; then
      echo Unpacking /home/vagrant/.local from cache...
      cp /vagrant/cache/TYPE/${box}/local.tar.gz /tmp/vagrant-cache/local.tar.gz
      cd /home/vagrant/
      tar -zxvf /tmp/vagrant-cache/local.tar.gz
    else
      echo No new updates to /home/vagrant/.local in cache...
    fi
  else
    echo No /home/vagrant/.local cache...
  fi

  if [ -f "/vagrant/cache/TYPE/${box}/gem.tar.gz" ]; then
    update=true
    if [ -f "/tmp/vagrant-cache/gem.tar.gz" ]; then
      if [ "$(stat -c%s "/vagrant/cache/TYPE/${box}/gem.tar.gz")" -eq "$(stat -c%s "/tmp/vagrant-cache/gem.tar.gz")" ]; then
        update=false
      fi
    fi
    if [ "$update" = true ]; then
      echo Unpacking /home/vagrant/.gem from cache...
      cp /vagrant/cache/TYPE/${box}/gem.tar.gz /tmp/vagrant-cache/gem.tar.gz
      cd /home/vagrant
      tar -zxvf /tmp/vagrant-cache/gem.tar.gz
    else
      echo No new updates to /home/vagrant/.gem in cache...
    fi
  else
    echo No /home/vagrant/.gem cache...
  fi
SHELL

end
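The cache templates are meant to unpack a tarball from /vagrant/cache only when it differs from the copy a previous run left in /tmp, using file size as a cheap stand-in for "changed". The decision itself can be sketched in plain Ruby as below. The helper name refresh_needed? and the temporary paths are hypothetical; this is an illustration of the rule, not code from the Vagrantfile.

```ruby
require 'tmpdir'

# Hypothetical restatement of the size check used by the cache templates.
# Returns true when the cached tarball should be copied and unpacked.
def refresh_needed?(cached_path, previous_copy_path)
  return false unless File.file?(cached_path)        # nothing in the cache at all
  return true unless File.file?(previous_copy_path)  # never unpacked before
  # Equal sizes are treated as "unchanged"; a heuristic, not a checksum.
  File.size(cached_path) != File.size(previous_copy_path)
end

# Usage: simulate the cache directory and the copy left behind in /tmp.
Dir.mktmpdir do |dir|
  cached   = File.join(dir, 'gem.tar.gz')       # stands in for /vagrant/cache/TYPE/${box}/gem.tar.gz
  previous = File.join(dir, 'previous.tar.gz')  # stands in for /tmp/vagrant-cache/gem.tar.gz
  File.write(cached, 'v1')
  puts refresh_needed?(cached, previous)        # prints "true": nothing unpacked yet
end
```

Comparing sizes is fast but misses a rebuilt tarball that happens to have the same size; comparing digests (for example with Digest::SHA256.file) would be stricter at the cost of reading both files.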

9.5.2.3.3. Automatically installing and removing the necessary Vagrant plugins

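Vagrant can be extended through plugins, and this class makes use of several of them. The Vagrantfile installs any required plugin that is missing, uninstalls any unwanted plugin that is present, and, if it changed anything, re-executes the same vagrant command so the change takes effect. One of these plugins, vagrant-vbguest, is installed unless the vagrants' base operating system is Alpine, in which case it is uninstalled because vagrant-vbguest does not currently support Alpine.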

uninstall_plugins = %w( vagrant-cachier vagrant-alpine )
required_plugins = %w( vagrant-timezone vagrant-proxyconf vagrant-certificates vagrant-disksize vagrant-reload )

if os.downcase.include?('alpine')
  # as alpine is currently not supported by vagrant-vbguest
  uninstall_plugins << 'vagrant-vbguest'
else
  required_plugins << 'vagrant-vbguest'
end

# Uninstall the following plugins
plugin_uninstalled = false
uninstall_plugins.each do |plugin|
  if Vagrant.has_plugin?(plugin)
    system "vagrant plugin uninstall #{plugin}"
    plugin_uninstalled = true
  end
end

# Require the following plugins
plugin_installed = false
required_plugins.each do |plugin|
  unless Vagrant.has_plugin?(plugin)
    system "vagrant plugin install #{plugin}"
    plugin_installed = true
  end
end

#system "vagrant plugin update"

# if plugins were installed or uninstalled, restart
if plugin_installed || plugin_uninstalled
  puts "restarting"
  exec "vagrant #{ARGV.join(' ')}"
end
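The plugin bookkeeping above mixes two concerns: deciding what should change and then shelling out to vagrant plugin. A side-effect-free sketch of just the decision, which can be exercised without Vagrant, might look like the following. The constant and method names are hypothetical; the plugin lists are copied from the Vagrantfile.

```ruby
# Hypothetical, side-effect-free version of the plugin selection above.
BASE_REQUIRED = %w[vagrant-timezone vagrant-proxyconf vagrant-certificates
                   vagrant-disksize vagrant-reload].freeze
BASE_UNWANTED = %w[vagrant-cachier vagrant-alpine].freeze

# Given the base OS name and the plugins already installed, return what
# to install and what to uninstall.
def plugin_plan(os, installed)
  required = BASE_REQUIRED.dup
  unwanted = BASE_UNWANTED.dup
  if os.downcase.include?('alpine')
    unwanted << 'vagrant-vbguest' # vagrant-vbguest does not support Alpine
  else
    required << 'vagrant-vbguest'
  end
  { install: required - installed, uninstall: unwanted & installed }
end

# A restart is needed whenever the plan changes anything.
def restart_needed?(plan)
  !(plan[:install].empty? && plan[:uninstall].empty?)
end

plan = plugin_plan('CentOS 7', %w[vagrant-reload vagrant-cachier])
puts plan.inspect
puts restart_needed?(plan)
```

Keeping the decision in a pure function makes the Alpine special case easy to check in isolation; the Vagrantfile would still loop over the two lists to call vagrant plugin install and uninstall.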

9.5.2.3.4. Inserting proxy settings via host environment variables

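Later in the Vagrantfile, a bit of code uses the vagrant-proxyconf plugin to configure the proxy settings of the vagrants (i.e., the transient VMs) from the host's http_proxy, https_proxy, ftp_proxy, and no_proxy environment variables. Proxying is enabled only when http_proxy or https_proxy is set on the host.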

# Set proxy settings for all vagrants
#
# Depends on install of vagrant-proxyconf plugin.
#
# To use:
#
# 1. Install the plugin: `vagrant plugin install vagrant-proxyconf`
# 2. Set environment variables for `http_proxy`, `https_proxy`, `ftp_proxy`, and `no_proxy`
#
# For example:
#
# ```
# export http_proxy=
# export https_proxy=
# export ftp_proxy=
# export no_proxy=
# ```
if ENV['http_proxy'] || ENV['https_proxy']
  config.proxy.http = ENV['http_proxy']
  config.proxy.https = ENV['https_proxy']
  config.proxy.ftp = ENV['ftp_proxy']
  config.proxy.no_proxy = ENV['no_proxy']
  config.proxy.enabled = { docker: false }

  if ARGV.include?('up') || ARGV.include?('provision')
    puts "INFO: HTTP Proxy variables set.".green
    puts "INFO: http_proxy = #{ config.proxy.http }".green
    puts "INFO: https_proxy = #{ config.proxy.https }".green
    puts "INFO: ftp_proxy = #{ config.proxy.ftp }".green
    puts "INFO: no_proxy = #{ config.proxy.no_proxy }".green
  end
else
  if ARGV.include?('up') || ARGV.include?('provision')
    puts "INFO: No http_proxy or https_proxy environment variables are set.".green
  end

  config.proxy.http = nil
  config.proxy.https = nil
  config.proxy.ftp = nil
  config.proxy.no_proxy = nil
  config.proxy.enabled = false
end
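The rule the proxy block follows (enable proxying only if http_proxy or https_proxy is set, otherwise clear everything) can be pulled out into a small function that takes any hash-like environment. The sketch below is hypothetical and only mirrors the values the Vagrantfile assigns to config.proxy; it does not call vagrant-proxyconf itself.

```ruby
# Hypothetical helper: compute the values the Vagrantfile assigns to
# config.proxy.* from an environment hash (ENV by default).
def proxy_settings(env = ENV)
  if env['http_proxy'] || env['https_proxy']
    {
      http: env['http_proxy'],
      https: env['https_proxy'],
      ftp: env['ftp_proxy'],
      no_proxy: env['no_proxy'],
      enabled: { docker: false } # same value the Vagrantfile uses for config.proxy.enabled
    }
  else
    { http: nil, https: nil, ftp: nil, no_proxy: nil, enabled: false }
  end
end

# Usage with an explicit hash instead of the real environment.
settings = proxy_settings('https_proxy' => 'http://proxy.example:3128',
                          'no_proxy' => 'localhost,127.0.0.1')
puts settings.inspect
```

Passing the environment in as a parameter lets the proxy logic be checked without exporting variables in a shell; inside the Vagrantfile each key would map onto the matching config.proxy setting.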

9.5.2.3.5. Inserting enterprise CA certificates

Later still, a bit of code uses the vagrant-certificates plugin to inject the specified certificates into the vagrants. This is useful, for example, if your enterprise network has a firewall (or appliance) that performs SSL interception. The very existence of this plugin suggests that many others also have to deal with the havoc SSL interception brings to development.

# To add Enterprise CA Certificates to all vagrants
#
# Depends on the install of the vagrant-certificates plugin
#
# To use:
#
# 1. Install the plugin: `vagrant plugin install vagrant-certificates`.
# 2. Set the environment variable `CA_CERTIFICATES` to a comma-separated list of certificate URLs.
#
# For example:
#
# ```
# export CA_CERTIFICATES=http://employeeshare.mitre.org/m/mjwalsh/transfer/MITRE%20BA%20ROOT.crt,http://employeeshare.mitre.org/m/mjwalsh/transfer/MITRE%20BA%20NPE%20CA-3%281%29.crt
# ```
#
# The root certificate *must* be denoted as the root certificate, like so:
#
# http://employeeshare.mitre.org/m/mjwalsh/transfer/MITRE%20BA%20ROOT.crt
#
if ENV['CA_CERTIFICATES']
  # Because @williambailey's vagrant-ca-certificates has an issue (https://github.com/williambailey/vagrant-ca-certificates/issues/34), I am using @Toilal's fork, vagrant-certificates
  if ARGV.include?('up') || ARGV.include?('provision')
    puts "INFO: CA Certificates set to #{ ENV['CA_CERTIFICATES'] }".green
  end

  config.certificates.enabled = true
  config.certificates.certs = ENV['CA_CERTIFICATES'].split(',')
else
  if ARGV.include?('up') || ARGV.include?('provision')
    puts "INFO: No CA_CERTIFICATES environment variable set.".green
  end

  config.certificates.certs = nil
  config.certificates.enabled = false
end
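The Vagrantfile turns CA_CERTIFICATES into a list with a bare split(','), so a space after a comma or a trailing comma would end up in the certificate list. A slightly more forgiving parser is sketched below; the method name is hypothetical and this is a variant, not what the Vagrantfile currently does.

```ruby
# Hypothetical, more tolerant parser for a CA_CERTIFICATES-style value:
# splits on commas, trims surrounding whitespace, and drops empty entries.
def parse_certificate_list(value)
  return [] if value.nil?
  value.split(',').map(&:strip).reject(&:empty?)
end

certs = parse_certificate_list('http://certs.example/root.crt, http://certs.example/ca.crt,')
puts certs.inspect
```

Note that it returns an empty array rather than nil when the variable is unset, so the caller would still decide separately whether to set config.certificates.enabled.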

9.5.2.3.6. Auto-generate the Ansible inventory file

The Vagrantfile doesn't use Vagrant's own Ansible Local provisioner; instead, it uses the Shell provisioner to install Ansible and then run it to configure the vagrants.

To do this, Ansible needs an inventory file, so the Vagrantfile dynamically creates one via the following code:

# Write hosts file for Ansible
require 'erb'

@worker_nodes = [*1..ConfigurationVars::VARS[:nodes]-1]

template = <<-TEXT
#
# Do not change this file by hand as it is dynamically generated via the Vagrantfile.
#
development ansible_connection=local
master ansible_connection=local
<% for @node in @worker_nodes %>node<%= @node %> ansible_connection=local
<% end %>
[boxes]
box

[masters]
master

[workers]
<% for @node in @worker_nodes %>node<%= @node %>
<% end %>
[developments]
development
TEXT

File.open(File.join(vagrantfilePath, 'hosts'), 'w') do |f|
  f.puts ERB.new(template).result(binding)
end

The result is a hosts file created in the root of the project when vagrant up is run on the command line.
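The inventory template relies on instance variables (@worker_nodes, @node) being visible to ERB. A variant that passes the worker numbers in explicitly, using ERB#result_with_hash (Ruby 2.5 and later) and the trim_mode: keyword (Ruby 2.6 and later) so the loop lines leave no stray blank lines, is sketched below. INVENTORY_TEMPLATE and render_inventory are hypothetical names; the group layout is copied from the template above.

```ruby
require 'erb'

# Hypothetical variant of the Vagrantfile's inventory template.
# With trim_mode '-', a tag ending in -%> swallows its trailing newline.
INVENTORY_TEMPLATE = <<~ERB
  #
  # Do not change this file by hand as it is dynamically generated via the Vagrantfile.
  #
  development ansible_connection=local
  master ansible_connection=local
  <% worker_nodes.each do |n| -%>
  node<%= n %> ansible_connection=local
  <% end -%>

  [boxes]
  box

  [masters]
  master

  [workers]
  <% worker_nodes.each do |n| -%>
  node<%= n %>
  <% end -%>

  [developments]
  development
ERB

# Workers are numbered 1 .. node_count - 1, matching [*1..VARS[:nodes]-1].
def render_inventory(node_count)
  worker_nodes = (1...node_count).to_a
  ERB.new(INVENTORY_TEMPLATE, trim_mode: '-').result_with_hash(worker_nodes: worker_nodes)
end

puts render_inventory(3)
```

In a Vagrantfile, the rendered string could then be written with something like File.write(File.join(vagrantfilePath, 'hosts'), render_inventory(ConfigurationVars::VARS[:nodes])); passing data explicitly avoids depending on which object ERB's default binding happens to see.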