chuckcho / Video Caffe


Video-Caffe: Caffe with C3D implementation and video reader

This is a 3D convolution (C3D) and video reader implementation in the latest Caffe (Dec 2016). The original Facebook C3D implementation was branched from Caffe on July 17, 2014 (git commit b80fc86) and has not been rebased onto upstream Caffe since, so it misses quite a few features added to Caffe more recently. I therefore ported the C3D concept and an accompanying video reader to the latest Caffe, and will try to rebase this repo onto upstream whenever an important new feature lands. This repo is rebased on 99bd997 (Aug 21, 2018). Please contact me with any feedback or questions.

Check out the original Caffe readme for Caffe-specific information.

Branches

The refactor branch is a recent rework based on the original Caffe and the Nd convolution and pooling with cuDNN PR. It is a cleaner, less hacky implementation of 3D convolution/pooling than the master branch and is expected to be more stable, so feel free to try it. One feature the refactor branch still lacks is the Python wrapper.

Requirements

In addition to the prerequisites for Caffe, video-caffe depends on cuDNN. It is known to work with cuDNN versions 4 and 5, but building with v3 may take some effort.

- If you use "make" to build, make sure Makefile.config points to the right paths for CUDA and cuDNN.

- If you use "cmake" to build, double-check that CUDNN_INCLUDE and CUDNN_LIBRARY are correct. If not, you may want something like cmake -DCUDNN_INCLUDE="/your/path/to/include" -DCUDNN_LIBRARY="/your/path/to/lib" ${video-caffe-root}.
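Since cuDNN versions 4 and 5 are the ones known to work, it can help to confirm which version the headers under CUDNN_INCLUDE actually belong to. The Python sketch below is not part of video-caffe; the helper names (cudnn_version, check_cudnn_include) are hypothetical, and it assumes the version is exposed as CUDNN_MAJOR, CUDNN_MINOR and CUDNN_PATCHLEVEL macros in cudnn.h, as in cuDNN releases of the v4/v5 era.

```python
import re
from pathlib import Path

# Assumption: cudnn.h defines the version as plain macros, e.g.
#   #define CUDNN_MAJOR 5
#   #define CUDNN_MINOR 1
#   #define CUDNN_PATCHLEVEL 10
# (much newer cuDNN releases move these into cudnn_version.h).
_VERSION_RE = re.compile(
    r"^\s*#define\s+CUDNN_(MAJOR|MINOR|PATCHLEVEL)\s+(\d+)", re.MULTILINE
)


def cudnn_version(header_text):
    """Return (major, minor, patch) parsed from cuDNN header text, or None."""
    fields = {name: int(value) for name, value in _VERSION_RE.findall(header_text)}
    if "MAJOR" not in fields:
        return None
    return (fields["MAJOR"], fields.get("MINOR", 0), fields.get("PATCHLEVEL", 0))


def check_cudnn_include(include_dir):
    """Read include_dir/cudnn.h and report whether its major version is 4 or 5."""
    header = Path(include_dir) / "cudnn.h"
    version = cudnn_version(header.read_text(errors="replace"))
    if version is None:
        print(f"{header}: no CUDNN_MAJOR macro found")
    elif version[0] not in (4, 5):
        print(f"{header}: cuDNN {version} is outside the versions known to work (4, 5)")
    else:
        print(f"{header}: cuDNN {version} looks OK")
    return version
```

For example, check_cudnn_include("/usr/local/cuda/include") would inspect a typical CUDA include directory; that path is only an illustration, so substitute whatever you pass as CUDNN_INCLUDE.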

Building video-caffe

Key steps to build video-caffe are:

- git clone git@github.com:chuckcho/video-caffe.git

- cd video-caffe

- mkdir build && cd build

- cmake ..

- Make sure CUDA and cuDNN are detected and their paths are correct.

- make all -j8

- make install

- (optional) make runtest
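To check whether the cmake step picked up cuDNN, one option is to inspect CMakeCache.txt in the build directory: cmake stores cache entries as NAME:TYPE=VALUE lines and records a failed find_path/find_library lookup as NAME-NOTFOUND. The sketch below assumes the relevant entries are named CUDNN_INCLUDE and CUDNN_LIBRARY (the names used with -D in the Requirements section); parse_cmake_cache and report_cudnn_paths are hypothetical helpers, not part of video-caffe.

```python
from pathlib import Path


def parse_cmake_cache(text):
    """Parse CMakeCache.txt text into {name: value} from NAME:TYPE=VALUE lines."""
    entries = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and the // and # comment lines cmake writes.
        if not line or line.startswith(("//", "#")):
            continue
        key_and_type, sep, value = line.partition("=")
        if not sep:
            continue
        name = key_and_type.split(":", 1)[0]
        entries[name] = value
    return entries


def report_cudnn_paths(build_dir="."):
    """Print cuDNN-related cache entries and flag missing or NOTFOUND ones."""
    cache = parse_cmake_cache((Path(build_dir) / "CMakeCache.txt").read_text())
    found = {k: v for k, v in cache.items() if "CUDNN" in k.upper()}
    for name in ("CUDNN_INCLUDE", "CUDNN_LIBRARY"):
        value = found.get(name, "")
        if not value or value.endswith("-NOTFOUND"):
            print(f"{name} is missing or NOTFOUND; rerun cmake with -D{name}=...")
    for name, value in sorted(found.items()):
        print(f"{name} = {value}")
    return found
```

Running report_cudnn_paths() from inside the build directory after cmake .. prints whatever cuDNN paths cmake cached.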

Usage

See ${video-caffe-root}/examples/c3d_ucf101/c3d_ucf101_train_test.prototxt for how 3D convolution and pooling are used. In a nutshell, use an NdConvolution or NdPooling layer whose {kernel,stride,pad}_shape specifies a 3D shape as (L x H x W), where L is the temporal length (usually 16).
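Along each of the L, H and W axes, the output size follows the usual sliding-window arithmetic, out = (in + 2 * pad - kernel) / stride + 1. The sketch below assumes NdConvolution and NdPooling follow upstream Caffe's rounding conventions (round down for convolution, round up for pooling) and ignores Caffe's extra adjustment for padded pooling windows; nd_output_shape is a hypothetical helper. It applies the conv1a and pool1 settings from the example prototxt to a 16-frame clip cropped to 112x112.

```python
import math


def nd_output_shape(in_shape, kernel, stride, pad, ceil_mode=False):
    """Output shape of an N-d convolution or pooling window, one axis at a time.

    Per axis: out = (in + 2 * pad - kernel) / stride + 1, rounded down
    (convolution, ceil_mode=False) or up (Caffe-style pooling, ceil_mode=True).
    """
    out = []
    for size, k, s, p in zip(in_shape, kernel, stride, pad):
        span = size + 2 * p - k
        steps = math.ceil(span / s) if ceil_mode else span // s
        out.append(steps + 1)
    return tuple(out)


# A 16-frame clip after the 112x112 crop, as (L, H, W).
clip = (16, 112, 112)
conv1a = nd_output_shape(clip, kernel=(3, 3, 3), stride=(1, 1, 1), pad=(1, 1, 1))
pool1 = nd_output_shape(conv1a, kernel=(1, 2, 2), stride=(1, 2, 2),
                        pad=(0, 0, 0), ceil_mode=True)
print(conv1a, pool1)  # (16, 112, 112) (16, 56, 56)
```

A temporal kernel/stride of 1 in pool1 keeps all 16 frames while halving H and W, which is why the first pooling layer uses (1 x 2 x 2) rather than (2 x 2 x 2).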

    ...

    # ----- video/label input -----
    layer {
      name: "data"
      type: "VideoData"
      top: "data"
      top: "label"
      video_data_param {
        source: "examples/c3d_ucf101/c3d_ucf101_train_split1.txt"
        batch_size: 50
        new_height: 128
        new_width: 171
        new_length: 16
        shuffle: true
      }
      include {
        phase: TRAIN
      }
      transform_param {
        crop_size: 112
        mirror: true
        mean_value: 90
        mean_value: 98
        mean_value: 102
      }
    }

    ...

    # ----- 1st group -----
    layer {
      name: "conv1a"
      type: "NdConvolution"
      bottom: "data"
      top: "conv1a"
      param {
        lr_mult: 1
        decay_mult: 1
      }
      param {
        lr_mult: 2
        decay_mult: 0
      }
      convolution_param {
        num_output: 64
        kernel_shape { dim: 3 dim: 3 dim: 3 }
        stride_shape { dim: 1 dim: 1 dim: 1 }
        pad_shape { dim: 1 dim: 1 dim: 1 }
        weight_filler {
          type: "gaussian"
          std: 0.01
        }
        bias_filler {
          type: "constant"
          value: 0
        }
      }
    }

    ...

    layer {
      name: "pool1"
      type: "NdPooling"
      bottom: "conv1a"
      top: "pool1"
      pooling_param {
        pool: MAX
        kernel_shape { dim: 1 dim: 2 dim: 2 }
        stride_shape { dim: 1 dim: 2 dim: 2 }
      }
    }

    ...
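The VideoData and transform_param settings describe a per-clip pipeline: frames resized to 128x171, 16 frames per clip, a 112x112 crop, random mirroring, and per-channel mean subtraction. The NumPy sketch below only illustrates that pipeline; it is not video-caffe's reader. It assumes the crop offset and mirror decision are shared by all frames of a clip, that the three mean_value entries apply per channel in BGR order as in Caffe's image pipeline, and that the network consumes clips as C x L x H x W. transform_clip is a hypothetical name.

```python
import numpy as np

# Settings copied from the VideoData layer and transform_param.
NEW_LENGTH, NEW_HEIGHT, NEW_WIDTH = 16, 128, 171
CROP_SIZE = 112
# Assumption: one mean per channel, in Caffe's usual BGR channel order.
MEAN_VALUES = np.array([90, 98, 102], dtype=np.float32)


def transform_clip(clip, rng, train=True, mirror=True):
    """Crop, optionally mirror, and mean-subtract one resized clip.

    clip: uint8 array shaped (L, H, W, C) = (16, 128, 171, 3).
    Returns a float32 array shaped (C, L, CROP_SIZE, CROP_SIZE).
    """
    if clip.shape != (NEW_LENGTH, NEW_HEIGHT, NEW_WIDTH, 3):
        raise ValueError(f"unexpected clip shape {clip.shape}")
    _, height, width, _ = clip.shape
    if train:
        # One random crop offset shared by every frame keeps the clip aligned over time.
        top = int(rng.integers(0, height - CROP_SIZE + 1))
        left = int(rng.integers(0, width - CROP_SIZE + 1))
    else:
        # Center crop for evaluation.
        top = (height - CROP_SIZE) // 2
        left = (width - CROP_SIZE) // 2
    out = clip[:, top:top + CROP_SIZE, left:left + CROP_SIZE, :].astype(np.float32)
    if mirror and rng.random() < 0.5:
        # Horizontal flip applied to all frames together.
        out = out[:, :, ::-1, :]
    out = out - MEAN_VALUES  # broadcasts over the trailing channel axis
    # Reorder to (C, L, H, W) for a 3D convolution input.
    return np.ascontiguousarray(out.transpose(3, 0, 1, 2))
```

Stacking 50 such clips along a new leading axis would give one training batch of shape (50, 3, 16, 112, 112) under these assumptions, which is why batch_size is the main knob for GPU memory.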

UCF-101 training demo

Scripts and training files for C3D training on UCF-101 are located in examples/c3d_ucf101/. Steps to train C3D on UCF-101:

- Download UCF-101 dataset from UCF-101 website.

- Unzip the dataset: e.g.

unrar x UCF101.rar - (Optional) video reader works more stably with extracted frames than directly with video files. Extract frames from UCF-101 videos by revising and running a helper script,

${video-caffe-root}/examples/c3d_ucf101/extract_UCF-101_frames.sh. - Change

${video-caffe-root}/examples/c3d_ucf101/c3d_ucf101_{train,test}_split1.txtto correctly point to UCF-101 videos or directories that contain extracted frames. - Modify

${video-caffe-root}/examples/c3d_ucf101/c3d_ucf101_train_test.prototxtto your taste or HW specification. Especiallybatch_sizemay need to be adjusted for the GPU memory. - Run training script: e.g.

cd ${video-caffe-root} && examples/c3d_ucf101/train_ucf101.sh(optionally use--gputo use multiple GPU's) - (Optional) Occasionally run

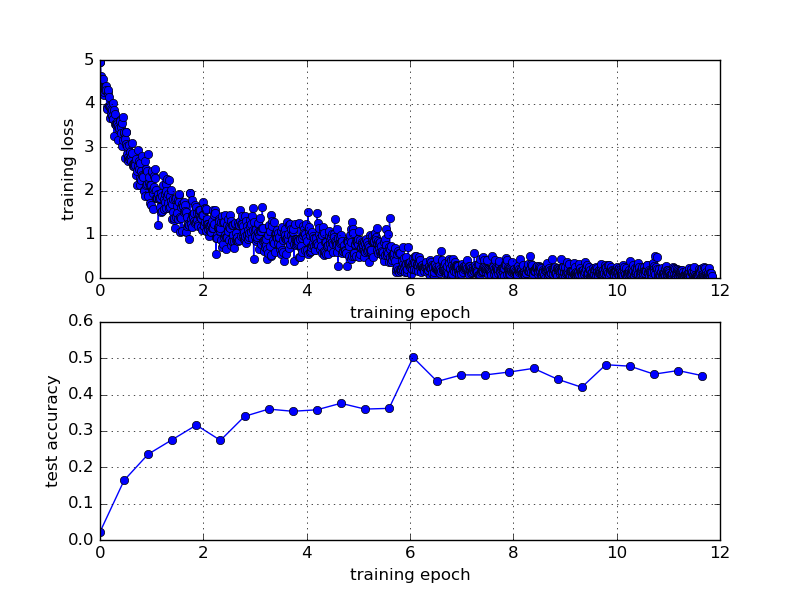

${video-caffe-root}/tools/extra/plot_training_loss.shto get training loss / validation accuracy (top1/5) plot. It's pretty hacky, so look at the file to meet your need. - At 7 epochs of training, clip accuracy should be around 45%.

A typical training will yield the following loss and top-1 accuracy:
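If you would rather extract the loss and accuracy numbers in Python than adapt plot_training_loss.sh, the sketch below parses a Caffe training log. It assumes the log line formats written by upstream Caffe's solver (quoted in the comments) and that training output was saved to a log file; parse_caffe_log is a hypothetical helper, not part of video-caffe.

```python
import re

# Assumed upstream Caffe solver log formats, e.g.
#   ... Iteration 200 (2.1 iter/s, 95.2s/200 iters), loss = 4.1
#   ... Iteration 200, loss = 4.1                      (older builds)
#   ... Iteration 500, Testing net (#0)
#   ...     Test net output #0: accuracy = 0.31
LOSS_RE = re.compile(r"Iteration (\d+)(?: \([^)]*\))?, loss = (\S+)")
TEST_ITER_RE = re.compile(r"Iteration (\d+), Testing net")
TEST_OUT_RE = re.compile(r"Test net output #\d+: (\S+) = (\S+)")


def parse_caffe_log(lines):
    """Return ([(iter, loss)], {output_name: [(iter, value)]}) from log lines."""
    train_loss = []
    test_outputs = {}
    test_iter = None  # iteration of the most recent "Testing net" block
    for line in lines:
        if m := LOSS_RE.search(line):
            train_loss.append((int(m.group(1)), float(m.group(2))))
        elif m := TEST_ITER_RE.search(line):
            test_iter = int(m.group(1))
        elif (m := TEST_OUT_RE.search(line)) and test_iter is not None:
            test_outputs.setdefault(m.group(1), []).append(
                (test_iter, float(m.group(2)))
            )
    return train_loss, test_outputs
```

For example, with open("train.log") as f: loss, tests = parse_caffe_log(f) returns series that any plotting library can draw; "train.log" is a placeholder for wherever your training output was redirected, and the test-output names (such as accuracy) depend on the layer names in your prototxt.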

Pre-trained model

A pre-trained model is available (downloadable link) for UCF101 (trained from scratch), achieving top-1 accuracy of ~47%.

To-do

- Feature extractor script.

- Python demo script that loads a video and classifies it.

- Convert the Sports-1M pre-trained model and make it available.

License and Citation

Caffe is released under the BSD 2-Clause license.