Google Search Console Index Coverage Extractor

This script allows users of Google Search Console (GSC) to extract all the different reports from both the Index Coverage and the Sitemap Coverage report sections of the platform. I wrote a blog post about why I built this script.

Reports that the script extracts

Error

- Server error (5xx)

- Redirect error

- Submitted URL blocked by robots.txt

- Submitted URL marked ‘noindex’

- Submitted URL seems to be a Soft 404

- Submitted URL has crawl issue

- Submitted URL not found (404)

- Submitted URL returned 403

- Submitted URL returns unauthorized request (401)

- Submitted URL blocked due to other 4xx issue

Warning

- Indexed, though blocked by robots.txt

- Page indexed without content

Valid

- Submitted and indexed

- Indexed, not submitted in sitemap

Excluded

- Excluded by ‘noindex’ tag

- Blocked by page removal tool

- Blocked by robots.txt

- Blocked due to unauthorized request (401)

- Crawled - currently not indexed

- Discovered - currently not indexed

- Alternate page with proper canonical tag

- Duplicate without user-selected canonical

- Duplicate, Google chose different canonical than user

- Not found (404)

- Page with redirect

- Soft 404

- Duplicate, submitted URL not selected as canonical

- Blocked due to access forbidden (403)

- Blocked due to other 4xx issue

Installing and running the script

The script uses the ECMAScript modules import/export syntax, so double check that your Node.js version is above 14 before running it.
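If you would rather have the check fail loudly from inside Node, a guard along these lines could be used. This is only a sketch and not part of the script: `MIN_MAJOR`, `nodeMajorVersion` and `isSupported` are hypothetical names, and treating version 14 itself as acceptable is an assumption.

```javascript
// Hypothetical guard, not part of the extractor itself.
// Assumption: major version 14 is treated as the minimum supported release.
const MIN_MAJOR = 14;

// process.versions.node looks like "18.17.1"; keep only the leading number.
function nodeMajorVersion(version = process.versions.node) {
  return Number.parseInt(version.split(".")[0], 10);
}

function isSupported(version = process.versions.node) {
  return nodeMajorVersion(version) >= MIN_MAJOR;
}

if (!isSupported()) {
  console.error(
    `Node ${process.versions.node} detected, but version ${MIN_MAJOR} or newer is expected.`
  );
  // Signal failure without killing the process abruptly.
  process.exitCode = 1;
}
```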

# Check Node version

node -v

After downloading/cloning the repo, install the necessary modules to run the script.

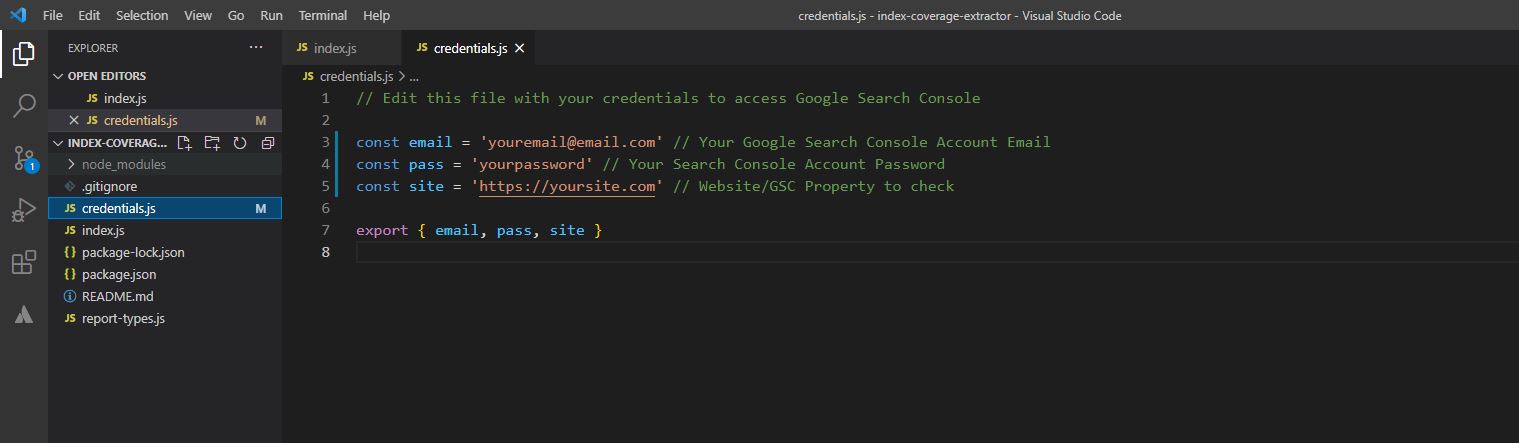

npm install

In order to extract the coverage data from your website/property, update the credential.js file with your Search Console credentials.

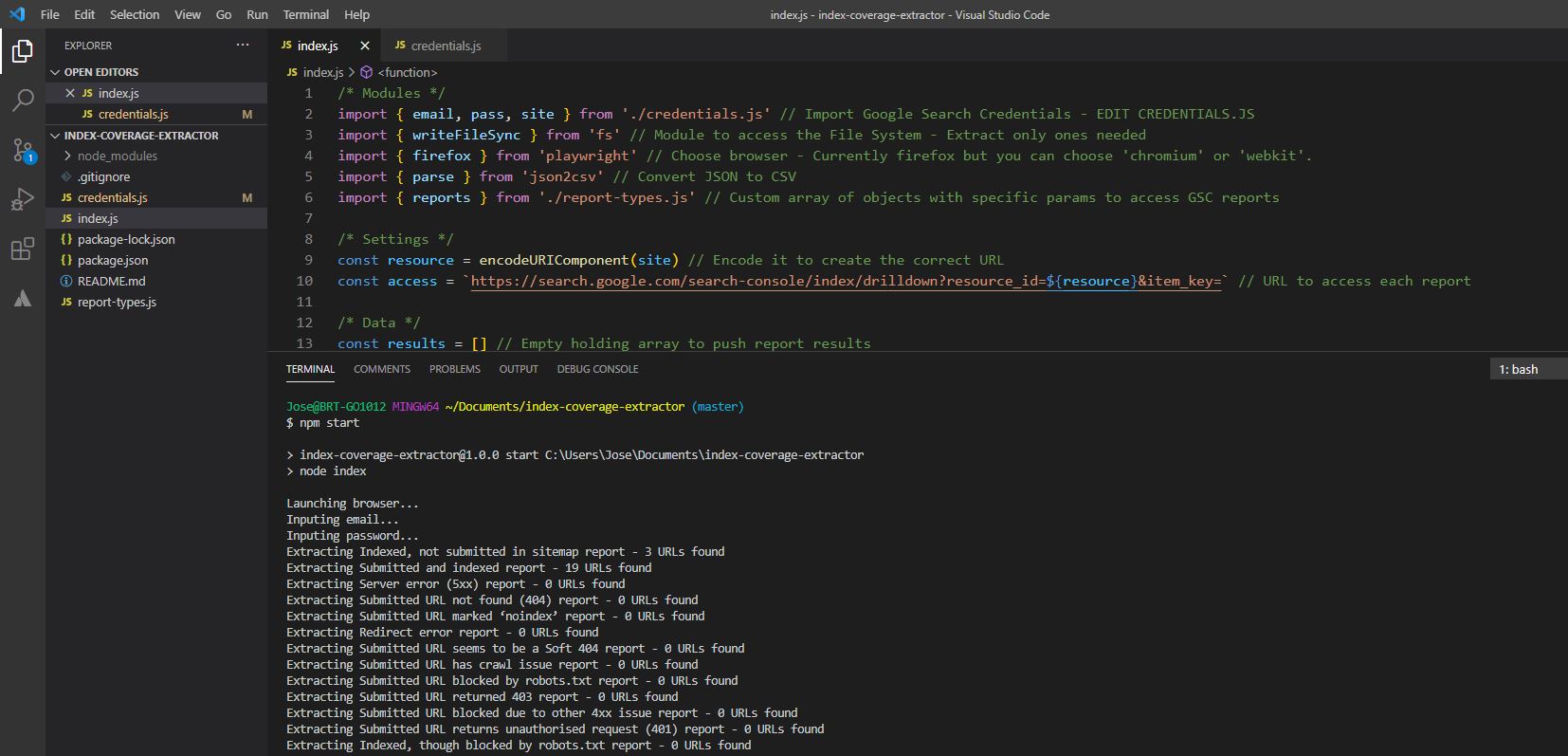

After that, you can run the script with the npm start command from your terminal.

npm start

You will see the processing messages in your terminal while the script runs.

Settings

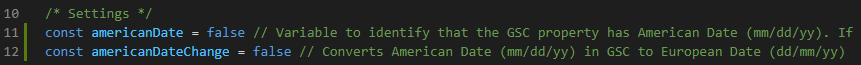

The script extracts the "Latest updated" dates that GSC provides. Depending on the property, these dates can appear in one of two formats: American (mm/dd/yyyy) or European (dd/mm/yyyy). There is therefore an option to set which date format the script should output.

The default setting assumes your property shows the dates in European date format (dd/mm/yyyy). If your GSC property shows the dates in American date format, set americanDate = true. If your property is in American date format but you'd like the output in European date format, also set americanDateChange = true.
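To make the effect of the two settings concrete, here is a minimal sketch of the kind of conversion they describe. Only the setting names `americanDate` and `americanDateChange` come from this README. The helpers `americanToEuropean` and `formatDate` are hypothetical, and the script's real implementation may differ.

```javascript
// Hypothetical helper: turns mm/dd/yyyy into dd/mm/yyyy.
function americanToEuropean(date) {
  const match = /^(\d{1,2})\/(\d{1,2})\/(\d{4})$/.exec(date.trim());
  // Leave anything that does not look like a date untouched.
  if (!match) return date;
  const [, month, day, year] = match;
  return `${day.padStart(2, "0")}/${month.padStart(2, "0")}/${year}`;
}

// Assumed behaviour: convert only when the property shows American dates
// and the user asked for the change.
function formatDate(date, { americanDate = false, americanDateChange = false } = {}) {
  return americanDate && americanDateChange ? americanToEuropean(date) : date;
}

console.log(formatDate("3/27/2021", { americanDate: true, americanDateChange: true }));
// prints "27/03/2021"
console.log(formatDate("3/27/2021", { americanDate: true }));
// prints "3/27/2021"
```

In this sketch, a value that does not match the expected pattern is returned as-is rather than raising an error, so an unexpected cell in the report would not stop the extraction.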

Output

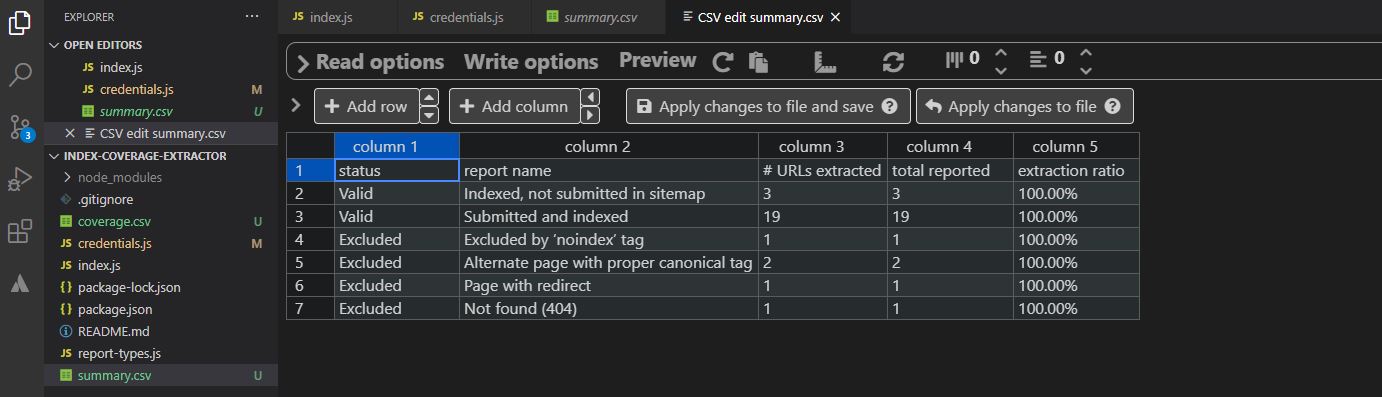

The script will create a "results.xlsx" file along with four CSV files: "coverage.csv", "summary.csv", "sitemap.csv" and "sum-sitemap.csv".

The "results.xlsx" file will contain 4 tabs:

- A summary of the index coverage extraction.

- The individual URLs extracted from the Coverage section.

- A summary of the index coverage extraction from the Sitemap section.

- The individual URLs extracted from the Sitemap section.

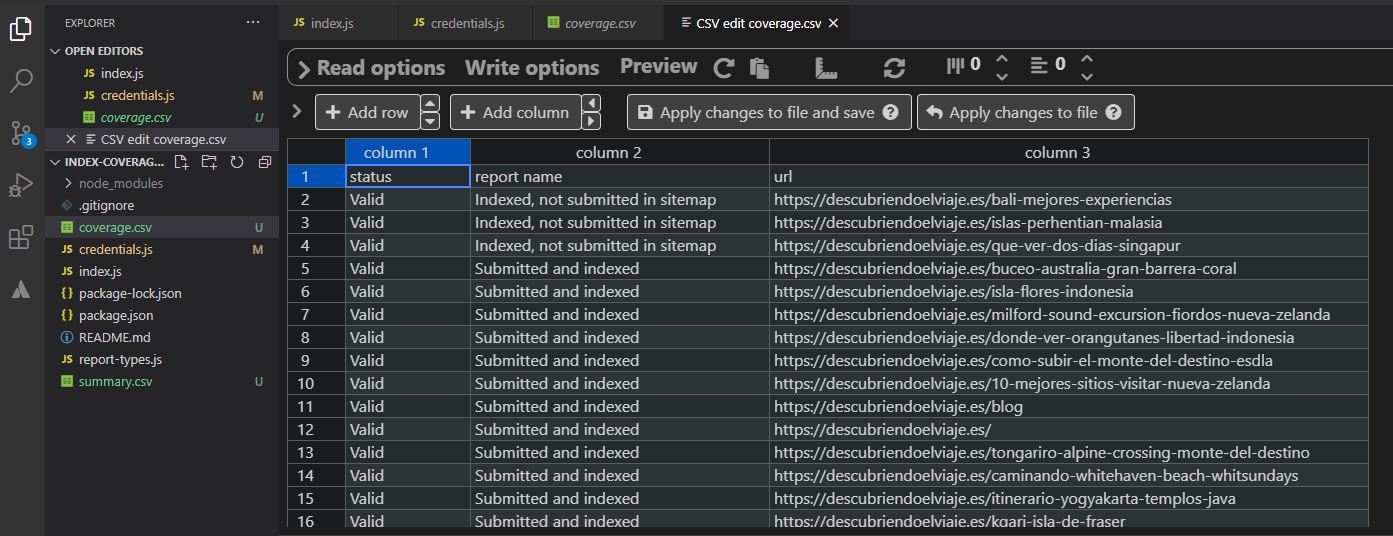

The "coverage.csv" will contain all the URLs that have been extracted from each individual coverage report.

The "summary.csv" will contain the number of URLs extracted per report, the total number that GSC reports in the user interface (either the same or higher) and an "extraction ratio", which is the number of URLs extracted divided by the total number of URLs reported by GSC.
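As an illustration of the extraction ratio described for "summary.csv", the calculation can be sketched as follows. The function name `extractionRatio`, the row shape and the sample numbers are all made up for the example and are not taken from the script or from real GSC data.

```javascript
// Hypothetical helper: share of the URLs reported by GSC that were extracted.
function extractionRatio(extracted, reported) {
  // Avoid dividing by zero when a report is empty.
  if (reported === 0) return null;
  return extracted / reported;
}

// Illustrative rows only; the numbers are invented for the example.
const rows = [
  { report: "Not found (404)", extracted: 1000, reported: 2500 },
  { report: "Soft 404", extracted: 40, reported: 40 },
];

for (const row of rows) {
  console.log(row.report, extractionRatio(row.extracted, row.reported));
}
// prints "Not found (404) 0.4"
// prints "Soft 404 1"
```

A ratio of 1 means every URL reported for that category was extracted, while a lower value means GSC reported more URLs than the script could extract.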

The "sitemap.csv" will contain all the URLs that have been extracted from each individual sitemap coverage report.

The "sum-sitemap.csv" will contain a summary of the top-level coverage numbers per sitemap reported by GSC.