SKTBrain / KoBERT

Licence: apache-2.0

Korean BERT pre-trained cased (KoBERT)

Stars: ✭ 591

Why?

- Google's BERT base multilingual cased model has limited performance on Korean

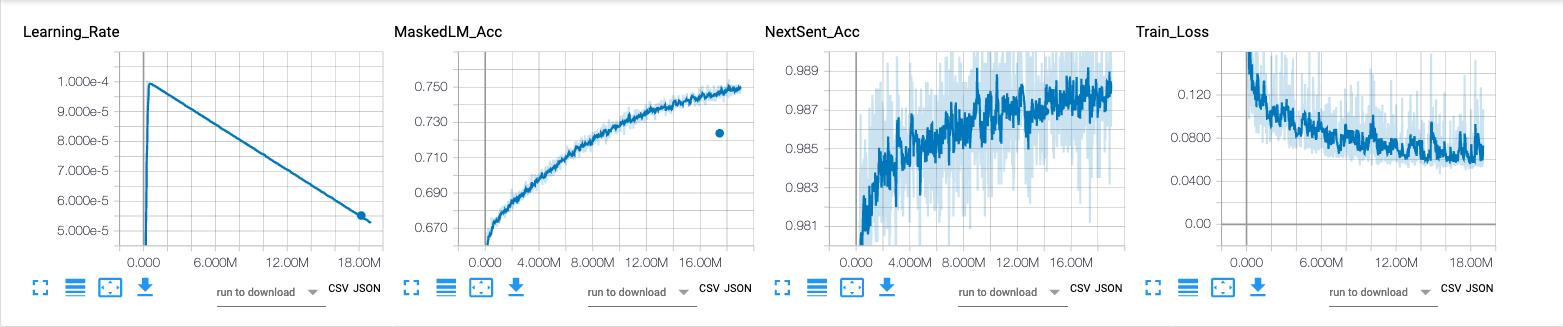

Training Environment

- Architecture

predefined_args = {

'attention_cell': 'multi_head',

'num_layers': 12,

'units': 768,

'hidden_size': 3072,

'max_length': 512,

'num_heads': 12,

'scaled': True,

'dropout': 0.1,

'use_residual': True,

'embed_size': 768,

'embed_dropout': 0.1,

'token_type_vocab_size': 2,

'word_embed': None,

}

- Training set

| Data | Sentences | Words |
|---|---|---|
| Korean Wikipedia | 5M | 54M |

- Training environment

  - V100 GPU x 32, Horovod (with InfiniBand)

- Vocabulary

  - Size: 8,002

  - Tokenizer (SentencePiece) trained on Korean Wikipedia

  - Fewer parameters (92M < 110M)
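The 92M figure can be roughly reproduced from the architecture values and the vocabulary size listed above. The sketch below is only an estimate: it assumes a standard BERT encoder layout (a bias on every dense layer, two LayerNorms per encoder block, one LayerNorm after the embeddings, and a pooler), which the README does not spell out, and the function name `count_bert_params` is hypothetical.

```python
# Sketch: estimate the parameter count from the listed configuration.
# ASSUMPTION: standard BERT encoder layout; not taken from the KoBERT source.

def count_bert_params(vocab_size, units, hidden_size, num_layers,
                      max_length, token_type_vocab_size):
    layer_norm = 2 * units  # gamma and beta
    # Word, position and token-type embeddings, plus the embedding LayerNorm.
    embeddings = (vocab_size * units + max_length * units
                  + token_type_vocab_size * units + layer_norm)
    # Query, key, value and output projections, each with a bias.
    attention = 4 * (units * units + units)
    # Two dense layers of the position-wise feed-forward network.
    feed_forward = (units * hidden_size + hidden_size) + (hidden_size * units + units)
    encoder_layer = attention + feed_forward + 2 * layer_norm
    # Dense layer applied to the first token's output.
    pooler = units * units + units
    return embeddings + num_layers * encoder_layer + pooler


total = count_bert_params(vocab_size=8002, units=768, hidden_size=3072,
                          num_layers=12, max_length=512, token_type_vocab_size=2)
print(f"{total / 1e6:.1f}M parameters")
```

Under these assumptions the word-embedding table accounts for about 6.1M parameters (8,002 x 768), so the small vocabulary is where most of the saving relative to a larger-vocabulary BERT base comes from.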

Requirements

- Python >= 3.6

- PyTorch >= 1.7.0

- MXNet >= 1.4.0

- gluonnlp >= 0.6.0

- sentencepiece >= 0.1.6

- onnxruntime >= 0.3.0

- transformers >= 3.5.0

How to install

git clone https://github.com/SKTBrain/KoBERT.git

cd KoBERT

pip install -r requirements.txt

pip install .

How to use

Using with PyTorch

>>> import torch

>>> from kobert.pytorch_kobert import get_pytorch_kobert_model

>>> input_ids = torch.LongTensor([[31, 51, 99], [15, 5, 0]])

>>> input_mask = torch.LongTensor([[1, 1, 1], [1, 1, 0]])

>>> token_type_ids = torch.LongTensor([[0, 0, 1], [0, 1, 0]])

>>> model, vocab = get_pytorch_kobert_model()

>>> sequence_output, pooled_output = model(input_ids, input_mask, token_type_ids)

>>> pooled_output.shape

torch.Size([2, 768])

>>> vocab

Vocab(size=8002, unk="[UNK]", reserved="['[MASK]', '[SEP]', '[CLS]']")

>>> # Last Encoding Layer

>>> sequence_output[0]

tensor([[-0.2461, 0.2428, 0.2590, ..., -0.4861, -0.0731, 0.0756],

[-0.2478, 0.2420, 0.2552, ..., -0.4877, -0.0727, 0.0754],

[-0.2472, 0.2420, 0.2561, ..., -0.4874, -0.0733, 0.0765]],

grad_fn=<SelectBackward>)

The model is returned in eval() mode by default, so call model.train() to switch it to training mode before using it for training.

- Naver Sentiment Analysis Fine-Tuning with PyTorch
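The `input_ids`, `input_mask`, and `token_type_ids` values in the PyTorch example above follow a common pattern: shorter sequences are padded to the batch length and the mask marks real tokens with 1 and padding with 0. A minimal sketch of that preparation step in plain Python follows. The helper name `build_inputs` is hypothetical, and `pad_id=0` is an assumption copied from the example values; in real use the padding id should be looked up in the vocabulary.

```python
# Sketch: pad variable-length id sequences and derive the attention mask.
# ASSUMPTION: pad_id=0 mirrors the example values; check the real vocab.

def build_inputs(token_id_seqs, segment_id_seqs, pad_id=0):
    max_len = max(len(seq) for seq in token_id_seqs)
    input_ids, input_mask, token_type_ids = [], [], []
    for ids, segments in zip(token_id_seqs, segment_id_seqs):
        pad = max_len - len(ids)
        input_ids.append(list(ids) + [pad_id] * pad)
        # 1 for real tokens, 0 for padding positions.
        input_mask.append([1] * len(ids) + [0] * pad)
        # Padding positions are assigned segment 0.
        token_type_ids.append(list(segments) + [0] * pad)
    return input_ids, input_mask, token_type_ids


ids, mask, types = build_inputs([[31, 51, 99], [15, 5]],
                                [[0, 0, 1], [0, 1]])
print(ids)    # [[31, 51, 99], [15, 5, 0]]
print(mask)   # [[1, 1, 1], [1, 1, 0]]
print(types)  # [[0, 0, 1], [0, 1, 0]]
```

The resulting nested lists can be wrapped in `torch.LongTensor(...)` exactly as in the example session.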

Using with ONNX

>>> import onnxruntime

>>> import numpy as np

>>> from kobert.utils import get_onnx

>>> onnx_path = get_onnx()

>>> sess = onnxruntime.InferenceSession(onnx_path)

>>> input_ids = [[31, 51, 99], [15, 5, 0]]

>>> input_mask = [[1, 1, 1], [1, 1, 0]]

>>> token_type_ids = [[0, 0, 1], [0, 1, 0]]

>>> len_seq = len(input_ids[0])

>>> pred_onnx = sess.run(None, {'input_ids': np.array(input_ids),
...                             'token_type_ids': np.array(token_type_ids),
...                             'input_mask': np.array(input_mask),
...                             'position_ids': np.array(range(len_seq))})

>>> # Last Encoding Layer

>>> pred_onnx[-2][0]

array([[-0.24610452, 0.24282141, 0.25895312, ..., -0.48613444,

-0.07305173, 0.07560554],

[-0.24783179, 0.24200465, 0.25520486, ..., -0.4877185 ,

-0.0727044 , 0.07536091],

[-0.24721591, 0.24196623, 0.2560626 , ..., -0.48743123,

-0.07326943, 0.07650235]], dtype=float32)

soeque1 helped with the ONNX conversion.

Using with MXNet-Gluon

>>> import mxnet as mx

>>> from kobert.mxnet_kobert import get_mxnet_kobert_model

>>> input_id = mx.nd.array([[31, 51, 99], [15, 5, 0]])

>>> input_mask = mx.nd.array([[1, 1, 1], [1, 1, 0]])

>>> token_type_ids = mx.nd.array([[0, 0, 1], [0, 1, 0]])

>>> model, vocab = get_mxnet_kobert_model(use_decoder=False, use_classifier=False)

>>> encoder_layer, pooled_output = model(input_id, token_type_ids)

>>> pooled_output.shape

(2, 768)

>>> vocab

Vocab(size=8002, unk="[UNK]", reserved="['[MASK]', '[SEP]', '[CLS]']")

>>> # Last Encoding Layer

>>> encoder_layer[0]

[[-0.24610372 0.24282135 0.2589539 ... -0.48613444 -0.07305248

0.07560539]

[-0.24783105 0.242005 0.25520545 ... -0.48771808 -0.07270523

0.07536077]

[-0.24721491 0.241966 0.25606337 ... -0.48743105 -0.07327032

0.07650219]]

<NDArray 3x768 @cpu(0)>

Tokenizer

- Pretrained Sentencepiece tokenizer

>>> from gluonnlp.data import SentencepieceTokenizer

>>> from kobert.utils import get_tokenizer

>>> tok_path = get_tokenizer()

>>> sp = SentencepieceTokenizer(tok_path)

>>> sp('한국어 모델을 공유합니다.')

['▁한국', '어', '▁모델', '을', '▁공유', '합니다', '.']
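In the output above, the `▁` character marks a piece that starts a new word, which is how SentencePiece encodes whitespace. A minimal sketch of turning the pieces back into text, in plain Python, is shown below. The helper name `detokenize` is hypothetical; it is a simplified stand-in, not the tokenizer's own decoding method.

```python
# Sketch: rebuild text from SentencePiece pieces.
# "▁" (U+2581) marks the start of a word and stands in for a space.

def detokenize(pieces):
    return "".join(pieces).replace("\u2581", " ").strip()


pieces = ['▁한국', '어', '▁모델', '을', '▁공유', '합니다', '.']
print(detokenize(pieces))  # 한국어 모델을 공유합니다.
```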

Subtasks

Naver Sentiment Analysis

- Dataset : https://github.com/e9t/nsmc

| Model | Accuracy |

|---|---|

| BERT base multilingual cased | 0.875 |

| KoBERT | 0.901 |

| KoGPT2 | 0.899 |

Korean named entity recognizer built with KoBERT and CRF

문장을 입력하세요: SKTBrain에서 KoBERT 모델을 공개해준 덕분에 BERT-CRF 기반 객체명인식기를 쉽게 개발할 수 있었다.

len: 40, input_token:['[CLS]', '▁SK', 'T', 'B', 'ra', 'in', '에서', '▁K', 'o', 'B', 'ER', 'T', '▁모델', '을', '▁공개', '해', '준', '▁덕분에', '▁B', 'ER', 'T', '-', 'C', 'R', 'F', '▁기반', '▁', '객', '체', '명', '인', '식', '기를', '▁쉽게', '▁개발', '할', '▁수', '▁있었다', '.', '[SEP]']

len: 40, pred_ner_tag:['[CLS]', 'B-ORG', 'I-ORG', 'I-ORG', 'I-ORG', 'I-ORG', 'O', 'B-POH', 'I-POH', 'I-POH', 'I-POH', 'I-POH', 'O', 'O', 'O', 'O', 'O', 'O', 'B-POH', 'I-POH', 'I-POH', 'I-POH', 'I-POH', 'I-POH', 'I-POH', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', 'O', '[SEP]']

decoding_ner_sentence: [CLS] <SKTBrain:ORG>에서 <KoBERT:POH> 모델을 공개해준 덕분에 <BERT-CRF:POH> 기반 객체명인식기를 쉽게 개발할 수 있었다.[SEP]
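The step from `pred_ner_tag` to the decoded sentence groups subword tokens by their BIO tags: a `B-` tag opens an entity, following `I-` tags with the same label extend it, and anything else closes it. A minimal sketch of that grouping is given below. The function name `extract_entities` is hypothetical, and this is an illustration of the general BIO decoding idea, not the decoder used by the project shown above.

```python
# Sketch: merge subword tokens into entities using BIO tags.
# ASSUMPTION: illustrative only; not the project's actual decoder.

def extract_entities(tokens, tags):
    entities = []
    pieces, label = [], None

    def flush():
        nonlocal pieces, label
        if pieces:
            # Join subwords and turn the SentencePiece marker into a space.
            text = "".join(pieces).replace("\u2581", " ").strip()
            entities.append((text, label))
        pieces, label = [], None

    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            flush()                      # close any open entity
            pieces, label = [token], tag[2:]
        elif tag.startswith("I-") and label == tag[2:]:
            pieces.append(token)         # continue the open entity
        else:
            flush()                      # 'O', '[CLS]', '[SEP]' or a stray I- tag
    flush()
    return entities


tokens = ['[CLS]', '▁SK', 'T', 'B', 'ra', 'in', '에서',
          '▁K', 'o', 'B', 'ER', 'T', '▁모델', '을']
tags = ['[CLS]', 'B-ORG', 'I-ORG', 'I-ORG', 'I-ORG', 'I-ORG', 'O',
        'B-POH', 'I-POH', 'I-POH', 'I-POH', 'I-POH', 'O', 'O']
print(extract_entities(tokens, tags))
# [('SKTBrain', 'ORG'), ('KoBERT', 'POH')]
```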

Version History

- v.0.1: Initial model release

- v.0.1.1: Vocabulary and tokenizer integrated

Contacts

Please file KoBERT-related issues here.

License

KoBERT is released under the Apache-2.0 license. Please comply with the license terms when using the model and code. The full license text is available in the LICENSE file.
