
LiteFlowNet3

This repository (https://github.com/twhui/LiteFlowNet3) provides the official release of the code package for my paper "LiteFlowNet3: Resolving Correspondence Ambiguity for More Accurate Optical Flow Estimation", published in ECCV 2020. The pre-print is available on ECVA or arXiv (July 2020). Supplementary material is released on ECVA. A short summary video is also available on YouTube.

Overview

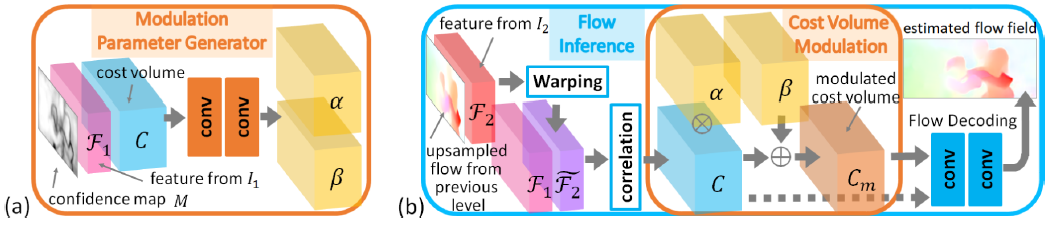

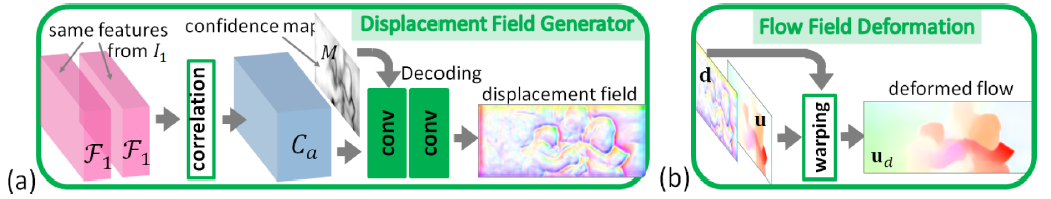

LiteFlowNet3 is built upon our previous work LiteFlowNet2 (TPAMI 2020) and incorporates cost volume modulation (CM) and flow field deformation (FD) to further improve the flow accuracy. For ease of presentation, only a 2-level encoder-decoder structure is shown. The proposed modules are not limited to level 1 and are applicable to other levels as well.


Contributions

(1) Cost volume modulation (CM): Given a pair of images, partial occlusion and homogeneous regions make establishing correspondence very challenging. The same problem occurs in feature space, because simply transforming images into feature maps does not resolve the correspondence ambiguity. As a result, the cost volume is corrupted and the subsequent flow decoding is seriously affected. To address this problem, we propose to filter outliers in a cost volume by using an adaptive modulation before performing the flow decoding. In addition, a confidence map is introduced to facilitate generating the modulation parameters.

(2) Flow field deformation (FD): In coarse-to-fine flow estimation, a flow estimate from the previous level is used as the flow initialization for the next level. This requires the previous estimate to be highly accurate; otherwise, erroneous optical flow is propagated to the subsequent levels. Due to local flow consistency, neighboring image points that have similar feature vectors have similar optical flow. With this motivation, we propose to refine a given flow field by replacing each inaccurate optical flow with an accurate one from a nearby position. The refinement can be easily achieved by meta-warping of the flow field according to a displacement field. An auto-correlation cost volume of the feature map is used to store the similarity scores of neighboring image points. To avoid a trivial solution, a confidence map associated with the given flow field is used to guide the displacement decoding from the cost volume.
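The two operations described above can be illustrated with a toy sketch on nested Python lists. This is not the released Caffe implementation. It assumes that the modulation takes an element-wise affine form (a scale and a shift per cost entry) and that meta-warping samples the flow at the displaced position with nearest-neighbour lookup and border clamping. The function names `modulate_cost_volume` and `deform_flow_field` are hypothetical, and in the network the scale, shift, and displacement would be predicted rather than supplied by hand.

```python
def modulate_cost_volume(cost, scale, shift):
    """Assumed affine modulation: out[y][x][d] = scale[y][x][d] * cost[y][x][d] + shift[y][x][d]."""
    return [
        [
            [a * c + b for c, a, b in zip(c_px, a_px, b_px)]
            for c_px, a_px, b_px in zip(c_row, a_row, b_row)
        ]
        for c_row, a_row, b_row in zip(cost, scale, shift)
    ]


def deform_flow_field(flow, displacement):
    """Replace the flow at (x, y) with the flow found at (x + dx, y + dy).

    Nearest-neighbour sampling with border clamping is a simplification
    chosen for this sketch.
    """
    height, width = len(flow), len(flow[0])
    deformed = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = displacement[y][x]
            src_x = min(max(int(round(x + dx)), 0), width - 1)
            src_y = min(max(int(round(y + dy)), 0), height - 1)
            row.append(flow[src_y][src_x])
        deformed.append(row)
    return deformed


# One pixel with three matching costs; a zero scale suppresses the outlier entry.
cost = [[[0.2, 0.9, 0.1]]]
scale = [[[1.0, 0.0, 1.0]]]
shift = [[[0.0, 0.0, 0.0]]]
print(modulate_cost_volume(cost, scale, shift))  # [[[0.2, 0.0, 0.1]]]

# A 1 x 3 flow field whose middle vector is wrong; it borrows its right neighbour's flow.
flow = [[(1.0, 0.0), (9.0, 9.0), (1.0, 0.0)]]
displacement = [[(0, 0), (1, 0), (0, 0)]]
print(deform_flow_field(flow, displacement))  # [[(1.0, 0.0), (1.0, 0.0), (1.0, 0.0)]]
```

In the second example the displacement field is non-zero only where the flow is unreliable, which is the role the confidence map plays in guiding the displacement decoding.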

Performance

| Method | Sintel Clean Testing Set | Sintel Final Testing Set | KITTI12 Testing Set (Avg-All) | KITTI15 Testing Set (Fl-fg) | Model Size (M) | Runtime* (ms) GTX 1080 |
|---|---|---|---|---|---|---|
| LiteFlowNet (CVPR18) | 4.54 | 5.38 | 1.6 | 7.99% | 5.4 | 88 |
| LiteFlowNet2 (TPAMI20) | 3.48 | 4.69 | 1.4 | 7.64% | 6.4 | 40 |
| HD3 (CVPR19) | 4.79 | 4.67 | 1.4 | 9.02% | 39.9 | 128 |
| IRR-PWC (CVPR19) | 3.84 | 4.58 | 1.6 | 7.52% | 6.4 | 180 |
| LiteFlowNet3 (ECCV20) | 3.03 | 4.53 | 1.3 | 6.96% | 5.2 | 59 |

Note: *Runtime is averaged over 100 runs for a Sintel image pair of size 1024 × 436.
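For readers unfamiliar with the accuracy columns, the following is a minimal sketch of the two metrics, assuming the table follows the usual benchmark conventions: average end-point error in pixels for Sintel and KITTI12, and the KITTI 2015 outlier percentage (Fl) for KITTI15. The function names are hypothetical, flow fields are simplified to flat lists of (u, v) tuples, and the official benchmark servers remain the reference for the reported numbers.

```python
import math


def endpoint_errors(flow_est, flow_gt):
    """Per-pixel Euclidean distance between estimated and ground-truth flow vectors."""
    return [math.hypot(u - ug, v - vg) for (u, v), (ug, vg) in zip(flow_est, flow_gt)]


def average_endpoint_error(flow_est, flow_gt):
    """Mean end-point error over all supplied pixels."""
    errors = endpoint_errors(flow_est, flow_gt)
    return sum(errors) / len(errors)


def outlier_percentage(flow_est, flow_gt, abs_thresh=3.0, rel_thresh=0.05):
    """KITTI 2015-style Fl: a pixel is an outlier unless its error is below
    3 px or below 5% of the ground-truth flow magnitude."""
    errors = endpoint_errors(flow_est, flow_gt)
    outliers = sum(
        1
        for err, (ug, vg) in zip(errors, flow_gt)
        if err >= abs_thresh and err >= rel_thresh * math.hypot(ug, vg)
    )
    return 100.0 * outliers / len(errors)


estimate = [(3.0, 4.0), (0.0, 0.0)]
ground_truth = [(0.0, 0.0), (0.0, 0.0)]
print(average_endpoint_error(estimate, ground_truth))  # 2.5
print(outlier_percentage(estimate, ground_truth))      # 50.0
```

For the Fl-fg column, only foreground pixels would be passed to `outlier_percentage`; selecting them requires the benchmark's object masks, which this sketch does not cover.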

License and Citation

This software and associated documentation files (the "Software"), and the research paper (LiteFlowNet3: Resolving Correspondence Ambiguity for More Accurate Optical Flow Estimation) including but not limited to the figures and tables (the "Paper") are provided for academic research purposes only and without any warranty. Any commercial use requires my consent. When using any part of the Software or the Paper in your work, please cite the following papers:

@InProceedings{hui20liteflownet3,
  author = {Tak-Wai Hui and Chen Change Loy},
  title = {{LiteFlowNet3: Resolving Correspondence Ambiguity for More Accurate Optical Flow Estimation}},
  booktitle = {{Proceedings of the European Conference on Computer Vision (ECCV)}},
  year = {2020},
  pages = {169--184},
}

@Article{hui20liteflownet2,
  author = {Tak-Wai Hui and Xiaoou Tang and Chen Change Loy},
  title = {{A Lightweight Optical Flow CNN - Revisiting Data Fidelity and Regularization}},
  journal = {{IEEE Transactions on Pattern Analysis and Machine Intelligence}},
  year = {2020},
  url = {http://mmlab.ie.cuhk.edu.hk/projects/LiteFlowNet/}
}

@InProceedings{hui18liteflownet,
  author = {Tak-Wai Hui and Xiaoou Tang and Chen Change Loy},
  title = {{LiteFlowNet: A Lightweight Convolutional Neural Network for Optical Flow Estimation}},
  booktitle = {{Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR)}},
  year = {2018},
  pages = {8981--8989},
  url = {http://mmlab.ie.cuhk.edu.hk/projects/LiteFlowNet/}
}

Prerequisite and Compiling

LiteFlowNet3 uses the same Caffe package as LiteFlowNet. Please refer to the LiteFlowNet GitHub repository for details.

Training

Please refer to the training steps in the LiteFlowNet GitHub repository and adopt the training protocols described in the LiteFlowNet3 paper.

Trained models

Download the models (LiteFlowNet3-ft-sintel, LiteFlowNet3-ft-kitti, LiteFlowNet3-S-ft-sintel, LiteFlowNet3-S-ft-kitti) and then place the models in the folder models/trained.

Testing
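The testing script described in this section takes two text files, img1_pathList.txt and img2_pathList.txt. The following is a minimal sketch for generating them from an ordered frame sequence. It assumes that each file holds one image path per line and that line i of the two files forms image pair i; this layout is an assumption and should be checked against the LiteFlowNet repository. The helper name `write_pair_lists` is hypothetical.

```python
from pathlib import Path


def write_pair_lists(frames, list1="img1_pathList.txt", list2="img2_pathList.txt"):
    """Write consecutive frame pairs (frame k, frame k + 1) to two list files.

    Assumed layout: one path per line, with matching line numbers forming a pair.
    Returns the number of pairs written.
    """
    frames = [str(frame) for frame in frames]
    first_images, second_images = frames[:-1], frames[1:]
    Path(list1).write_text("".join(path + "\n" for path in first_images))
    Path(list2).write_text("".join(path + "\n" for path in second_images))
    return len(first_images)
```

For example, calling `write_pair_lists(sorted(Path("frames").glob("*.png")))` on a hypothetical folder named frames would pair every frame with its successor.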

- Open the testing folder

$ cd LiteFlowNet3/models/testing

- Create a soft link in the folder /testing

$ ln -s ../../build/tools bin

- Replace MODE in ./test_MODE.py with batch if all the images have the same resolution (e.g. Sintel dataset); otherwise replace it with iter (e.g. KITTI dataset).

- Replace MODEL in lines 9 and 10 of test_MODE.py with one of the trained models (e.g. LiteFlowNet3-ft-sintel).

- Run the testing script. Flow fields (MODEL-0000000.flo, MODEL-0000001.flo, etc.) are stored in the folder /testing/results in the same order as the image pair sequence.

$ test_MODE.py img1_pathList.txt img2_pathList.txt results

Declaration

The early version of LiteFlowNet3 was submitted to ICCV 2019 for review in March 2019. The improved work was officially published in ECCV 2020 in August 2020. We uploaded a preprint to arXiv in July 2020. We are the first and the original authors to propose the aforementioned contributions (including but not limited to the motivations and technical details) for optical flow estimation.
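As a practical aside on the Testing section, the flow fields written by the testing script carry the .flo extension. The following is a minimal reader sketch, assuming the files follow the Middlebury .flo layout: a little-endian float32 tag equal to 202021.25, then int32 width and height, then interleaved float32 (u, v) values in row-major order. The function name `read_flo` is hypothetical and the layout should be confirmed against the files actually produced.

```python
import struct
import sys
from array import array

FLO_MAGIC = 202021.25  # float32 tag whose bytes spell "PIEH"


def read_flo(path):
    """Read a Middlebury-style .flo file into rows of (u, v) tuples."""
    with open(path, "rb") as handle:
        (magic,) = struct.unpack("<f", handle.read(4))
        if magic != FLO_MAGIC:
            raise ValueError("unexpected .flo tag: %r" % magic)
        width, height = struct.unpack("<ii", handle.read(8))
        values = array("f")
        values.fromfile(handle, 2 * width * height)
    if sys.byteorder == "big":
        values.byteswap()  # the file stores little-endian floats
    return [
        [
            (values[2 * (y * width + x)], values[2 * (y * width + x) + 1])
            for x in range(width)
        ]
        for y in range(height)
    ]
```

The result is indexed as `flow[y][x]`, giving the horizontal and vertical displacement of the pixel at column x and row y.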