timsainb / Noisereduce

Licence: MIT

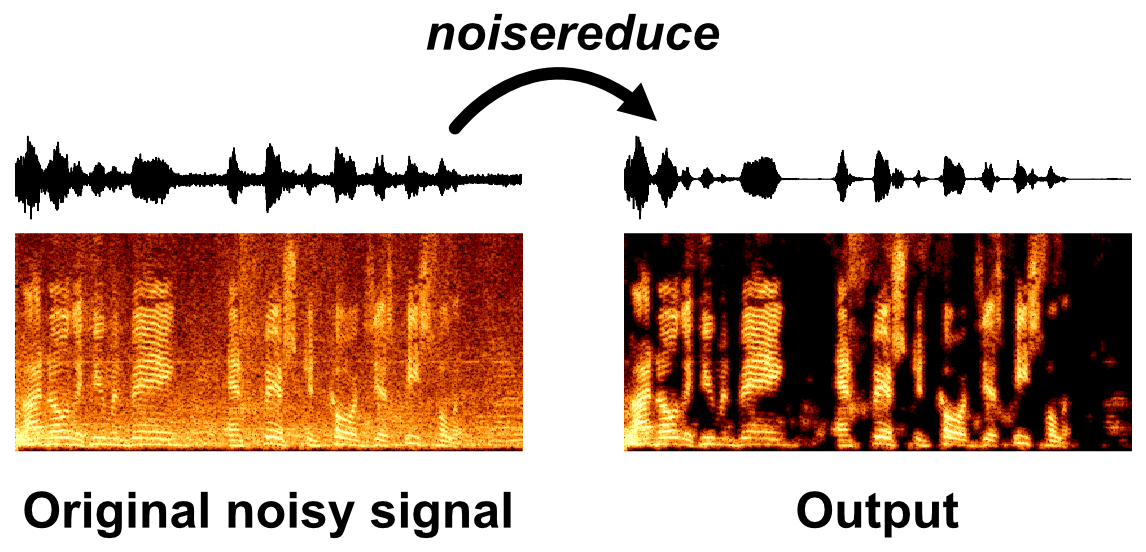

Noise reduction in python using spectral gating (speech, bioacoustics, time-domain signals)

Stars: ✭ 347

Projects that are alternatives or similar to Noisereduce

Deeppurpose

A Deep Learning Toolkit for DTI, Drug Property, PPI, DDI, Protein Function Prediction (Bioinformatics)

Stars: ✭ 342 (-1.44%)

Mutual labels: jupyter-notebook

Machine Learning

Content for Udacity's Machine Learning curriculum

Stars: ✭ 3,618 (+942.65%)

Mutual labels: jupyter-notebook

Pytorch Cortexnet

PyTorch implementation of the CortexNet predictive model

Stars: ✭ 349 (+0.58%)

Mutual labels: jupyter-notebook

Coursera Deep Learning Deeplearning.ai

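(Completed) Lecture notes, programming assignments, and after-class exercises for the NetEase Cloud Classroom micro-specialization "Deep Learning Engineer"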

Stars: ✭ 344 (-0.86%)

Mutual labels: jupyter-notebook

Nlp Papers With Arxiv

Statistics and accepted paper list of NLP conferences with arXiv link

Stars: ✭ 345 (-0.58%)

Mutual labels: jupyter-notebook

Fast Pytorch

Pytorch Tutorial, Pytorch with Google Colab, Pytorch Implementations: CNN, RNN, DCGAN, Transfer Learning, Chatbot, Pytorch Sample Codes

Stars: ✭ 346 (-0.29%)

Mutual labels: jupyter-notebook

Keras Oneshot

Koch et al., Siamese Networks for one-shot learning, (mostly) reimplemented in Keras

Stars: ✭ 348 (+0.29%)

Mutual labels: jupyter-notebook

Pytorch Tutorials Examples And Books

PyTorch 1.x tutorials, examples and some books I found (updated irregularly): a curated collection of the latest PyTorch 1.x tutorials, examples, and books

Stars: ✭ 346 (-0.29%)

Mutual labels: jupyter-notebook

Kaggle competition treasure

Describe past Kaggle solutions

Stars: ✭ 346 (-0.29%)

Mutual labels: jupyter-notebook

Nbval

A py.test plugin to validate Jupyter notebooks

Stars: ✭ 347 (+0%)

Mutual labels: jupyter-notebook

T81 558 deep learning

Washington University (in St. Louis) Course T81-558: Applications of Deep Neural Networks

Stars: ✭ 4,152 (+1096.54%)

Mutual labels: jupyter-notebook

Ml cheat sheet

My notes and superstitions about common machine learning algorithms

Stars: ✭ 349 (+0.58%)

Mutual labels: jupyter-notebook

Amazon Forest Computer Vision

Amazon Forest Computer Vision: Satellite Image tagging code using PyTorch / Keras with lots of PyTorch tricks

Stars: ✭ 346 (-0.29%)

Mutual labels: jupyter-notebook

Noise reduction in python using spectral gating

- This algorithm is based on (but does not completely reproduce) the one outlined by Audacity for its noise reduction effect (Link to C++ code)

- The algorithm takes two inputs:

- A noise audio clip containing prototypical noise of the audio clip (optional)

- A signal audio clip containing the signal and the noise intended to be removed

Steps of algorithm

- An FFT is calculated over the noise audio clip

- Statistics are calculated over the FFT of the noise (in frequency)

- A threshold is calculated based upon the statistics of the noise (and the desired sensitivity of the algorithm)

- An FFT is calculated over the signal

- A mask is determined by comparing the signal FFT to the threshold

- The mask is smoothed with a filter over frequency and time

- The mask is applied to the FFT of the signal, and the result is inverted back to the time domain
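The steps above can be sketched roughly as follows. This is a minimal illustration built on `scipy.signal.stft` and `scipy.ndimage.uniform_filter`, not the noisereduce implementation: the function name `spectral_gate`, its default values, the box-filter smoothing, and the synthetic test signal are all assumptions made for the example.

```python
import numpy as np
from scipy.ndimage import uniform_filter
from scipy.signal import istft, stft


def spectral_gate(signal, noise, rate, n_fft=1024, hop=256,
                  n_std_thresh=1.5, smooth=(3, 5), prop_decrease=1.0):
    """Illustrative spectral gating (hypothetical helper, not the library code)."""
    kwargs = dict(fs=rate, nperseg=n_fft, noverlap=n_fft - hop)

    # Steps 1-3: STFT of the noise clip, per-frequency statistics in dB,
    # and a threshold a few standard deviations above the mean.
    _, _, noise_stft = stft(noise, **kwargs)
    noise_db = 20 * np.log10(np.abs(noise_stft) + 1e-10)
    thresh = noise_db.mean(axis=1) + n_std_thresh * noise_db.std(axis=1)

    # Step 4: STFT of the full signal.
    _, _, sig_stft = stft(signal, **kwargs)
    sig_db = 20 * np.log10(np.abs(sig_stft) + 1e-10)

    # Step 5: the mask is 1 where a bin falls below the noise threshold.
    mask = (sig_db < thresh[:, None]).astype(float)

    # Step 6: smooth the mask over frequency (axis 0) and time (axis 1).
    mask = uniform_filter(mask, size=smooth, mode="nearest")

    # Step 7: attenuate the masked bins and invert back to the time domain.
    gain = 1.0 - prop_decrease * mask
    _, cleaned = istft(sig_stft * gain, **kwargs)
    return cleaned[: len(signal)]


# Synthetic check: one second of noise alone, then noise plus a 440 Hz tone.
rate = 8000
rng = np.random.default_rng(0)
t = np.arange(2 * rate) / rate
noise = 0.1 * rng.standard_normal(t.size)
tone = np.where(t >= 1.0, np.sin(2 * np.pi * 440 * t), 0.0)
noisy = tone + noise
cleaned = spectral_gate(noisy, noisy[:rate], rate)
```

In this sketch the first second of `noisy` serves as the noise clip, so the threshold is estimated from the same background that contaminates the tone.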

Installation

pip install noisereduce

noisereduce optionally uses Tensorflow as a backend to speed up FFT and gaussian convolution. It is not listed in requirements.txt because (1) it is optional and (2) tensorflow-gpu and tensorflow (cpu) are both compatible with this package. The package requires Tensorflow 2+ for all tensorflow operations.
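Because Tensorflow is optional, it can help to check whether it is installed before asking for the Tensorflow backend. The snippet below is a small sketch using only the standard library; the variable name `tensorflow_available` is an assumption for illustration, and the check does not verify that the installed version is 2 or later.

```python
import importlib.util

# True only if a TensorFlow installation can be located; nothing is imported here.
tensorflow_available = importlib.util.find_spec("tensorflow") is not None
print("TensorFlow available:", tensorflow_available)
```

The resulting boolean could then be passed as the `use_tensorflow` argument described under "Arguments" below.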

Usage

from scipy.io import wavfile

import noisereduce as nr

# load data

rate, data = wavfile.read("mywav.wav")

# select section of data that is noise

noisy_part = data[10000:15000]

# perform noise reduction

reduced_noise = nr.reduce_noise(audio_clip=data, noise_clip=noisy_part, verbose=True)
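To try the usage above without a recording at hand, a test file can be synthesized first. The following is a sketch under stated assumptions: the sample rate, tone frequency, amplitudes, and temporary output path are arbitrary choices for illustration. The signal is built so that samples 10000:15000 contain only noise, matching the slice used above.

```python
import os
import tempfile

import numpy as np
from scipy.io import wavfile

rate = 44100
rng = np.random.default_rng(0)
t = np.arange(rate) / rate  # one second of sample times

# Background noise throughout, with a 440 Hz tone only in the second half,
# so that samples 10000:15000 contain noise alone.
noise = 0.05 * rng.standard_normal(t.size)
tone = np.where(t >= 0.5, 0.5 * np.sin(2 * np.pi * 440 * t), 0.0)
samples = np.int16(np.clip(tone + noise, -1.0, 1.0) * 32767)

# Write the file to a temporary directory and read it back.
path = os.path.join(tempfile.mkdtemp(), "mywav.wav")
wavfile.write(path, rate, samples)

rate_read, data = wavfile.read(path)
noisy_part = data[10000:15000]
```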

Arguments to reduce_noise

n_grad_freq (int): how many frequency channels to smooth over with the mask.

n_grad_time (int): how many time channels to smooth over with the mask.

n_fft (int): length of the windowed signal after padding with zeros (the FFT size).

win_length (int): Each frame of audio is windowed by `window()`. The window will be of length `win_length` and then padded with zeros to match `n_fft`.

hop_length (int): number of audio frames between STFT columns.

n_std_thresh (int): how many standard deviations louder than the mean dB of the noise (at each frequency level) to be considered signal

prop_decrease (float): To what extent should you decrease noise (1 = all, 0 = none)

pad_clipping (bool): Pad the signals with zeros to ensure that the reconstructed data is equal length to the data

use_tensorflow (bool): Use tensorflow as a backend for convolution and fft to speed up computation

verbose (bool): Whether to plot the steps of the algorithm
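To see how `n_fft`, `win_length`, and `hop_length` interact, the sketch below computes an STFT with `scipy.signal.stft`. It assumes a mapping onto SciPy's parameters (`nfft`, `nperseg`, and `noverlap = win_length - hop_length`); the values are illustrative rather than the package defaults, and noisereduce's own STFT backend may differ in details such as padding.

```python
import numpy as np
from scipy.signal import stft

rate = 22050
n_fft, win_length, hop_length = 2048, 1024, 256  # illustrative values only
x = np.random.default_rng(0).standard_normal(rate)  # one second of noise

freqs, times, spec = stft(
    x,
    fs=rate,
    nperseg=win_length,                  # window length in samples
    noverlap=win_length - hop_length,    # overlap implied by the hop
    nfft=n_fft,                          # each window is zero-padded to n_fft
)

n_freq_bins = spec.shape[0]          # n_fft // 2 + 1 frequency channels
frame_step = times[1] - times[0]     # hop_length / rate seconds per column
print(n_freq_bins, frame_step)
```

A larger `n_fft` gives more frequency channels for `n_grad_freq` to smooth over, while a smaller `hop_length` gives more time columns for `n_grad_time`.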

Citation

If you use this code in your research, please cite it:

@software{tim_sainburg_2019_3243139,

author = {Tim Sainburg},

title = {timsainb/noisereduce: v1.0},

month = jun,

year = 2019,

publisher = {Zenodo},

version = {db94fe2},

doi = {10.5281/zenodo.3243139},

url = {https://doi.org/10.5281/zenodo.3243139}

}

or

@article{sainburg2020finding,

title={Finding, visualizing, and quantifying latent structure across diverse animal vocal repertoires},

author={Sainburg, Tim and Thielk, Marvin and Gentner, Timothy Q},

journal={PLoS computational biology},

volume={16},

number={10},

pages={e1008228},

year={2020},

publisher={Public Library of Science}

}

Project based on the cookiecutter data science project template. #cookiecutterdatascience
