anvaka / Pplay

Pixel Play

This website allows you to play with pixels on the screen using WebGL. All you need to do is fill in a function that takes a point and returns its color:

f(coordinate) => color

You can immediately see the scene and pan and zoom around it (as if it were Google Maps).

Once you like what you've created, you can simply copy the link and share it with the world (e.g. on /r/pplay).

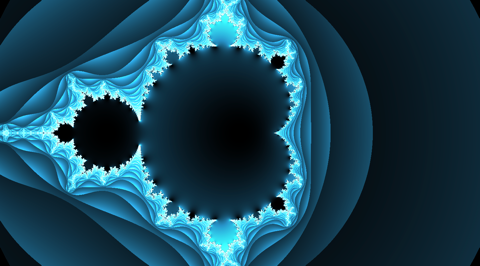

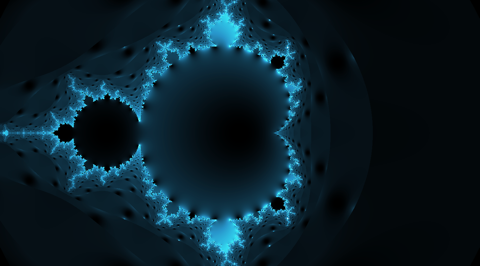

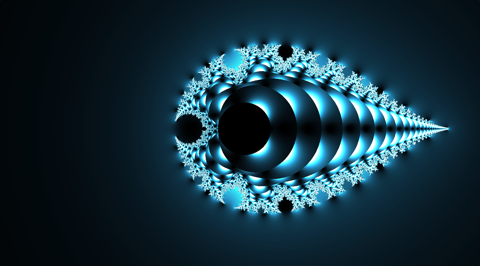

Example

If you click the "Randomize" button, the website will generate a fractal.

It is very easy to generate a fractal with just a couple of lines. All you need to do is update a sum inside the loop.

    vec4 get_color(vec2 p) {
      float t = 0.;
      vec2 z = vec2(0.);
      vec2 c = p;
      for(int i = 0; i < 32; ++i) {
        if (length(z) > 2.) break;
        // Change this line to get a new fractal
        z = c_mul(z, z) + c;
        t = float(i);
      }
      return length(z) * vec4(t * vec3(1./64., 1./32., 1./24.), 1.0);
    }

Changing the initial condition (variable c) or the final color code (where we return vec4) often produces very different and beautiful fractals.

Here are just a few examples. Click on any image above to explore the fractal, and try to find the difference in the code: it is going to be very small.
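As an illustration of how small such a change can be, here is a minimal sketch of a Julia-set variant of the example above. It is not one of the linked examples: the constant chosen for c is an arbitrary assumption, and the sketch relies on the c_mul helper that Pixel Play provides.

```glsl
// Julia-set variant of the Mandelbrot-style example (illustrative sketch).
// Only the two initial-condition lines differ from the original code.
vec4 get_color(vec2 p) {
  float t = 0.;
  // Changed: iteration starts from the pixel itself ...
  vec2 z = p;
  // ... and c is a fixed constant (arbitrary value picked for illustration).
  vec2 c = vec2(-0.8, 0.156);
  for (int i = 0; i < 32; ++i) {
    if (length(z) > 2.) break;
    // c_mul is Pixel Play's complex multiplication helper.
    z = c_mul(z, z) + c;
    t = float(i);
  }
  return length(z) * vec4(t * vec3(1./64., 1./32., 1./24.), 1.0);
}
```

Trying other constants for c, or changing the factors in the returned vec4, should give noticeably different pictures.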

Not only fractals

To be honest, this is a very generic tool. All it does is evaluate that one function: take the coordinate of a point and output a color.

The coordinate of a point is given by the variable vec2 p, so you can read p.x and p.y.

The color is returned from the main function as return vec4(r, g, b, a). Each component of this vector corresponds to a color channel (red, green, blue, alpha) and ranges between 0 and 1.

Given this information, we can change the output to anything we want:

Here we first set the color to a blue-ish vec4(0.1, 0.2, 0.4, 1.), and then mixed it with the coordinates of a point.

I have also used a special variable called iFrame. It is always equal to the current frame number (it increases with time), so we can use a periodic function like sin to get animation. You can find more special variables below.
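The shader being described is not reproduced in this text. A minimal sketch that matches the description might look like the following. The exact mixing formula, the 0.05 speed factor, and the 0.5 weights are assumptions made for illustration, not the original code.

```glsl
// Sketch: blue-ish base color mixed with point coordinates and animated
// with iFrame. All numeric factors below are arbitrary illustration values.
vec4 get_color(vec2 p) {
  // Start from the blue-ish base color.
  vec4 color = vec4(0.1, 0.2, 0.4, 1.);
  // sin turns the ever-growing frame counter into a value that
  // oscillates between 0 and 1.
  float wave = 0.5 + 0.5 * sin(p.x + p.y + iFrame * 0.05);
  // fract keeps the coordinate contribution inside [0, 1).
  vec2 cell = fract(p);
  // Mix the coordinates into the red and green channels.
  color.rg += 0.5 * wave * cell;
  return color;
}
```

With these factors the red and green channels stay below 0.6 and 0.7 respectively, so the result remains inside the valid 0..1 range.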

Extended functionality

Pixel Play comes with a small library of functions and variables.

External variables

Variables are modeled after shadertoy.com conventions and are available for you to use in your code:

    // Current frame number, counted from launch of the program
    uniform float iFrame;

    // How many seconds passed since program launch
    uniform float iTime;

    // Mouse (or touch) coordinates. `.xy` - current, `.zw` - last clicked.
    // Note: To translate coordinates to scene coordinates use
    // screen2scene(iMouse.xy) -> vec2 method.
    uniform vec4 iMouse;

    // screen resolution
    uniform vec2 iResolution;
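As a rough illustration of how these variables can be combined, the sketch below draws a pulsing glow around the mouse cursor. It assumes screen2scene behaves as the comment above describes. The 0.2 radius and the color factors are arbitrary values chosen for the example.

```glsl
// Sketch: highlight the area around the mouse cursor and pulse it over time.
vec4 get_color(vec2 p) {
  // Convert the current mouse position from screen to scene coordinates.
  vec2 m = screen2scene(iMouse.xy);
  // 1 at the cursor, fading to 0 at distance 0.2 (arbitrary radius).
  float glow = 1. - smoothstep(0., 0.2, length(p - m));
  // Slow pulse between 0 and 1 driven by elapsed seconds.
  float pulse = 0.5 + 0.5 * sin(iTime);
  return vec4(glow * pulse, glow, 0.4, 1.);
}
```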

Complex numbers math

Each complex number is a 2d vector (vec2 type). Standard GLSL multiplication and division operate component-wise on vectors, so they cannot be applied to complex numbers. Instead we have helper functions: c_mul(z1, z2) and c_div(z0, z1) multiply and divide two complex numbers.

Other functions, including trigonometric ones, are available with the prefix c_ (e.g. c_sin(z), c_cos(z), c_tanh(z), and so on).

You can find the list of them all here.
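To make the difference from component-wise arithmetic concrete, here is a sketch of what complex multiplication and division compute when the real part is stored in .x and the imaginary part in .y. The sketch_ names are hypothetical and chosen to avoid clashing with the built-in helpers. Pixel Play's own implementation may differ in detail.

```glsl
// (a + bi)(c + di) = (ac - bd) + (ad + bc)i
vec2 sketch_c_mul(vec2 a, vec2 b) {
  return vec2(a.x * b.x - a.y * b.y, a.x * b.y + a.y * b.x);
}

// a / b = a * conj(b) / |b|^2
// Note: the result is undefined when b is zero.
vec2 sketch_c_div(vec2 a, vec2 b) {
  float d = dot(b, b); // |b|^2
  return vec2(a.x * b.x + a.y * b.y, a.y * b.x - a.x * b.y) / d;
}
```

By contrast, the plain expression a * b on two vec2 values yields vec2(a.x * b.x, a.y * b.y), which is not a complex product.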

Query string limit

By default your code is saved in the query string. However, if it exceeds 1,000 characters it cannot be saved (as browsers do not support long query strings). This shouldn't be a problem if you don't want to share your code.

But if you want to share what you've created, you will need to save your code with the .glsl extension on https://gist.github.com/ and then update the query string of PixelPlay:

- Delete the fc query argument (if present)
- Add gist=gistId

When you save a gist, it will give you a url like this: https://gist.github.com/anvaka/0f11251039eb366630bc7ca08ce0eefd

The string of characters at the end of that url is your gistId. The final url will look like this:

https://anvaka.github.io/pplay/?gist=0f11251039eb366630bc7ca08ce0eefd&tx=0.284&ty=0.284&scale=0.471

Audio channel

It is possible to make an audio channel accessible to a shader. I'm still not sure what the best way to model this is (your suggestions are appreciated!), but here is how it is constructed now.

Each of the 512 columns in a texture row represents a frequency, encoded in rgba format:

- r - immediate frequency value
- g - average frequency value (calculated as g = prev_g * 0.5 + r * 0.5)
- b - "long" average frequency value (calculated as b = 0.9 * prev_b + r * 0.1)
- a - not used at the moment.

Immediate value is usually very "spiky", while b is smoothed out.

The first two rows of the texture alternate between the current and the previous rendering frame. On an odd frame number, row 0 holds the current audio buffer and row 1 holds the previous buffer. On an even frame number this is inverted (the current audio signal is in row 1, while the previous signal is in row 0). This alternation is done to preserve CPU cycles and not move data unnecessarily.

Rows 2 and 3 (the third and fourth rows of the texture) also alternate between the current and the previous frame, but they serve as an aggregation mechanism:

- Columns 0 .. 255 store the average value of frequency pairs, i.e. column(i) = (freq[2 * i] + freq[2 * i + 1]) / 2
- Columns 256 .. 383 store the average value of pairs in the first 256 columns, i.e. column(256 + i) = (column(2 * i) + column(2 * i + 1)) / 2
- .. and so on ..

For example, consider the following row of original frequencies:

    Original row: 2 2 1 3 4 6 1 3
    Aggregation:  2 2 5 2

As you can see, we only need four elements to store the aggregated pairs of the first row, so let's use the remaining columns in the aggregation row to aggregate the aggregations:

    Aggregation:        2 2 5 2
    Aggregation (cont): 2 3.5
    Aggregation (cont): 2.75

Or, if we flatten the aggregation row out:

    Original row: 2 2 1 3 4 6 1 3
    Aggregation:  2 2 5 2 2 3.5 2.75 nil

This aggregation row is created to avoid summation and loops in the shader. If you need to know the average volume of the sound, you don't need to compute

    (2 + 2 + 1 + 3 + 4 + 6 + 1 + 3)/8 = 2.75

You just look at the second-to-last column in the aggregation row. Here is a snippet to fetch the audio frequency at a given column/row:

    vec4 getBandValue(float bandId, float row) {
      // assuming sound is bound to `iChannel0`
      // the + 0.5 offsets sample the center of each texel (512 columns, 4 rows)
      return texture2D(iChannel0, vec2((bandId + 0.5)/512., (row + 0.5)/4.));
    }

    // then you can use it like so (inside a function such as get_color):

    // This takes current frequency value in bin 42.
    vec4 imm = getBandValue(42., mod(iFrame + 1., 2.));

    // This takes previous frame frequency value in bin 42.
    vec4 imm_prev = getBandValue(42., mod(iFrame, 2.));

    // To get average volume of the current frame:
    vec4 avg_Volume = getBandValue(510., mod(iFrame + 1., 2.) + 2.);

    // To get average aggregated low frequency of the current frame (bass)
    // 498. = 512. - 2. - 4. - 8.
    vec4 avg_bass = getBandValue(498., mod(iFrame + 1., 2.) + 2.);
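If the aggregation row is laid out exactly as described (256 pair averages, then 128, then 64, and so on down to one overall average in column 510), the first column of each level can be computed rather than hard-coded. The helpers below are a hypothetical sketch built on getBandValue from the snippet above. They are not part of Pixel Play.

```glsl
// Hypothetical helper: first column of aggregation level k, where
// level 1 holds 256 averages, level 2 holds 128, ..., level 9 holds 1.
// levelStart(1.) == 0., levelStart(2.) == 256., levelStart(9.) == 510.
float levelStart(float k) {
  // floor(x + 0.5) rounds, guarding against an inexact exp2 on some GPUs.
  return floor(512. - exp2(10. - k) + 0.5);
}

// Hypothetical helper: entry `index` of aggregation level k for the
// current frame. Level k has exp2(9. - k) entries, indexed from 0.
vec4 getAggregate(float k, float index) {
  float row = mod(iFrame + 1., 2.) + 2.; // current-frame aggregation row
  return getBandValue(levelStart(k) + index, row);
}
```

For example, getAggregate(9., 0.) reads column 510, the overall average used above. Under the same assumed layout, the level with eight entries starts at column 496, so getAggregate(6., 0.) would be the average of the lowest eighth of the spectrum.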

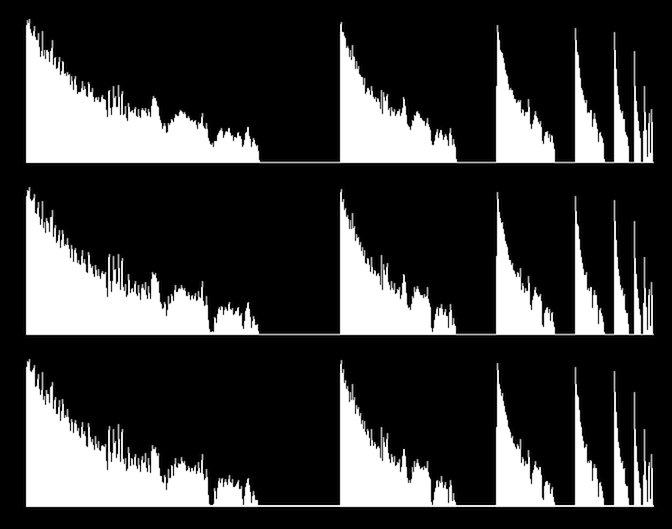

Here is an example of the three histograms:

- The first row is a smoothed, long average (b component).
- The second row is an average value (g component).
- The last row is the immediate value (r component).

From left to right, each histogram represents the average value of the previous histogram:

original <- avg <- avg of avg <- avg of avg of avg ...

If you are using a desktop computer, you can click on the image above to open it and play with it interactively. Why desktop? Because of the following caveat:

Caveat

Keep in mind that Apple does not support audio element analysis on iOS.

Thanks

Thank you for reading this!

I hope you find this project useful and fun to play with. Please don't forget to share what you find or learn.

Local build

    # install dependencies
    npm install

    # serve with hot reload at localhost:8889
    npm start

License

MIT