ph1ps / Nudity Coreml

Licence: MIT

A CoreML model that detects nudity in a picture

Stars: ✭ 87

Programming Languages

swift

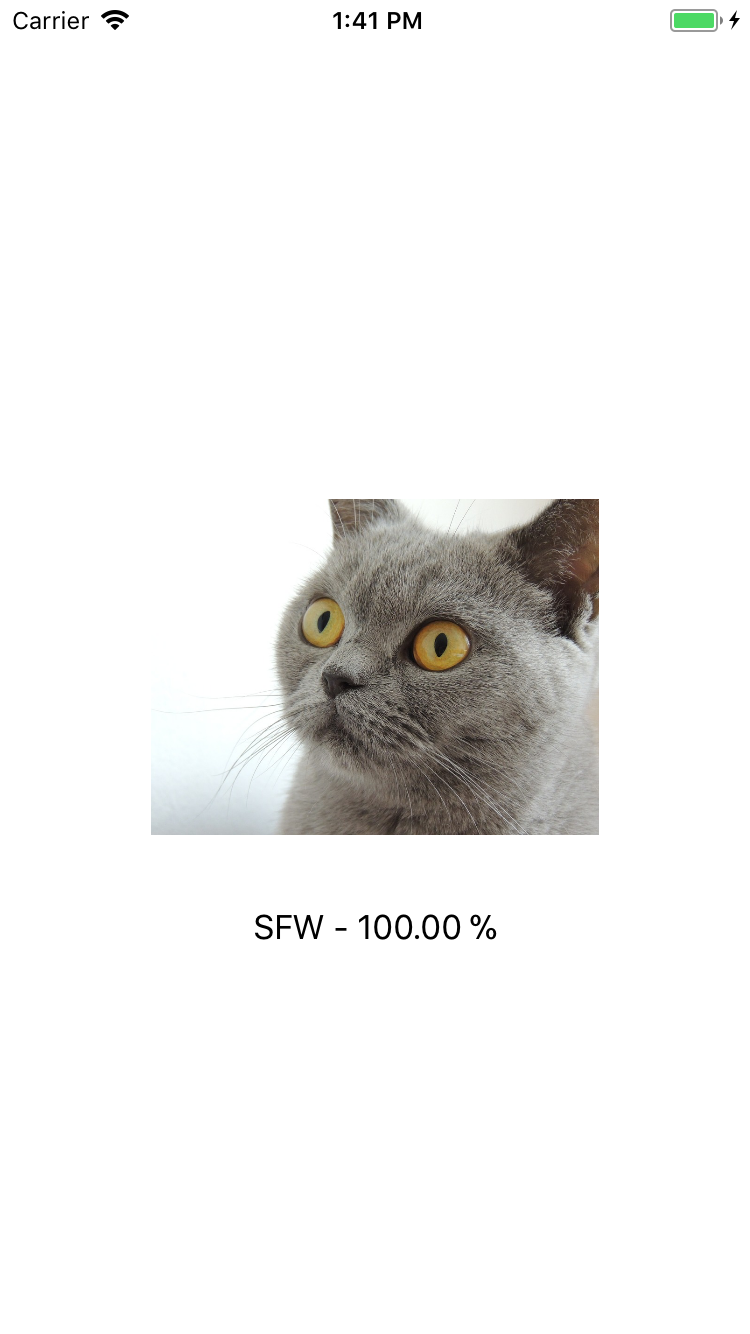

Nudity Detection for CoreML

Description

This is the OpenNSFW model converted to Apple's CoreML framework. It classifies an image as either SFW (safe for work) or NSFW (not safe for work). The model was built with Caffe and is a fine-tuned ResNet model.

To test this model, open Nudity.xcodeproj and run it on your device (iOS 11 and Xcode 9 are required). To test further images, add them to the project and replace my test images with yours.
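For readers who want to call the model from their own code rather than from the sample project, the following is a minimal sketch using Apple's Vision framework. It is not taken from this repository: the function names `classify` and `topLabel` are hypothetical, and the sketch assumes the converted model is a classifier that emits labels such as "SFW" and "NSFW". The Vision part is wrapped in `canImport` checks so that the file still compiles on platforms without Vision.

```swift
#if canImport(Vision) && canImport(CoreML)
import CoreML
import Vision
#endif
import Foundation

/// Returns the label with the highest confidence, or nil for an empty map.
/// The label names are whatever the model emits ("SFW"/"NSFW" is an assumption).
func topLabel(in scores: [String: Float]) -> String? {
    return scores.max { $0.value < $1.value }?.key
}

#if canImport(Vision) && canImport(CoreML)
/// Runs a Core ML image classifier on one image and returns
/// a map from label to confidence.
/// Assumes `model` is a classifier; other model types produce no
/// classification observations and yield an empty map.
func classify(_ image: CGImage, with model: MLModel) throws -> [String: Float] {
    let visionModel = try VNCoreMLModel(for: model)
    let request = VNCoreMLRequest(model: visionModel)
    // Let Vision scale and crop the image to the model's input size.
    request.imageCropAndScaleOption = .centerCrop

    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    let observations = request.results as? [VNClassificationObservation] ?? []
    var scores: [String: Float] = [:]
    for observation in observations {
        scores[observation.identifier] = observation.confidence
    }
    return scores
}
#endif
```

A caller would load the compiled model into an `MLModel` (for example through the class Xcode generates for the model file), pass it to `classify` together with a `CGImage`, and feed the result to `topLabel` to get the predicted label.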

Obtaining the model

- Download the model from Google Drive and drag it right into your project folder

- Convert the model on your own:

  - Change directory:

    cd ./Convert

  - Run the conversion script:

    sh convert.sh

More information

If you want to find out more about how this model works and what data it was trained on, feel free to visit the original OpenNSFW page on GitHub.

Examples

I won't show any NSFW examples for obvious reasons.
