1Konny / Wae Pytorch

Stars: ✭ 47

Programming Languages

python


Projects that are alternatives to or similar to Wae Pytorch

Fun-with-MNIST

Playing with MNIST. Machine Learning. Generative Models.

Stars: ✭ 23 (-51.06%)

Mutual labels: vae, wgan

Tensorflow Generative Model Collections

Collection of generative models in Tensorflow

Stars: ✭ 3,785 (+7953.19%)

Mutual labels: vae, wgan

Generative-Model

Repository for implementation of generative models with Tensorflow 1.x

Stars: ✭ 66 (+40.43%)

Mutual labels: vae, wgan

Deeplearningmugenknock

An implementation CheatSheet for doing deep learning endlessly, so you can do DeepLearning with deep learning

Stars: ✭ 684 (+1355.32%)

Mutual labels: vae, wgan

generative deep learning

Generative Deep Learning Sessions led by Anugraha Sinha (Machine Learning Tokyo)

Stars: ✭ 24 (-48.94%)

Mutual labels: vae, wgan

Generative Models

Annotated, understandable, and visually interpretable PyTorch implementations of: VAE, BIRVAE, NSGAN, MMGAN, WGAN, WGANGP, LSGAN, DRAGAN, BEGAN, RaGAN, InfoGAN, fGAN, FisherGAN

Stars: ✭ 438 (+831.91%)

Mutual labels: vae, wgan

Awesome Vaes

A curated list of awesome work on VAEs, disentanglement, representation learning, and generative models.

Stars: ✭ 418 (+789.36%)

Mutual labels: vae

Generative Models

Collection of generative models, e.g. GAN, VAE in Pytorch and Tensorflow.

Stars: ✭ 6,701 (+14157.45%)

Mutual labels: vae

Disentangling Vae

Experiments for understanding disentanglement in VAE latent representations

Stars: ✭ 398 (+746.81%)

Mutual labels: vae

Pytorch Vqvae

Vector Quantized VAEs - PyTorch Implementation

Stars: ✭ 396 (+742.55%)

Mutual labels: vae

Voice Conversion

Voice conversion (VC) investigation using three variants of VAE

Stars: ✭ 21 (-55.32%)

Mutual labels: vae

Awesome Gans

Awesome Generative Adversarial Networks with tensorflow

Stars: ✭ 585 (+1144.68%)

Mutual labels: wgan

Tensorflow Mnist Vae

Tensorflow implementation of variational auto-encoder for MNIST

Stars: ✭ 422 (+797.87%)

Mutual labels: vae

Variational Autoencoder

Variational autoencoder implemented in tensorflow and pytorch (including inverse autoregressive flow)

Stars: ✭ 807 (+1617.02%)

Mutual labels: vae

Joint Vae

Pytorch implementation of JointVAE, a framework for disentangling continuous and discrete factors of variation 🌟

Stars: ✭ 404 (+759.57%)

Mutual labels: vae

Dlow

Official PyTorch Implementation of "DLow: Diversifying Latent Flows for Diverse Human Motion Prediction". ECCV 2020.

Stars: ✭ 32 (-31.91%)

Mutual labels: vae

Deepnude An Image To Image Technology

DeepNude's algorithm and general image generation theory and practice research, including pix2pix, CycleGAN, UGATIT, DCGAN, SinGAN, ALAE, mGANprior, StarGAN-v2 and VAE models (TensorFlow2 implementation).

Stars: ✭ 4,029 (+8472.34%)

Mutual labels: vae

Tensorflow Vae Gan Draw

A collection of generative methods implemented with TensorFlow (Deep Convolutional Generative Adversarial Networks (DCGAN), Variational Autoencoder (VAE) and DRAW: A Recurrent Neural Network For Image Generation).

Stars: ✭ 577 (+1127.66%)

Mutual labels: vae

Variational Autoencoder

PyTorch implementation of "Auto-Encoding Variational Bayes"

Stars: ✭ 25 (-46.81%)

Mutual labels: vae

WAE-pytorch

PyTorch implementation of WAE-MMD (paper).
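WAE-MMD (Tolstikhin et al.) minimizes a reconstruction cost plus a λ-weighted MMD penalty that pulls the distribution of encoded codes Q_Z toward the prior P_Z. The following is a framework-free sketch in plain Python, not this repository's PyTorch code. It assumes a squared-L2 reconstruction cost, a standard Gaussian prior, and an inverse multiquadratics kernel with scale C = 2·d_z. The names `imq_kernel`, `mmd_penalty`, `wae_mmd_loss` and the default `lam` are hypothetical.

```python
import random


def imq_kernel(x, y, c):
    """Inverse multiquadratics kernel k(x, y) = c / (c + ||x - y||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return c / (c + sq_dist)


def mmd_penalty(z_q, z_p, c):
    """Unbiased estimate of MMD^2 between encoded codes z_q and prior samples z_p.

    Both arguments are lists of n latent vectors (lists of floats) with n >= 2.
    """
    n = len(z_q)
    if n != len(z_p) or n < 2:
        raise ValueError("need two equally sized batches with at least 2 vectors each")
    # Within-batch terms skip i == j so the estimate stays unbiased.
    k_qq = sum(imq_kernel(z_q[i], z_q[j], c)
               for i in range(n) for j in range(n) if i != j)
    k_pp = sum(imq_kernel(z_p[i], z_p[j], c)
               for i in range(n) for j in range(n) if i != j)
    # The cross term averages over all n * n pairs.
    k_qp = sum(imq_kernel(a, b, c) for a in z_q for b in z_p)
    return (k_qq + k_pp) / (n * (n - 1)) - 2.0 * k_qp / (n * n)


def wae_mmd_loss(x, x_recon, z_q, lam=10.0, rng=None):
    """Hypothetical WAE-MMD loss: mean squared-L2 reconstruction cost + lam * MMD."""
    if rng is None:
        rng = random.Random(0)
    n, d_z = len(z_q), len(z_q[0])
    # Draw as many samples from the prior P_Z = N(0, I) as there are encoded codes.
    z_p = [[rng.gauss(0.0, 1.0) for _ in range(d_z)] for _ in range(n)]
    # Squared L2 distance per example, averaged over the batch.
    recon = sum(sum((a - b) ** 2 for a, b in zip(xi, ri))
                for xi, ri in zip(x, x_recon)) / n
    c = 2.0 * d_z  # kernel scale C = 2 * d_z * sigma^2 with unit prior variance
    return recon + lam * mmd_penalty(z_q, z_p, c)
```

In a PyTorch version, the same three kernel sums would be computed on batched tensors so the penalty backpropagates into the encoder. The kernel scale and `lam` are tuning choices, not values taken from this repository.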

Dependencies

- python 3.6.4
- pytorch 0.3.1.post2
- visdom

Usage

- download `img_align_celeba.zip` and `list_eval_partition.txt` from here, make a `data` directory, put the downloaded files into `data`, and then run `./preprocess_celeba.sh`. For example:

    .
    └── data
        └── img_align_celeba.zip
        └── list_eval_partition.txt

- initialize visdom:

    python -m visdom.server

- run the training script:

    sh run_celeba_wae_mmd.sh

- check the training process on the visdom server at localhost:8097

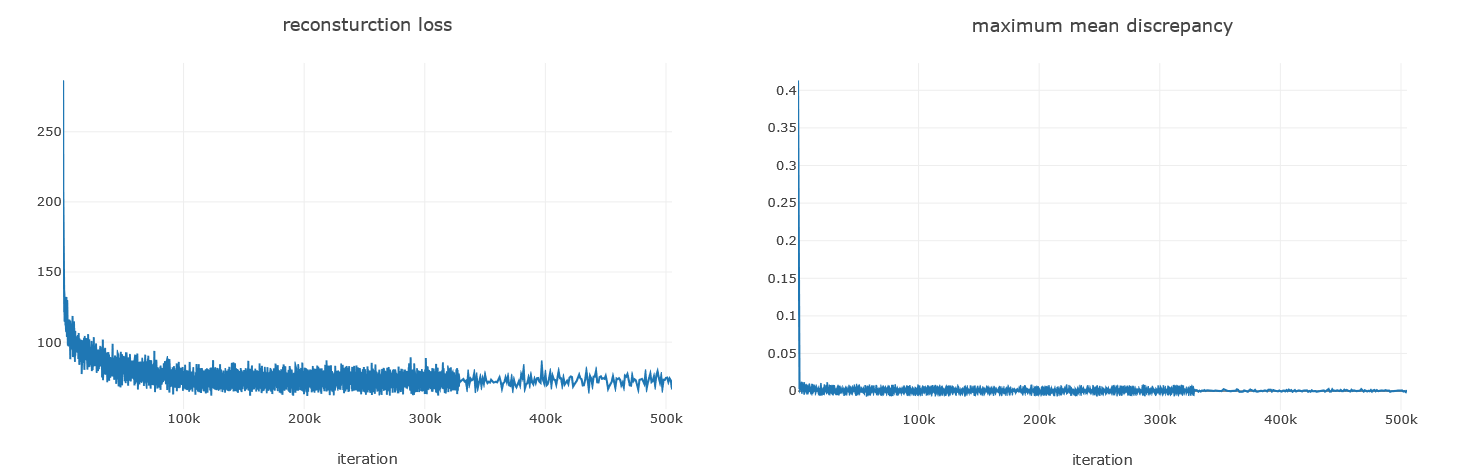

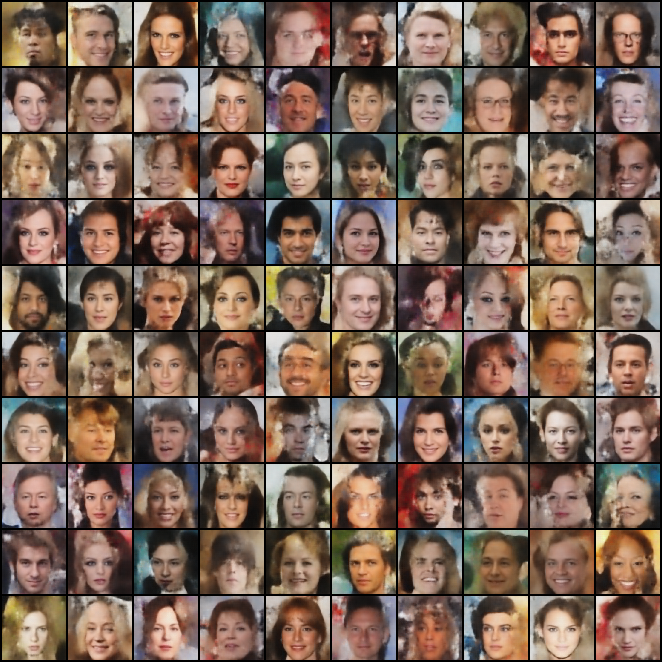
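The contents of `preprocess_celeba.sh` are not reproduced here. As a hypothetical illustration only, the sketch below shows the split information that `list_eval_partition.txt` carries: each line pairs an image filename with a partition label (0 = train, 1 = validation, 2 = test). The helper name `split_by_partition` is an assumption, not part of the repository.

```python
from collections import defaultdict

# Partition labels used in CelebA's list_eval_partition.txt.
SPLIT_NAMES = {0: "train", 1: "val", 2: "test"}


def split_by_partition(lines):
    """Group image filenames by split; `lines` yields 'filename label' strings."""
    splits = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines, e.g. a trailing newline
        filename, label = line.split()
        splits[SPLIT_NAMES[int(label)]].append(filename)
    return dict(splits)
```

Because it accepts any iterable of lines, an open file handle such as `open("data/list_eval_partition.txt")` can be passed directly.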

Results - CelebA

training plots

train data reconstruction

test data reconstruction

random data generation by sampling z from the prior P(z)

References

- Tolstikhin et al., Wasserstein Auto-Encoders, ICLR 2018

- Code repositories: the official implementation and a re-implementation, both in TensorFlow
