malllabiisc / Wordgcn

WordGCN

Incorporating Syntactic and Semantic Information in Word Embeddings using Graph Convolutional Networks

Overview of WordGCN

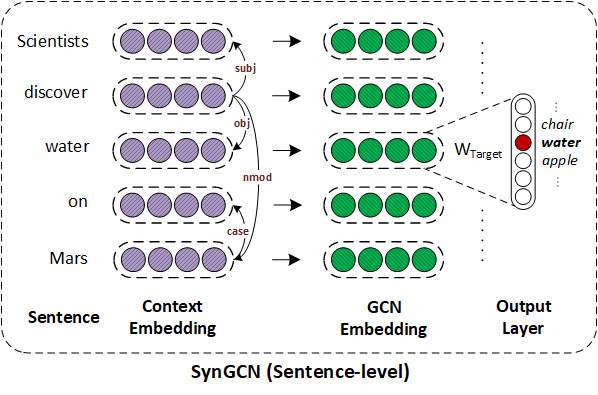

Overview of SynGCN: SynGCN employs a Graph Convolutional Network (GCN) to exploit dependency context when learning word embeddings. For each word in the vocabulary, the model learns its representation by predicting the word from its dependency context, which is encoded using GCNs. Please refer to Section 5 of the paper for more details.

Dependencies

- Compatible with TensorFlow 1.x and Python 3.x.

- Dependencies can be installed using requirements.txt:

    pip3 install -r requirements.txt

- Install word-embedding-benchmarks used for evaluating learned embeddings.

  - The test and valid dataset splits used in the paper can be downloaded from this link. Replace the original ~/web_data folder with the provided one.

  - For switching between valid and test split execute

      python switch_evaluation_data.py -split <valid/test>

Dataset:

- We used the Wikipedia corpus. The processed version can be downloaded from here or using the script below:

    pip install gdown
    gdown --id 1iFpuKFpDnXCD9QpUw8wStG3ndKl7-KwX -O data.zip
    unzip data.zip
    rm data.zip

- The processed dataset includes:

  - voc2id.txt: mapping of words to their unique identifiers.

  - id2freq.txt: contains the frequency of words in the corpus.

  - de2id.txt: mapping of dependency relations to their unique identifiers.

  - data.txt: contains the entire Wikipedia corpus, with each sentence stored in the following format:

      <num_words> <num_dep_rels> tok1 tok2 tok3 ... tokn dep_e1 dep_e2 .... dep_em

    - Here, num_words is the number of words and num_dep_rels denotes the number of dependency relations in the sentence.

    - tok1, tok2 ... is the list of tokens in the sentence and dep_e1, dep_e2 ... is the list of dependency relations, where each is of the form source_token|destination_token|dep_rel_label.
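The sentence format described above can be read back with a few lines of Python. The following is only a minimal sketch, not the repository's own loader: the names `DepEdge`, `Sentence`, and `parse_sentence` are hypothetical, the example line is invented for illustration, and all fields are kept as strings because their exact encoding is not restated here.

```python
from typing import List, NamedTuple


class DepEdge(NamedTuple):
    """One dependency relation: source_token|destination_token|dep_rel_label."""
    source: str
    destination: str
    label: str


class Sentence(NamedTuple):
    tokens: List[str]
    edges: List[DepEdge]


def parse_sentence(line: str) -> Sentence:
    """Parse one line of the form:
    <num_words> <num_dep_rels> tok1 ... tokn dep_e1 ... dep_em
    """
    fields = line.split()
    num_words, num_dep_rels = int(fields[0]), int(fields[1])
    body = fields[2:]
    # The two header counts must account for every remaining field.
    if len(body) != num_words + num_dep_rels:
        raise ValueError(
            f"expected {num_words + num_dep_rels} fields, found {len(body)}"
        )
    tokens = body[:num_words]
    edges = []
    for item in body[num_words:]:
        # Assumes none of the three parts contains a '|' character.
        source, destination, label = item.split("|")
        edges.append(DepEdge(source, destination, label))
    return Sentence(tokens, edges)


# Hypothetical example line; the values are invented for illustration.
example = "3 2 12 7 45 1|0|3 1|2|8"
sentence = parse_sentence(example)
print(sentence.tokens)
print(sentence.edges)
```

The length check turns a truncated or corrupted line into an explicit error instead of a silently misaligned token list.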

Training SynGCN embeddings:

- Download the processed Wikipedia corpus (link) and extract it in ./data directory.

- Execute make to compile the C++ code for creating batches.

- To start training run:

    python syngcn.py -name test_embeddings -gpu 0 -dump -maxsentlen <max_sentence_length in your data.txt> -maxdeplen <max_dependency_length in your data.txt> -embed_dim 300

- The trained embeddings will be stored in ./embeddings directory with the provided name test_embeddings.

- Note: As reported in TensorFlow issue #13048, the current TF-based implementation of SynGCN is slow compared to Mikolov's word2vec implementation. Training SynGCN on a very large corpus might require a multi-GPU or C++ based implementation.
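The training command needs two values measured from your own data.txt. One way to obtain them is to scan the two header counts on every line, as in the sketch below. It assumes that -maxsentlen and -maxdeplen correspond to the largest num_words and num_dep_rels in the file, which is inferred from the placeholders in the command rather than confirmed; `max_lengths` is a hypothetical helper name.

```python
from typing import Iterable, Tuple


def max_lengths(lines: Iterable[str]) -> Tuple[int, int]:
    """Return (max num_words, max num_dep_rels) over all sentence lines."""
    max_words = max_deps = 0
    for line in lines:
        # Only the two leading counts are needed, so split at most twice.
        fields = line.split(maxsplit=2)
        if len(fields) < 2:
            continue  # skip blank or truncated lines
        max_words = max(max_words, int(fields[0]))
        max_deps = max(max_deps, int(fields[1]))
    return max_words, max_deps


# Usage on the real corpus (path assumed from the extraction step):
#     with open("./data/data.txt") as f:
#         print(max_lengths(f))

# Hypothetical sample lines; the values are invented for illustration.
sample = ["3 2 12 7 45 1|0|3 1|2|8", "2 1 5 9 0|1|4"]
print(max_lengths(sample))
```

Because the function iterates over the file object line by line, it does not load the whole corpus into memory.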

Fine-tuning embedding using SemGCN:

- Pre-trained 300-dimensional SynGCN embeddings can be downloaded from here.

- For incorporating semantic information in given embeddings run:

    python semgcn.py -embed ./embeddings/pretrained_embed.txt -semantic synonyms -embed_dim 300 -name fine_tuned_embeddings -dump -gpu 0

- The fine-tuned embeddings will be saved in ./embeddings directory with name fine_tuned_embeddings.
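Before passing a file to -embed, it can help to confirm that every vector has the dimension given to -embed_dim. The sketch below assumes a plain-text layout with one word per line followed by its whitespace-separated vector components; that layout is an assumption, not something stated above, and `load_embeddings` is a hypothetical helper name.

```python
from typing import Dict, Iterable, List


def load_embeddings(lines: Iterable[str], dim: int = 300) -> Dict[str, List[float]]:
    """Read 'word v1 v2 ... vd' lines, keeping only rows with exactly dim values."""
    vectors: Dict[str, List[float]] = {}
    for line in lines:
        parts = line.split()
        if len(parts) != dim + 1:
            continue  # skip header lines or rows of the wrong dimension
        vectors[parts[0]] = [float(x) for x in parts[1:]]
    return vectors


# Usage on a real file (path taken from the command above):
#     with open("./embeddings/pretrained_embed.txt") as f:
#         vectors = load_embeddings(f, dim=300)

# Hypothetical 3-dimensional sample; the values are invented for illustration.
sample = ["2 3\n", "king 0.1 0.2 0.3\n", "queen 0.1 0.25 0.3\n"]
vectors = load_embeddings(sample, dim=3)
print(sorted(vectors))
```

Comparing the number of loaded vectors with the number of lines in the file shows how many rows were skipped.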

Extrinsic Evaluation:

For extrinsic evaluation of embeddings the models from the following papers were used:

- NCR (Neural Co-reference Resolution): Higher-order Coreference Resolution with Coarse-to-fine Inference.

- NER (Named Entity Recognition): NeuroNER: an easy-to-use program for named-entity recognition based on neural networks.

- POS (Part-of-speech tagging): BiLSTM-CNN-CRF architecture for sequence tagging.

- SQuAD (Question Answering): Simple and Effective Multi-Paragraph Reading Comprehension.

Citation:

Please cite the following paper if you use this code in your work.

@inproceedings{wordgcn2019,

title = "Incorporating Syntactic and Semantic Information in Word Embeddings using Graph Convolutional Networks",

author = "Vashishth, Shikhar and

Bhandari, Manik and

Yadav, Prateek and

Rai, Piyush and

Bhattacharyya, Chiranjib and

Talukdar, Partha",

booktitle = "Proceedings of the 57th Conference of the Association for Computational Linguistics",

month = jul,

year = "2019",

address = "Florence, Italy",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/P19-1320",

pages = "3308--3318"

}

For any clarification, comments, or suggestions please create an issue or contact Shikhar.