TatsuyaShirakawa / Poincare Embedding

Licence: mit

Poincaré Embedding (unofficial)

Stars: ✭ 218

Projects that are alternatives of or similar to Poincare Embedding

Multi object datasets

Multi-object image datasets with ground-truth segmentation masks and generative factors.

Stars: ✭ 121 (-44.5%)

Mutual labels: representation-learning

Deformable Kernels

Deforming kernels to adapt towards object deformation. In ICLR 2020.

Stars: ✭ 166 (-23.85%)

Mutual labels: representation-learning

Variational Ladder Autoencoder

Implementation of VLAE

Stars: ✭ 196 (-10.09%)

Mutual labels: representation-learning

Srl Zoo

State Representation Learning (SRL) zoo with PyTorch - Part of S-RL Toolbox

Stars: ✭ 125 (-42.66%)

Mutual labels: representation-learning

Autoregressive Predictive Coding

Autoregressive Predictive Coding: An unsupervised autoregressive model for speech representation learning

Stars: ✭ 138 (-36.7%)

Mutual labels: representation-learning

Stylealign

[ICCV 2019] Aggregation via Separation: Boosting Facial Landmark Detector with Semi-Supervised Style Transition

Stars: ✭ 172 (-21.1%)

Mutual labels: representation-learning

Sigver wiwd

Learned representation for Offline Handwritten Signature Verification. Models and code to extract features from signature images.

Stars: ✭ 112 (-48.62%)

Mutual labels: representation-learning

Pytorch Byol

PyTorch implementation of Bootstrap Your Own Latent: A New Approach to Self-Supervised Learning

Stars: ✭ 213 (-2.29%)

Mutual labels: representation-learning

Attribute Aware Attention

[ACM MM 2018] Attribute-Aware Attention Model for Fine-grained Representation Learning

Stars: ✭ 143 (-34.4%)

Mutual labels: representation-learning

Vae vampprior

Code for the paper "VAE with a VampPrior", J.M. Tomczak & M. Welling

Stars: ✭ 173 (-20.64%)

Mutual labels: representation-learning

Hyte

EMNLP 2018: HyTE: Hyperplane-based Temporally aware Knowledge Graph Embedding

Stars: ✭ 130 (-40.37%)

Mutual labels: representation-learning

Kate

Code & data accompanying the KDD 2017 paper "KATE: K-Competitive Autoencoder for Text"

Stars: ✭ 135 (-38.07%)

Mutual labels: representation-learning

Jodie

A PyTorch implementation of ACM SIGKDD 2019 paper "Predicting Dynamic Embedding Trajectory in Temporal Interaction Networks"

Stars: ✭ 172 (-21.1%)

Mutual labels: representation-learning

Pcl

PyTorch code for "Prototypical Contrastive Learning of Unsupervised Representations"

Stars: ✭ 124 (-43.12%)

Mutual labels: representation-learning

Semantic Embeddings

Hierarchy-based Image Embeddings for Semantic Image Retrieval

Stars: ✭ 196 (-10.09%)

Mutual labels: representation-learning

Declutr

The corresponding code from our paper "DeCLUTR: Deep Contrastive Learning for Unsupervised Textual Representations". Do not hesitate to open an issue if you run into any trouble!

Stars: ✭ 111 (-49.08%)

Mutual labels: representation-learning

Awesome Visual Representation Learning With Transformers

Awesome Transformers (self-attention) in Computer Vision

Stars: ✭ 166 (-23.85%)

Mutual labels: representation-learning

Paddlehelix

Bio-Computing Platform featuring Large-Scale Representation Learning and Multi-Task Deep Learning “螺旋桨”生物计算工具集

Stars: ✭ 213 (-2.29%)

Mutual labels: representation-learning

Awesome Network Embedding

A curated list of network embedding techniques.

Stars: ✭ 2,379 (+991.28%)

Mutual labels: representation-learning

Simclr

SimCLRv2 - Big Self-Supervised Models are Strong Semi-Supervised Learners

Stars: ✭ 2,720 (+1147.71%)

Mutual labels: representation-learning

poincare-embedding

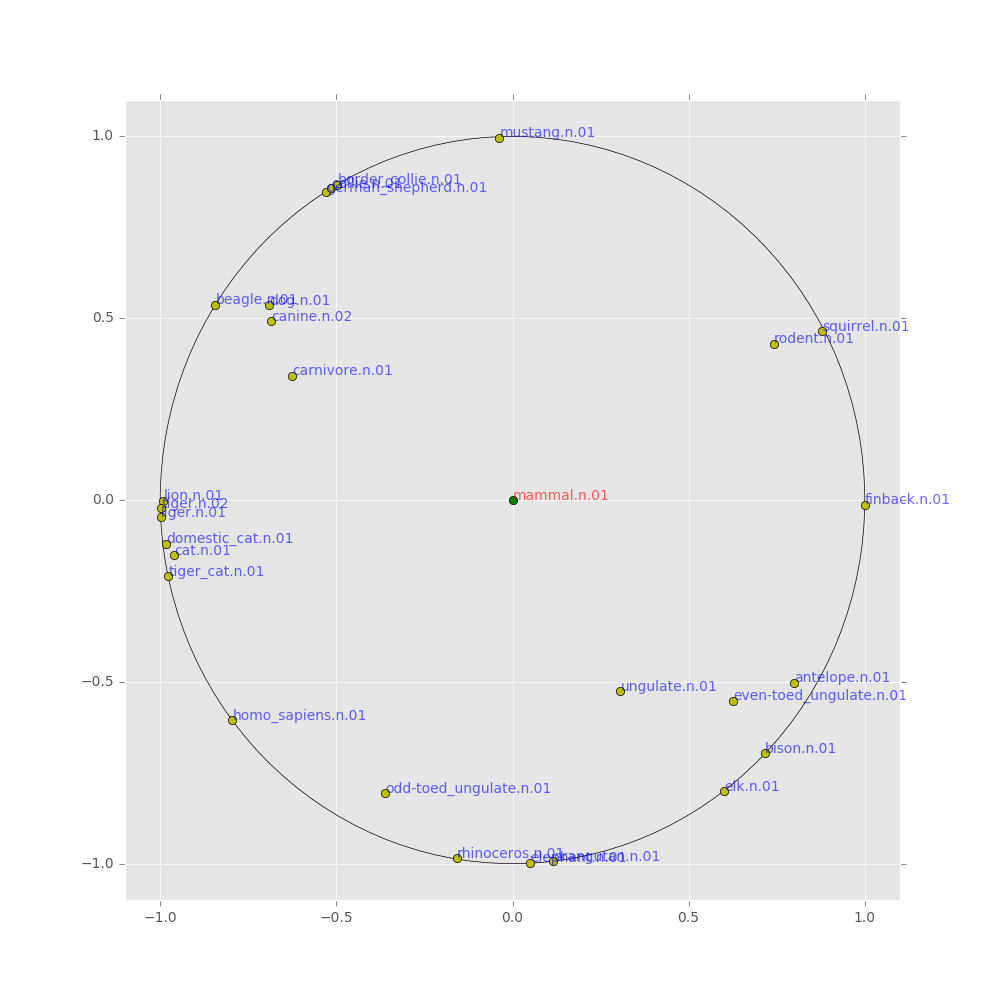

This code implements Poincaré Embedding, introduced in the following paper:

Requirements

- A C++ compiler that supports C++14 or later

- For Windows users, Cygwin is recommended (with CMake and gcc/g++ selected during installation) (thanks @patrickgitacc)

Build

cd poincare-embedding

mkdir -p work && cd work

cmake ..

make

Setup python environment

From the poincare-embedding directory:

python3 -m venv venv

source venv/bin/activate

If using Windows:

python3 -m venv venv

venv\Scripts\activate

Then run the following:

python3 -m pip install -r requirements.txt

python3 -c "import nltk; nltk.download('wordnet')"

Tutorial

The following steps assume that you are in the work directory:

cd poincare-embedding

mkdir -p work && cd work

Data Creation

You can create the WordNet noun hypernym pairs as follows:

python ../scripts/create_wordnet_noun_hierarchy.py ./wordnet_noun_hypernyms.tsv

The mammal subtree is created by:

python ../scripts/create_mammal_subtree.py ./mammal_subtree.tsv
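For orientation, the sketch below shows the kind of file these scripts are expected to produce. It assumes, and this is not confirmed by the text above, that the trainer reads one tab-separated `word<TAB>ancestor` pair per line and that the pairs come from the transitive closure of the hypernym relation. The toy hierarchy and the helper names `hypernym_closure` and `write_pairs` are hypothetical and used only for illustration; the real scripts work on WordNet via NLTK.

```python
import csv
import io

# Toy child -> direct parent hierarchy (hypothetical; stands in for WordNet).
PARENT = {
    "dog": "canine",
    "canine": "carnivore",
    "cat": "feline",
    "feline": "carnivore",
    "carnivore": "mammal",
}


def hypernym_closure(parent):
    """Return sorted (word, ancestor) pairs for every ancestor of every word."""
    pairs = set()
    for word in parent:
        ancestor = parent.get(word)
        while ancestor is not None:
            pairs.add((word, ancestor))
            ancestor = parent.get(ancestor)
    return sorted(pairs)


def write_pairs(pairs, handle):
    """Write one tab-separated pair per line (assumed input format)."""
    writer = csv.writer(handle, delimiter="\t", lineterminator="\n")
    writer.writerows(pairs)


pairs = hypernym_closure(PARENT)
buffer = io.StringIO()
write_pairs(pairs, buffer)
tsv_text = buffer.getvalue()
```

Writing with an explicit `lineterminator="\n"` avoids the carriage returns that the `csv` module emits by default, which matters if the file is later read by a tool that expects Unix line endings.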

Run

./poincare_embedding ./mammal_subtree.tsv ./embeddings.tsv -d 2 -t 8 -e 1000 -l 0.1 -L 0.0001 -n 20 -s 0
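Once training finishes, the resulting vectors can be inspected with a few lines of Python. The sketch below assumes (this is not stated above) that each line of `embeddings.tsv` holds a label followed by tab-separated coordinates; the sample rows and the helper names `parse_embeddings` and `poincare_distance` are hypothetical. The distance used is the standard Poincaré ball metric, d(u, v) = arcosh(1 + 2‖u − v‖² / ((1 − ‖u‖²)(1 − ‖v‖²))).

```python
import math


def poincare_distance(u, v):
    """Distance between two points strictly inside the unit ball."""
    sq_u = sum(x * x for x in u)
    sq_v = sum(x * x for x in v)
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.acosh(1.0 + 2.0 * sq_diff / ((1.0 - sq_u) * (1.0 - sq_v)))


def parse_embeddings(lines):
    """Parse 'label<TAB>x1<TAB>x2...' rows (assumed format) into a dict."""
    embeddings = {}
    for line in lines:
        fields = line.rstrip("\r\n").split("\t")
        if len(fields) < 2:
            continue  # skip blank or malformed rows
        embeddings[fields[0]] = [float(x) for x in fields[1:]]
    return embeddings


# Hypothetical rows; with a real file, pass open("embeddings.tsv") instead.
sample = [
    "mammal.n.01\t0.0\t0.0",
    "dog.n.01\t0.5\t0.0",
    "cat.n.01\t0.0\t0.5",
]
emb = parse_embeddings(sample)
d_root_dog = poincare_distance(emb["mammal.n.01"], emb["dog.n.01"])
d_dog_cat = poincare_distance(emb["dog.n.01"], emb["cat.n.01"])
```

In this toy layout the two leaves are farther from each other than either is from the root at the origin, which is the behaviour that makes the Poincaré ball suitable for tree-like data.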

Plot a Mammal Tree

python ../scripts/plot_mammal_subtree.py ./embeddings.tsv --center_mammal

Note: if that doesn't work, the embeddings file may contain carriage-return characters (for example, Windows line endings). Strip them with:

tr -d '\015' < embeddings.tsv > embeddings_clean.tsv

Double-check that the carriage returns are gone from embeddings_clean.tsv, then run:

mv embeddings_clean.tsv embeddings.tsv
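Where `tr` is not available (for example, in a plain Windows shell), the same cleanup can be done in Python. This is a minimal sketch rather than part of the project: the helper name `strip_carriage_returns` is hypothetical, and the demonstration writes sample rows to a temporary directory instead of touching a real embeddings file.

```python
import tempfile
from pathlib import Path


def strip_carriage_returns(src, dst):
    """Copy src to dst without '\\r' bytes; return how many were removed."""
    data = Path(src).read_bytes()
    cleaned = data.replace(b"\r", b"")
    Path(dst).write_bytes(cleaned)
    return len(data) - len(cleaned)


# Demonstration on a throwaway file with Windows line endings.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "embeddings.tsv"
    dst = Path(tmp) / "embeddings_clean.tsv"
    src.write_bytes(b"dog.n.01\t0.5\t0.0\r\ncat.n.01\t0.0\t0.5\r\n")
    removed = strip_carriage_returns(src, dst)
    cleaned = dst.read_bytes()
```

A non-zero return value confirms that the file did contain carriage returns, which replaces the manual check before overwriting the original.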
