HKUST-KnowComp / R Net

License: MIT

Tensorflow Implementation of R-Net

Stars: ✭ 582

R-Net

- A TensorFlow implementation of R-NET: Machine Reading Comprehension with Self-Matching Networks. This project is designed specifically for the SQuAD dataset.

- If you have any questions, please contact Wenxuan Zhou ([email protected]).

Requirements

Many known problems are caused by mismatched software versions. Please check your versions before opening an issue or emailing me.

General

- Python >= 3.4

- unzip, wget

Python Packages

- tensorflow-gpu >= 1.5.0

- spaCy >= 2.0.0

- tqdm

- ujson
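
Because version mismatches are the most common source of trouble, a quick check before opening an issue can help. The sketch below is not part of the repository: `parse_version`, `meets_minimum`, and `report` are hypothetical helpers, and it assumes each package exposes a `__version__` attribute (note that `tensorflow-gpu` is imported as `tensorflow`).

```python
import importlib
import re
import sys

# Minimum versions copied from the requirements list above.
# Keys are import names, not pip package names.
MINIMUMS = {"tensorflow": "1.5.0", "spacy": "2.0.0"}


def parse_version(text):
    """Turn '1.5.0' or '2.0.11rc1' into a 3-tuple of leading integers."""
    parts = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    while len(parts) < 3:  # pad so that '2.0' compares equal to '2.0.0'
        parts.append(0)
    return tuple(parts)


def meets_minimum(installed, minimum):
    """Compare versions numerically, so '1.15.0' counts as newer than '1.5.0'."""
    return parse_version(installed) >= parse_version(minimum)


def report():
    """Print one status line for Python and for each required package."""
    ok = sys.version_info >= (3, 4)
    print("python", sys.version.split()[0], "OK" if ok else "too old")
    for name, minimum in MINIMUMS.items():
        try:
            module = importlib.import_module(name)
        except ImportError:
            print(name, "not installed")
            continue
        version = getattr(module, "__version__", "0")
        status = "OK" if meets_minimum(version, minimum) else "below " + minimum
        print(name, version, status)
```

Calling `report()` prints the status of each requirement; the numeric comparison matters because a plain string comparison would rank `1.15.0` below `1.5.0`.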

Usage

To download and preprocess the data, run

```bash
# download SQuAD and GloVe
sh download.sh
# preprocess the data
python config.py --mode prepro
```

Hyperparameters are stored in `config.py`. To debug, train, or test the model, run

```bash
# --mode accepts debug, train, or test
python config.py --mode train
```

To get the official score, run

```bash
python evaluate-v1.1.py ~/data/squad/dev-v1.1.json log/answer/answer.json
```
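
For orientation, the two scores reported for SQuAD 1.1 are exact match (EM) and token-level F1. The sketch below illustrates how such scores are commonly computed; it is a simplified stand-in, not the official `evaluate-v1.1.py`, and the function names are illustrative.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, truth):
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(truth))


def f1(prediction, truth):
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize(prediction).split()
    true_tokens = normalize(truth).split()
    # Multiset intersection counts each shared token at most as often
    # as it appears on both sides.
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)


def best_over_truths(metric, prediction, truths):
    """A question may have several reference answers; keep the best score."""
    return max(metric(prediction, truth) for truth in truths)
```

Dataset-level EM and F1 are then the averages of these per-question scores, expressed as percentages.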

By default, TensorBoard log files are written to `log/event`.

See the release for a trained model.

Detailed Implementation

- The original paper uses additive attention, which consumes a lot of memory. This project instead adopts the scaled multiplicative attention presented in "Attention Is All You Need".

- This project adopts the variational dropout presented in "A Theoretically Grounded Application of Dropout in Recurrent Neural Networks".

- To mitigate the degradation problem in stacked RNNs, the outputs of all layers are concatenated to produce the final output.

- When the loss on the dev set increases over a certain period, the learning rate is halved.

- During prediction, the project adopts the search method presented in "Machine Comprehension Using Match-LSTM and Answer Pointer".

- To improve efficiency, this implementation uses bucketing (contributed by xiongyifan) and CudnnGRU. Bucketing speeds up training but lowers the F1 score by 0.3%.
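
To illustrate the first point, here is a minimal sketch of scaled multiplicative (dot-product) attention. Additive attention typically builds a hidden vector for every query-key pair, whereas the multiplicative form needs only one scalar per pair, which is where the memory saving comes from. The code is plain Python for readability and is not the project's TensorFlow implementation; the function names are assumptions, and the learned projections and padding masks a real model needs are omitted.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_attention(queries, keys, values):
    """Attend from each query over all keys.

    queries: list of vectors of size d
    keys:    list of vectors of size d
    values:  list of vectors, one per key
    Returns (outputs, weights), one row per query.
    """
    d = len(keys[0])
    scale = math.sqrt(d)  # keeps dot products from growing with d
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        w = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time.
        out = [sum(wj * v[c] for wj, v in zip(w, values))
               for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights
```

In a batched tensor implementation the two inner loops collapse into a single matrix product followed by a softmax.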

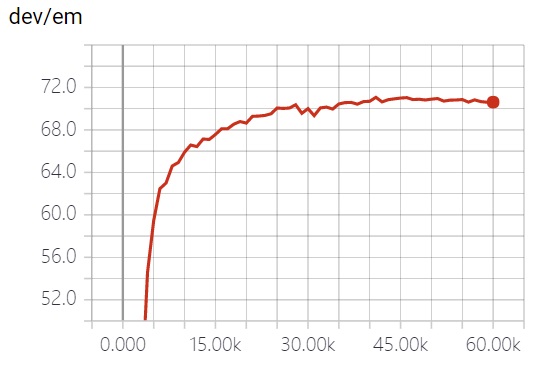

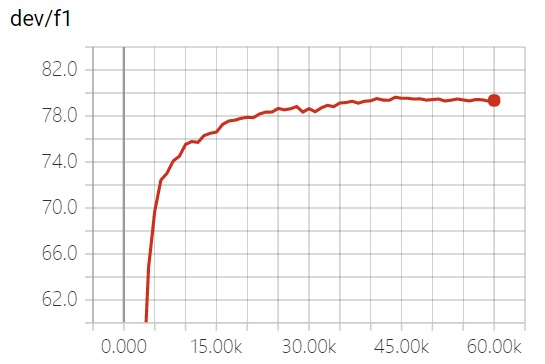
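
The search used at prediction time, mentioned in the implementation notes above, can be sketched as follows. Picking the most likely start and end positions independently can yield an end before the start, so the search instead scores every valid span. This is an illustrative version rather than the project's code; `best_span` and the `max_len` cap are assumptions.

```python
def best_span(start_probs, end_probs, max_len):
    """Return (start, end, score) maximizing start_probs[i] * end_probs[j]
    over spans with i <= j and at most max_len tokens."""
    best = (0, 0, -1.0)
    for i, p_start in enumerate(start_probs):
        # Only consider end positions at or after the start, within max_len.
        for j in range(i, min(i + max_len, len(end_probs))):
            score = p_start * end_probs[j]
            if score > best[2]:
                best = (i, j, score)
    return best


# The independent argmaxes here would be start=1, end=0, which is not a span.
start = [0.1, 0.6, 0.3]
end = [0.7, 0.1, 0.2]
span = best_span(start, end, max_len=2)
```

With these toy distributions the search settles on the span from token 1 to token 2, since no span may end before it starts.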

Performance

Score

| | EM | F1 |
|---|---|---|
| original paper | 71.1 | 79.5 |
| this project | 71.07 | 79.51 |

Training Time (s/it)

| | Native | Native + Bucket | Cudnn | Cudnn + Bucket |
|---|---|---|---|---|
| E5-2640 | 6.21 | 3.56 | - | - |
| TITAN X | 2.56 | 1.31 | 0.41 | 0.28 |
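
The "Bucket" columns refer to grouping examples of similar length into the same batch, so that less computation is spent on padding. The sketch below shows the general idea only; it is not the project's input pipeline, and `bucket_batches`, `padding_cost`, and the `boundaries` parameter are hypothetical.

```python
import random


def bucket_batches(lengths, boundaries, batch_size, seed=0):
    """Group example indices into batches of similar length.

    lengths:    sequence length of each example
    boundaries: length thresholds separating the buckets
    """
    buckets = [[] for _ in range(len(boundaries) + 1)]
    for idx, n in enumerate(lengths):
        # Count how many boundaries the length exceeds;
        # that count is the bucket index.
        b = sum(n > edge for edge in boundaries)
        buckets[b].append(idx)
    rng = random.Random(seed)
    batches = []
    for bucket in buckets:
        rng.shuffle(bucket)
        for i in range(0, len(bucket), batch_size):
            batches.append(bucket[i:i + batch_size])
    rng.shuffle(batches)  # avoid always training on short batches first
    return batches


def padding_cost(lengths, batches):
    """Total number of padding tokens when each batch is padded to its longest item."""
    return sum(
        max(lengths[i] for i in batch) * len(batch) - sum(lengths[i] for i in batch)
        for batch in batches
    )
```

Batches drawn this way are no longer uniformly random over the dataset, which is one plausible reason for a small drop in F1.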

Extensions

These settings may increase the score but are not used by default. You can turn them on in `config.py`.

- Pretrained GloVe character embeddings. Contributed by yanghanxy.

- fastText embeddings. Contributed by xiongyifan. May increase F1 by 1% (reported by xiongyifan).
