Ha0Tang / Attentiongan

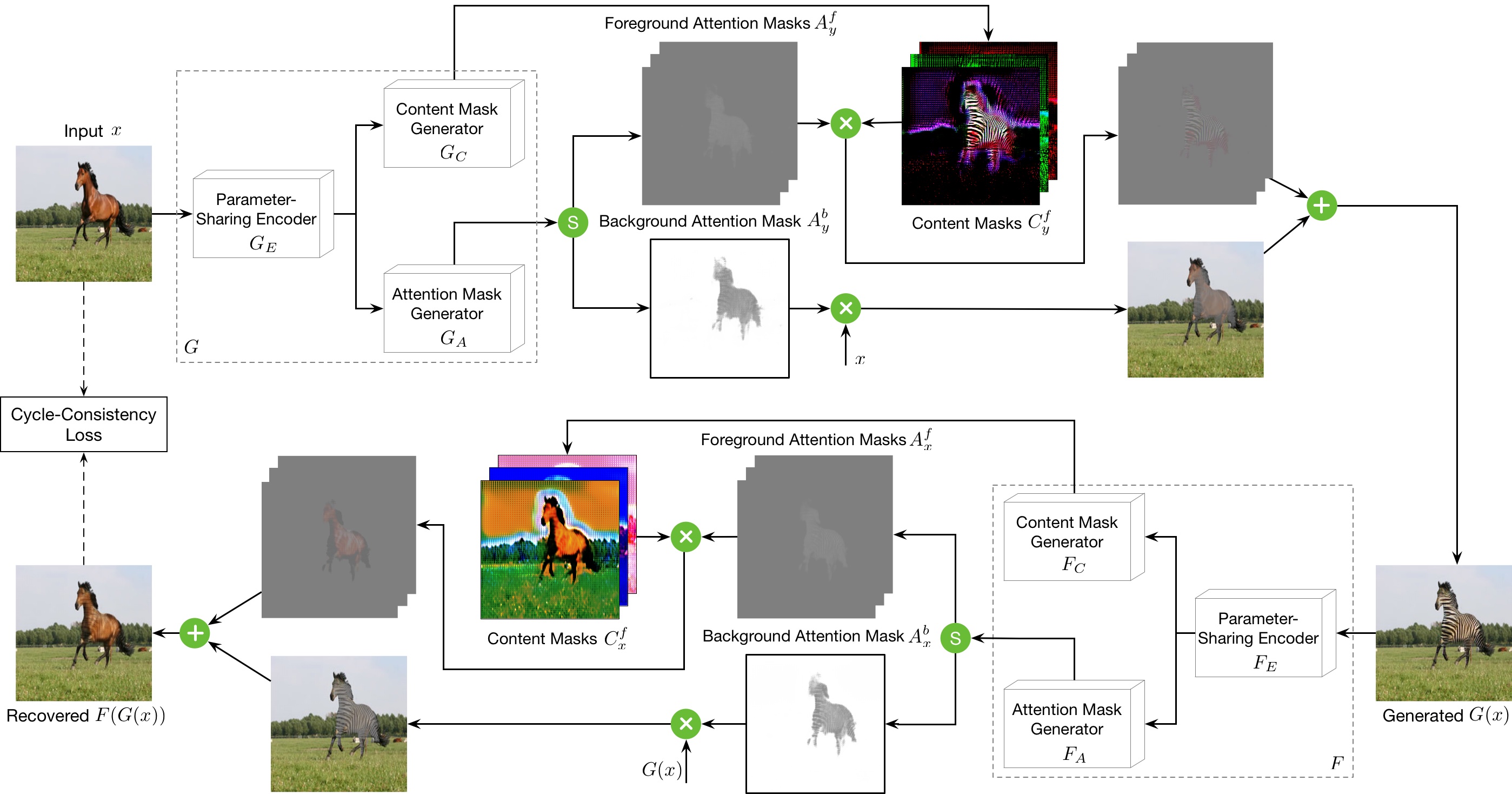

AttentionGAN-v2 for Unpaired Image-to-Image Translation

AttentionGAN-v2 Framework

The proposed generator learns both foreground and background attention masks. It uses the foreground attention to select the foreground regions from the generated output, and the background attention to preserve the background information of the input image. Please refer to our papers for more details.
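As a rough illustration of the composition described above, the sketch below blends a single pixel from generated content and the input image. It is not the repository's code: the function name, the softmax normalization across masks, and the number of content values are assumptions made for illustration, and a real implementation would operate on whole image tensors. See the papers for the actual formulation.

```python
import math


def compose_pixel(input_px, content_pxs, fg_logits, bg_logit):
    """Blend one pixel from generated content and the input image.

    input_px    : pixel value taken from the input image
    content_pxs : generated content values, one per foreground mask
    fg_logits   : unnormalized foreground attention scores (same length)
    bg_logit    : unnormalized background attention score
    """
    if len(content_pxs) != len(fg_logits):
        raise ValueError("need one foreground attention score per content value")
    logits = list(fg_logits) + [bg_logit]
    # Softmax across all masks so the attention weights at this pixel sum to 1
    # (an assumption of this sketch).
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    weights = [e / total for e in exps]
    fg_weights, bg_weight = weights[:-1], weights[-1]
    # Foreground attention selects from the generated content ...
    foreground = sum(w * c for w, c in zip(fg_weights, content_pxs))
    # ... while background attention keeps the input pixel.
    background = bg_weight * input_px
    return foreground + background
```

When the background score dominates, the output stays close to the input pixel; when a foreground score dominates, the output follows the corresponding generated content value.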

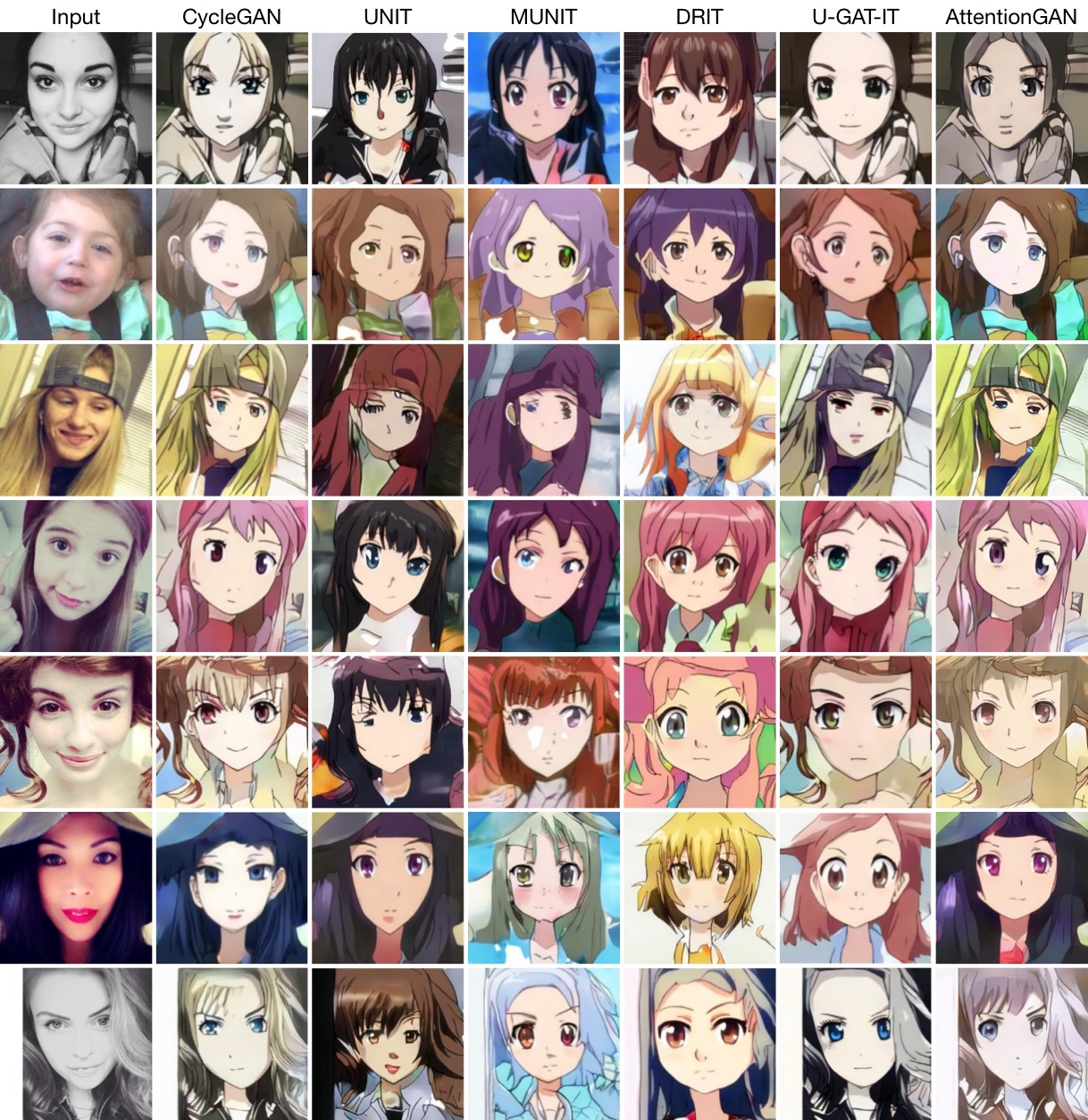

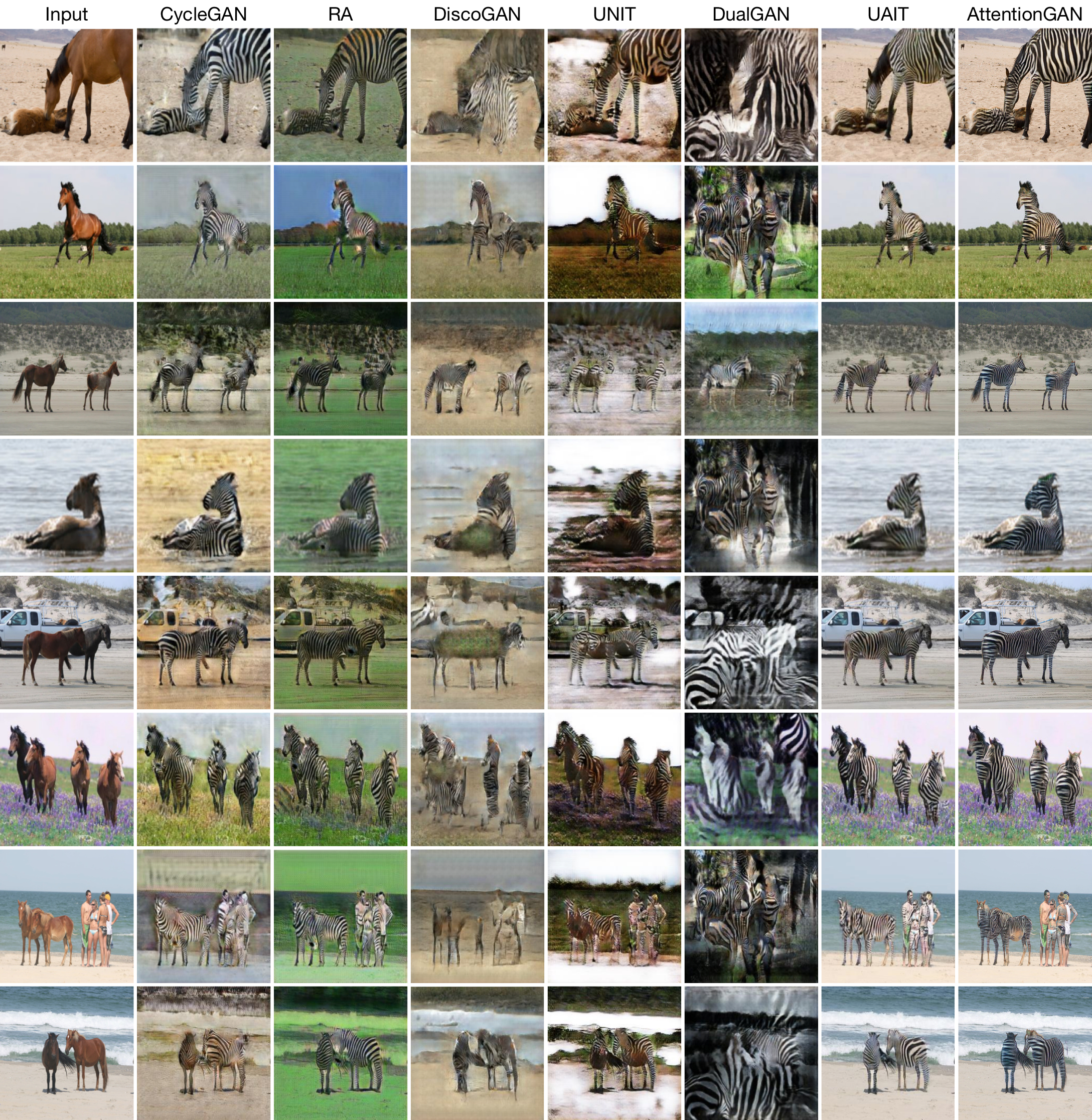

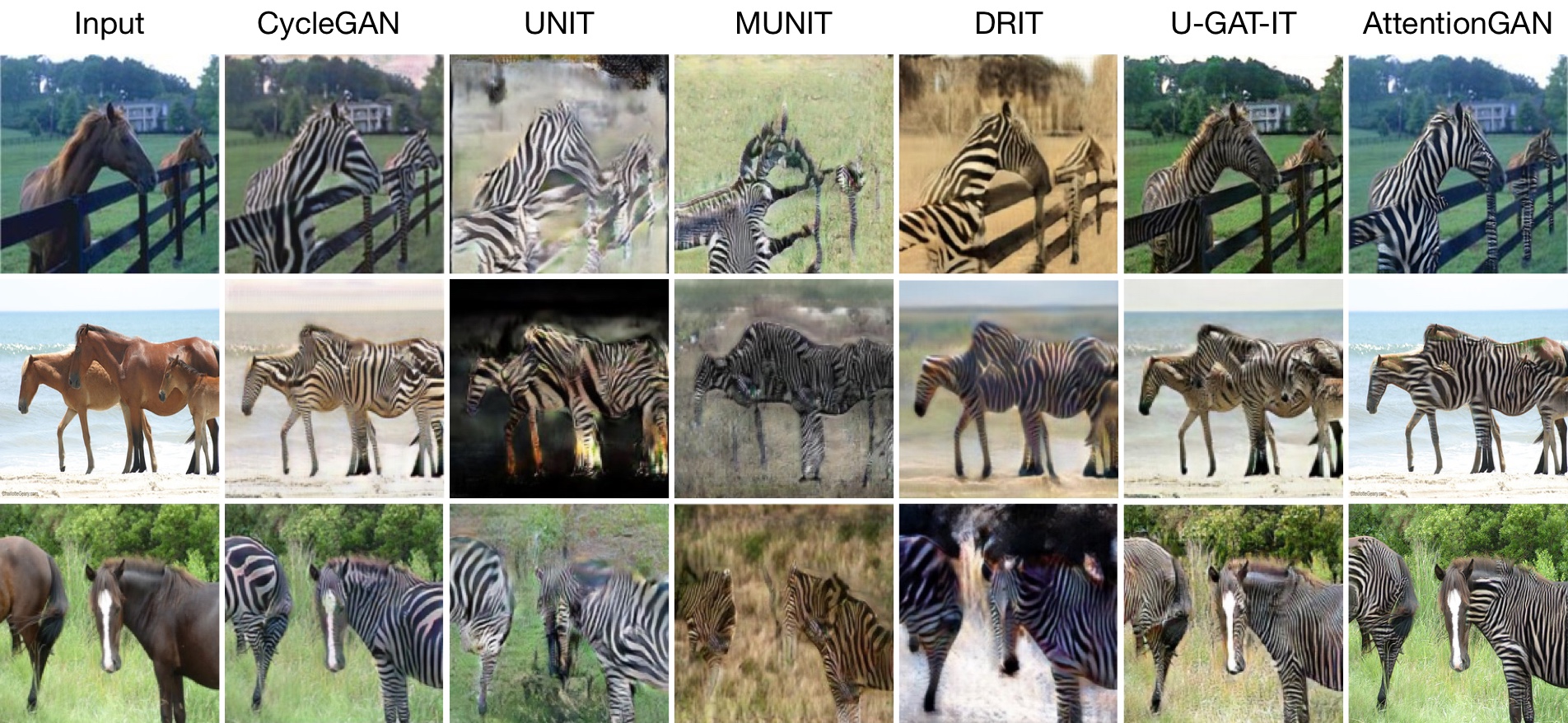

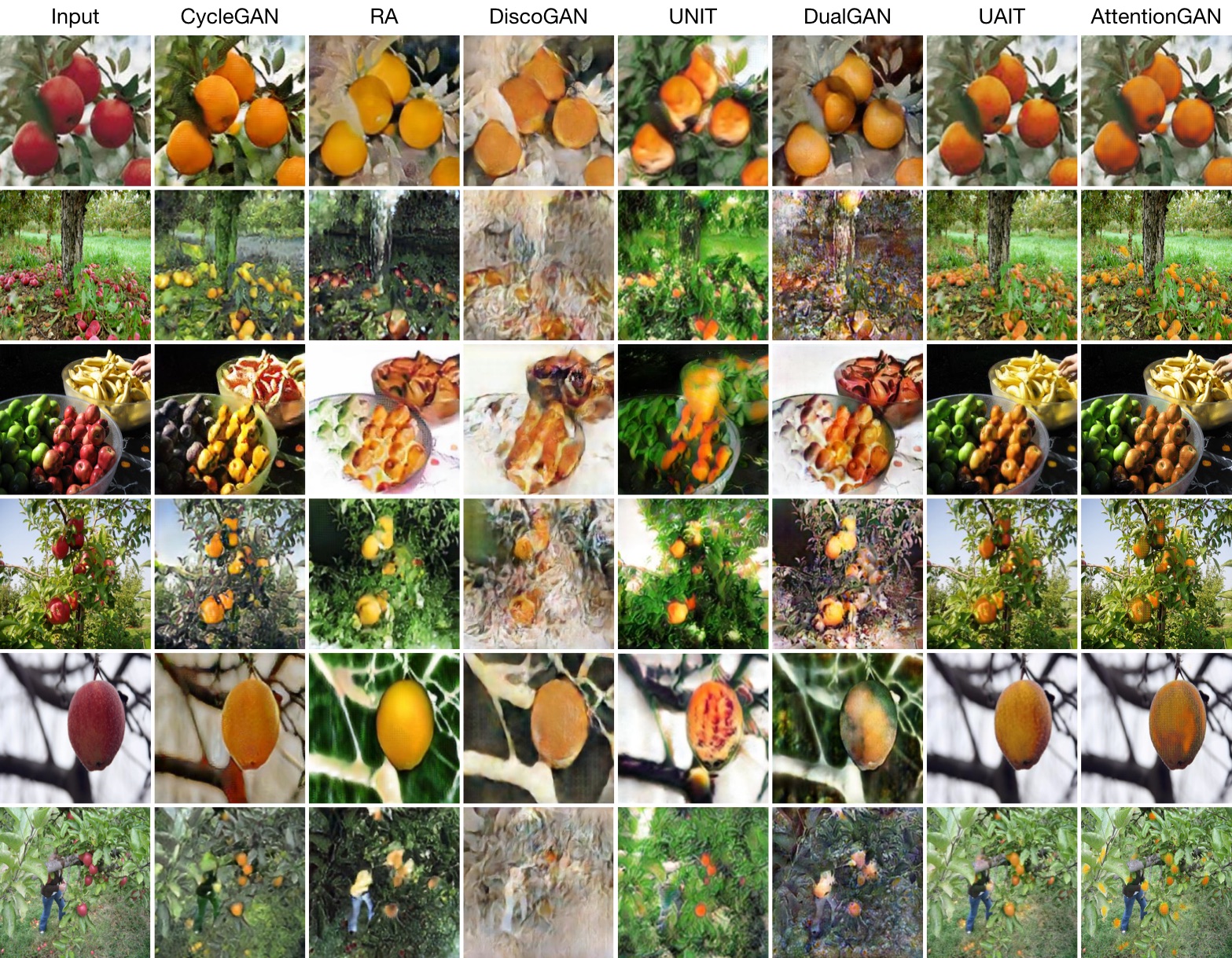

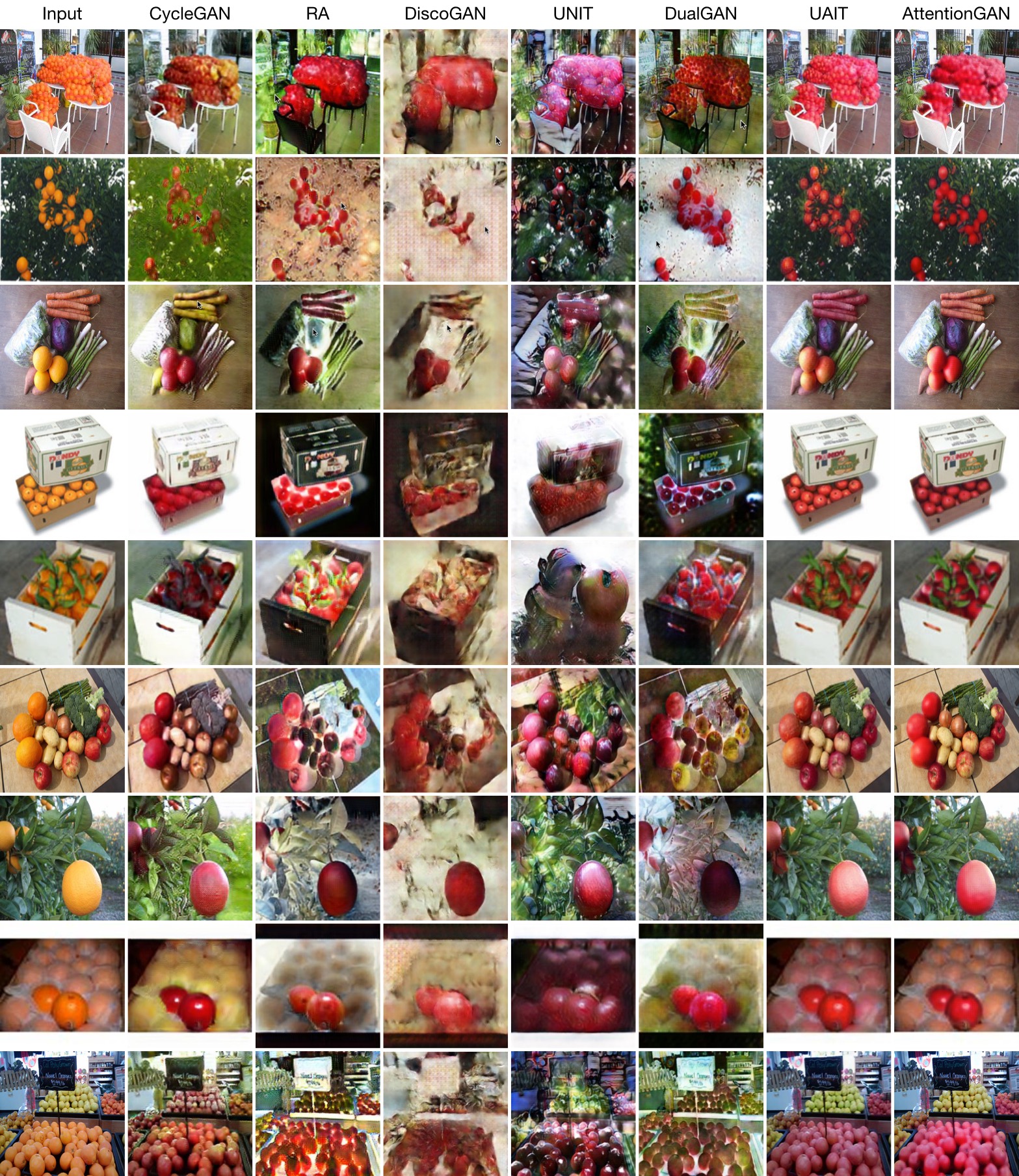

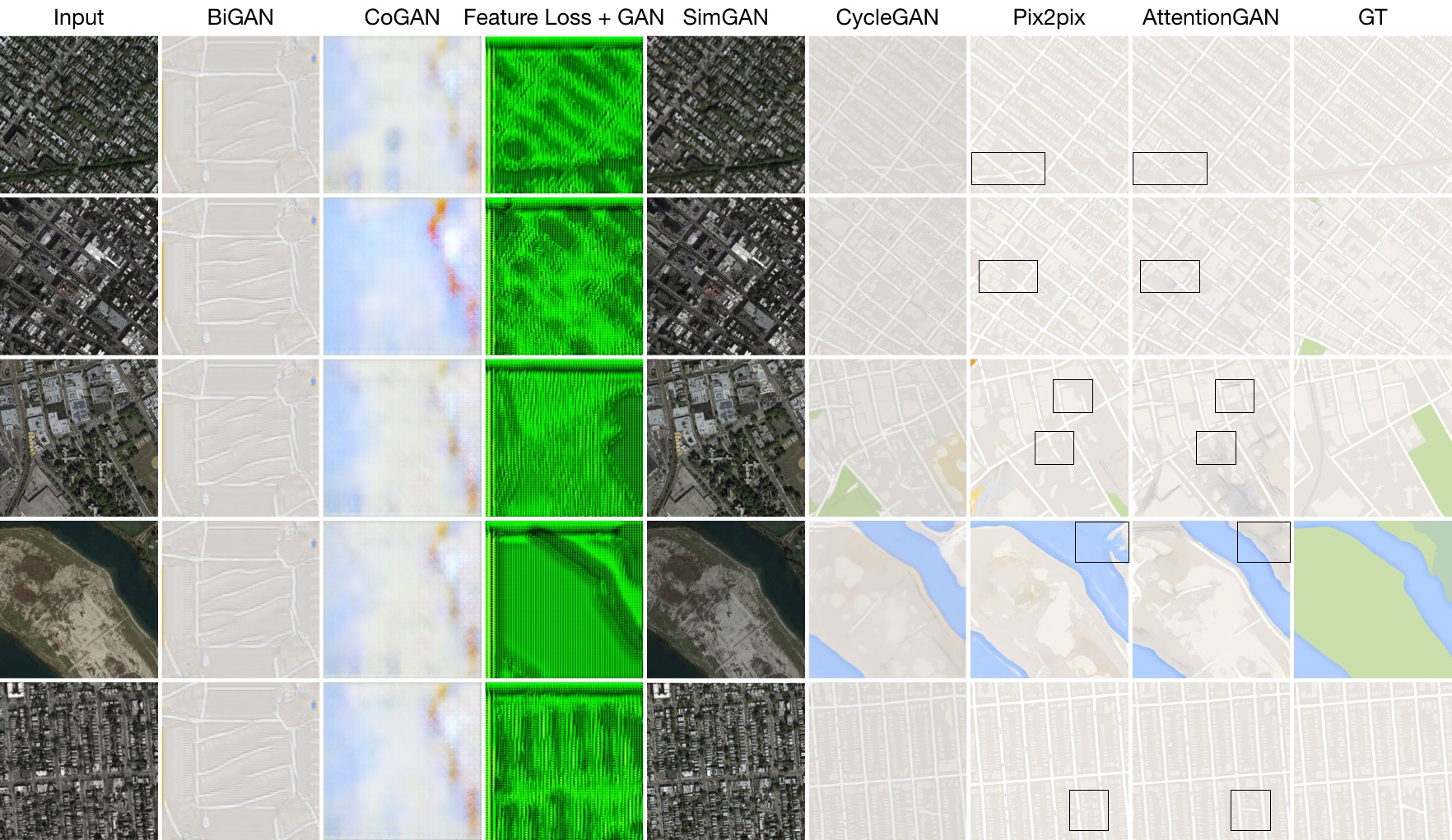

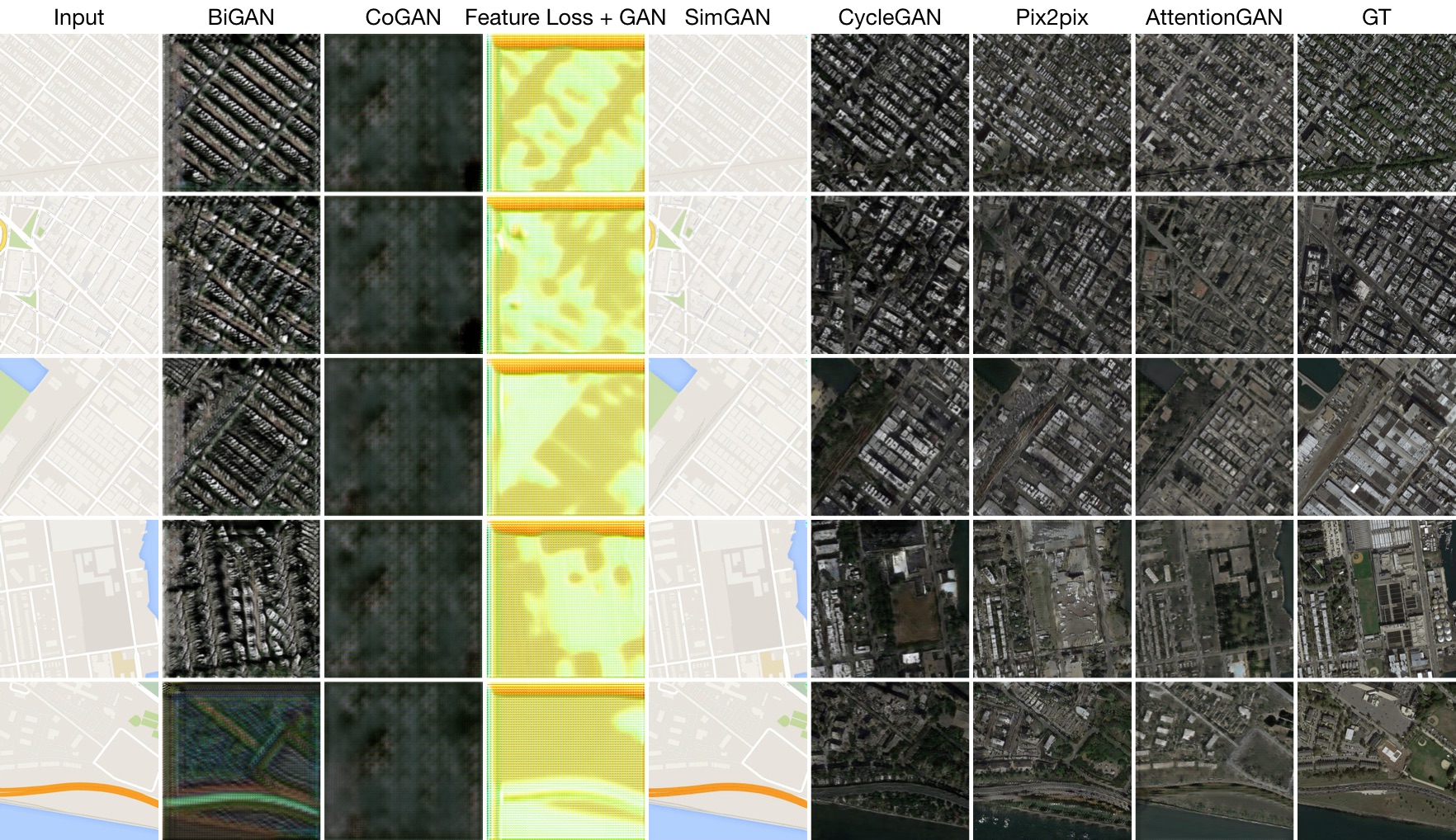

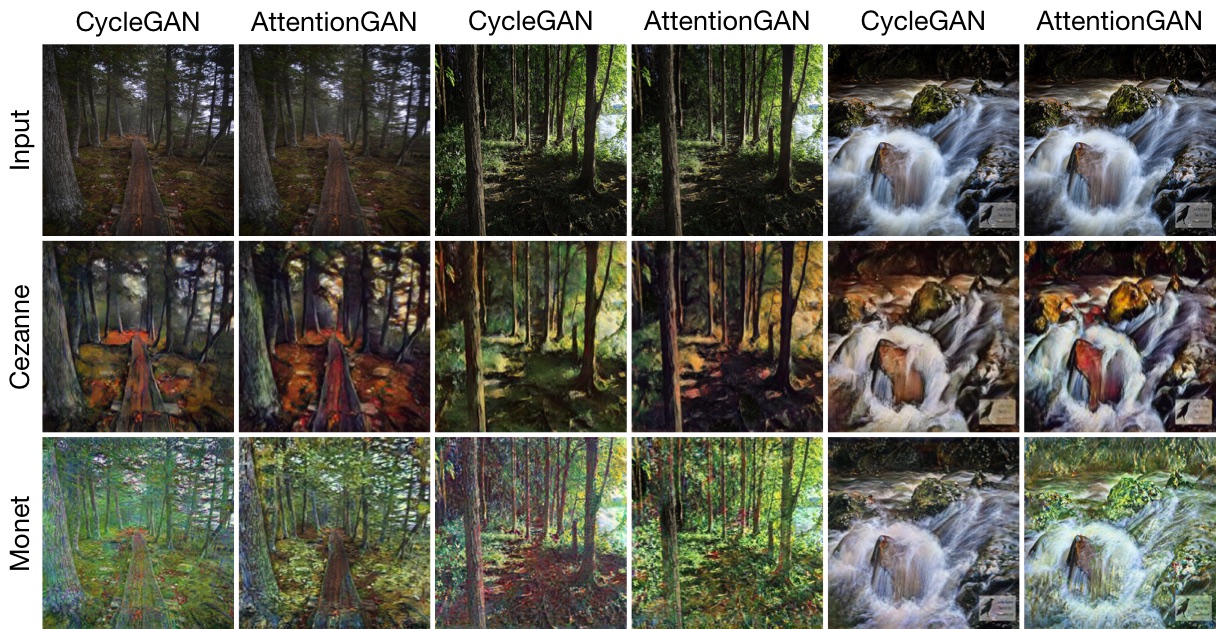

Comparison with State-of-the-Art Methods

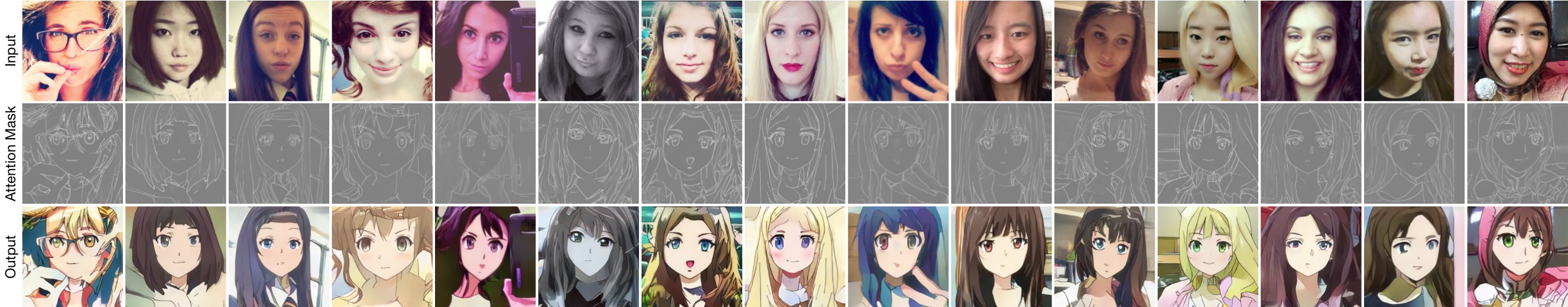

Selfie to Anime Translation

Horse to Zebra Translation

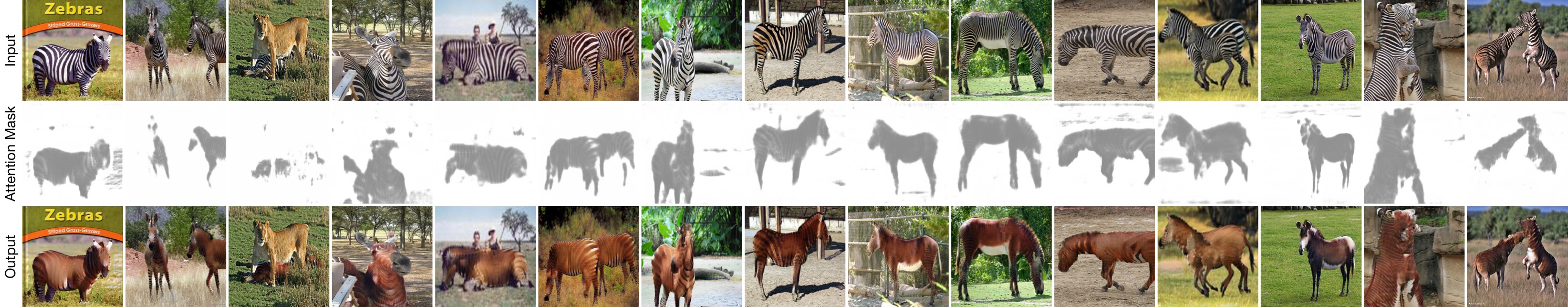

Zebra to Horse Translation

Apple to Orange Translation

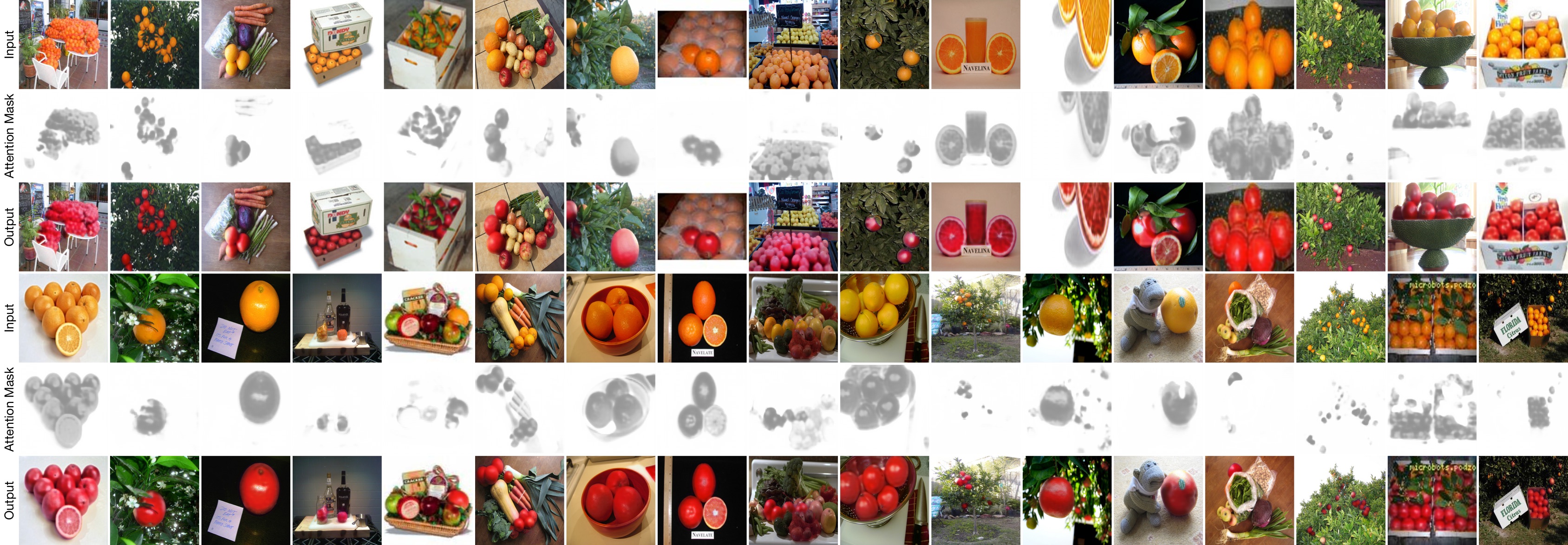

Orange to Apple Translation

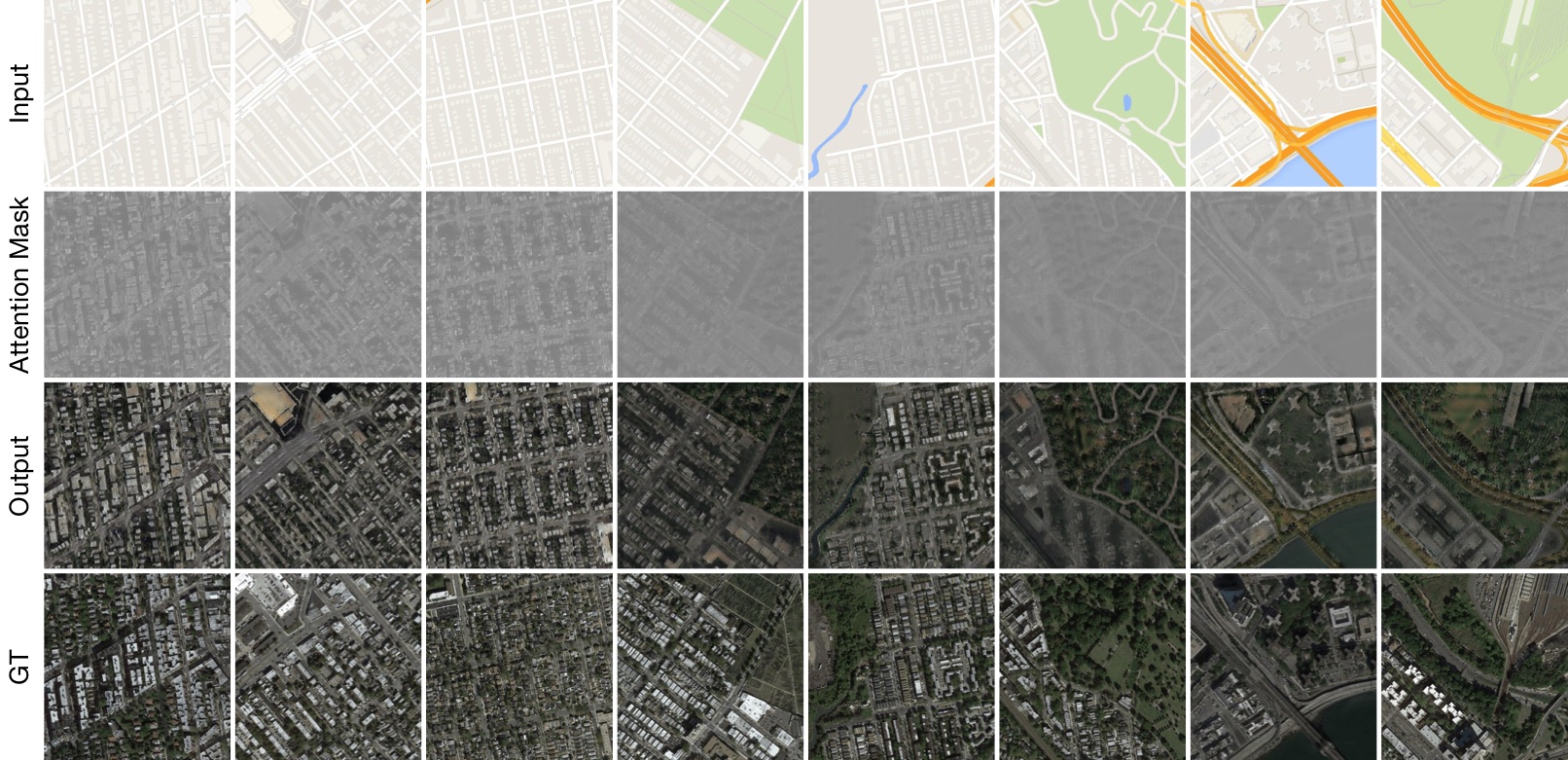

Map to Aerial Photo Translation

Aerial Photo to Map Translation

Style Transfer

Visualization of Learned Attention Masks

Selfie to Anime Translation

Horse to Zebra Translation

Zebra to Horse Translation

Apple to Orange Translation

Orange to Apple Translation

Map to Aerial Photo Translation

Aerial Photo to Map Translation

Extended Paper | Conference Paper

AttentionGAN: Unpaired Image-to-Image Translation using Attention-Guided Generative Adversarial Networks.

Hao Tang¹, Hong Liu², Dan Xu³, Philip H.S. Torr³ and Nicu Sebe¹.

¹University of Trento, Italy, ²Peking University, China, ³University of Oxford, UK.

The repository offers the official implementation of our paper in PyTorch.

Are you looking for AttentionGAN-v1 for Unpaired Image-to-Image Translation?

Are you looking for AttentionGAN-v1 for Multi-Domain Image-to-Image Translation?

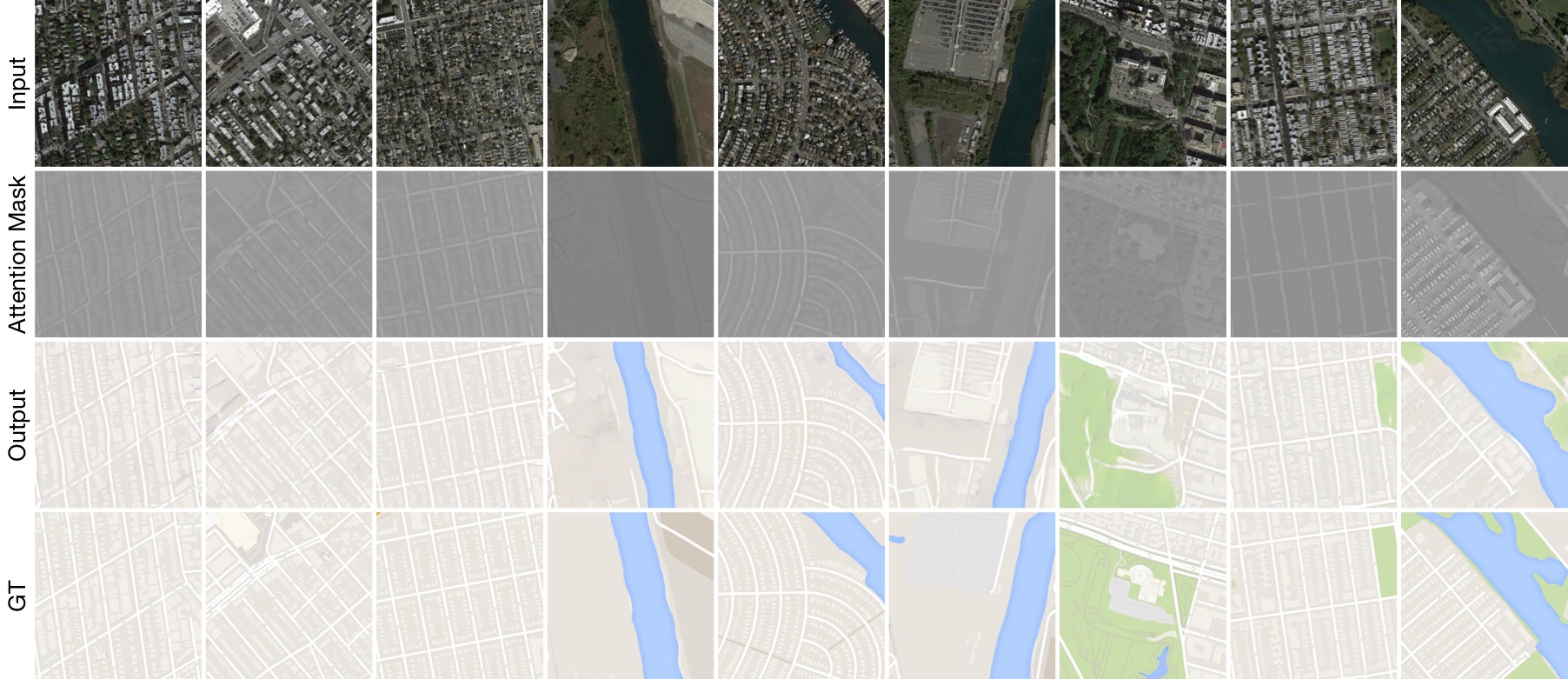

Facial Expression-to-Expression Translation

Order: The Learned Attention Masks, The Learned Content Masks, Final Results

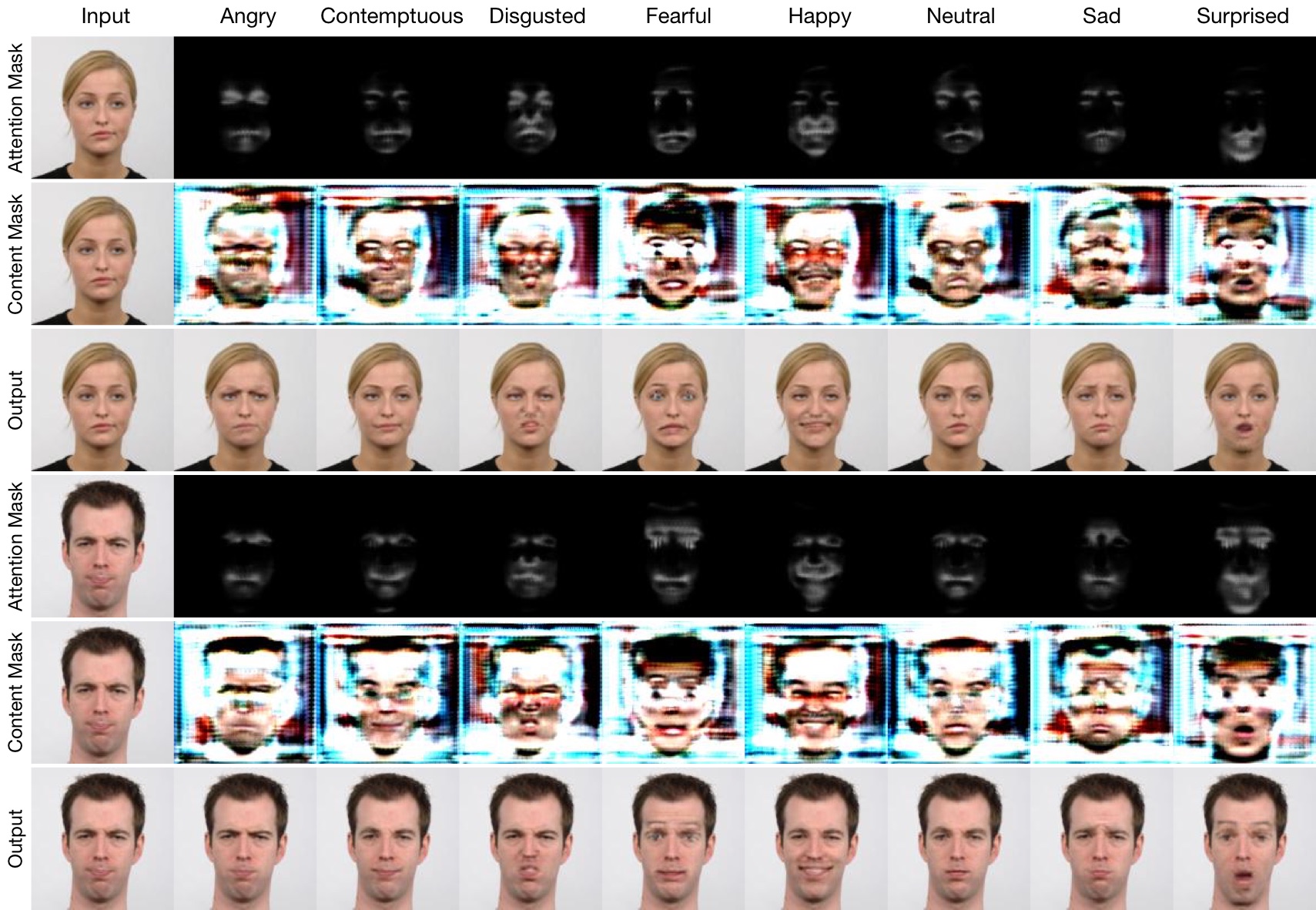

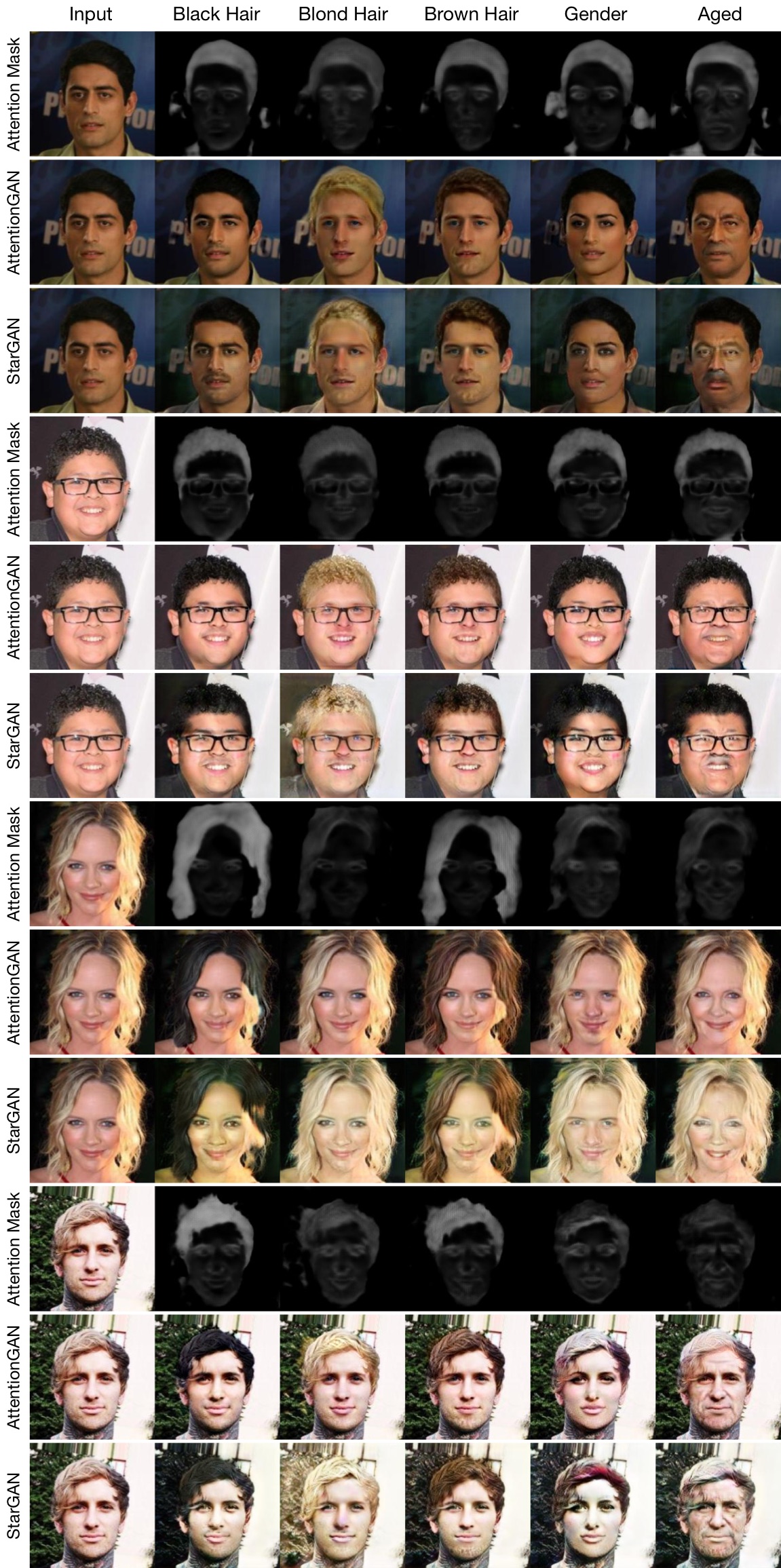

Facial Attribute Transfer

Order: The Learned Attention Masks, The Learned Content Masks, Final Results

Order: The Learned Attention Masks, AttentionGAN, StarGAN

License

Copyright (C) 2019 University of Trento, Italy.

All rights reserved. Licensed under the CC BY-NC-SA 4.0 (Attribution-NonCommercial-ShareAlike 4.0 International)

The code is released for academic research use only. For commercial use, please contact [email protected].

Installation

Clone this repo.

git clone https://github.com/Ha0Tang/AttentionGAN

cd AttentionGAN/

This code requires PyTorch 0.4.1+ and Python 3.6.9+. Install the dependencies with

pip install -r requirements.txt (for pip users)

or

./scripts/conda_deps.sh (for Conda users)

To reproduce the results reported in the paper, you need an NVIDIA Tesla V100 with 16 GB of memory.

Dataset Preparation

Download the datasets using the following script. Please cite their paper if you use the data. Try twice if it fails the first time!

sh ./datasets/download_cyclegan_dataset.sh dataset_name

The selfie2anime dataset can be downloaded here.
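Before training, it can help to confirm that a download produced the folder layout the unaligned dataset mode reads. The sketch below assumes the CycleGAN-style layout (trainA, trainB, testA and testB under the dataset root). The helper name and the extension list are hypothetical and not part of this repository.

```python
import os

# Assumed CycleGAN-style split folders under the dataset root.
EXPECTED_SPLITS = ("trainA", "trainB", "testA", "testB")
# Assumed image extensions; extend as needed.
IMAGE_EXTS = (".jpg", ".jpeg", ".png")


def count_images(dataroot):
    """Return {split: image count}; a missing split folder maps to None."""
    counts = {}
    for split in EXPECTED_SPLITS:
        folder = os.path.join(dataroot, split)
        if not os.path.isdir(folder):
            counts[split] = None
            continue
        counts[split] = sum(
            1 for name in os.listdir(folder)
            if name.lower().endswith(IMAGE_EXTS)
        )
    return counts
```

For example, count_images("./datasets/horse2zebra") returns one entry per split, with None marking a folder that was not found.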

AttentionGAN Training/Testing

- Download a dataset using the previous script (e.g., horse2zebra).

- To view training results and loss plots, run python -m visdom.server and open http://localhost:8097.

- Train a model:

sh ./scripts/train_attentiongan.sh

- To see more intermediate results, check out ./checkpoints/horse2zebra_attentiongan/web/index.html.

- To continue training, append --continue_train --epoch_count xxx on the command line.

- Test the model:

sh ./scripts/test_attentiongan.sh

- The test results will be saved to an HTML file here: ./results/horse2zebra_attentiongan/latest_test/index.html.
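When resuming training, the value passed to --epoch_count should match the epoch to continue from. The sketch below guesses that value by scanning a checkpoint folder. It assumes numbered checkpoints are saved as <epoch>_net_<network>.pth next to the latest_net_*.pth files, as in CycleGAN-style code; that naming scheme and the helper name are assumptions, so check the files in your own checkpoint folder first.

```python
import os
import re


def next_epoch_count(checkpoint_dir):
    """Return a candidate value for --epoch_count, or 1 if no numbered checkpoint exists."""
    # Assumed naming scheme: "<epoch>_net_<network>.pth", e.g. "20_net_G.pth".
    pattern = re.compile(r"^(\d+)_net_.+\.pth$")
    epochs = []
    for name in os.listdir(checkpoint_dir):
        match = pattern.match(name)
        if match:
            epochs.append(int(match.group(1)))
    # Continue from the epoch after the last numbered checkpoint.
    return max(epochs) + 1 if epochs else 1
```

Files such as latest_net_G.pth carry no epoch number and are ignored by the pattern.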

Generating Images Using Pretrained Model

- Download a pretrained model (e.g., horse2zebra) with the following script:

sh ./scripts/download_attentiongan_model.sh horse2zebra

- The pretrained model is saved at ./checkpoints/{name}_pretrained/latest_net_G.pth.

- Then generate the results using

python test.py --dataroot ./datasets/horse2zebra --name horse2zebra_pretrained --model attention_gan --dataset_mode unaligned --norm instance --phase test --no_dropout --load_size 256 --crop_size 256 --batch_size 1 --gpu_ids 0 --num_test 5000 --epoch latest --saveDisk

The results will be saved at ./results/. Use --results_dir {directory_path_to_save_result} to specify the results directory. Note that if you want to save the intermediate results and have enough disk space, remove --saveDisk on the command line.

- For your own experiments, you might want to specify --netG, --norm, --no_dropout to match the generator architecture of the trained model.
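For scripted runs, the test command above can be assembled programmatically. The sketch below only rearranges flags that appear in this README; the function name and its parameters are illustrative and not part of the repository.

```python
def build_test_command(dataroot, name, results_dir=None, save_disk=True):
    """Return the test.py invocation as an argument list, e.g. for subprocess.run."""
    cmd = [
        "python", "test.py",
        "--dataroot", dataroot,
        "--name", name,
        "--model", "attention_gan",
        "--dataset_mode", "unaligned",
        "--norm", "instance",
        "--phase", "test",
        "--no_dropout",
        "--load_size", "256",
        "--crop_size", "256",
        "--batch_size", "1",
        "--gpu_ids", "0",
        "--num_test", "5000",
        "--epoch", "latest",
    ]
    if results_dir is not None:
        # Override the default ./results/ output location.
        cmd += ["--results_dir", results_dir]
    if save_disk:
        # Keep the flag to skip saving intermediate results.
        cmd.append("--saveDisk")
    return cmd
```

From the repository root, the list can be passed to subprocess.run(build_test_command("./datasets/horse2zebra", "horse2zebra_pretrained"), check=True).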

Image Translation with Geometric Changes Between Source and Target Domains

For example, to run Selfie to Anime translation experiments, replace attention_gan_model.py and networks with the ones in the AttentionGAN-geo folder.

Test the Pretrained Model

Download the data and the pretrained model following the instructions above.

python test.py --dataroot ./datasets/selfie2anime/ --name selfie2anime_pretrained --model attention_gan --dataset_mode unaligned --norm instance --phase test --no_dropout --load_size 256 --crop_size 256 --batch_size 1 --gpu_ids 0 --num_test 5000 --epoch latest

Train a New Model

python train.py --dataroot ./datasets/selfie2anime/ --name selfie2anime_attentiongan --model attention_gan --dataset_mode unaligned --pool_size 50 --no_dropout --norm instance --lambda_A 10 --lambda_B 10 --lambda_identity 0.5 --load_size 286 --crop_size 256 --batch_size 4 --niter 100 --niter_decay 100 --gpu_ids 0 --display_id 0 --display_freq 100 --print_freq 100
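In CycleGAN-style training code, the --niter 100 --niter_decay 100 pair above is commonly read as 100 epochs at the initial learning rate followed by 100 epochs of linear decay toward zero. The sketch below illustrates that schedule under this assumption. The function name is hypothetical, and the exact rule used by this repository should be checked in its options and scheduler code.

```python
def lr_multiplier(epoch, niter=100, niter_decay=100, epoch_count=1):
    """Factor applied to the initial learning rate at a 0-based epoch index.

    Assumes a CycleGAN-style linear policy: the factor stays at 1.0 for the
    first `niter` epochs, then decreases linearly over `niter_decay` epochs.
    """
    return 1.0 - max(0, epoch + epoch_count - niter) / float(niter_decay + 1)
```

Under these defaults the factor is 1.0 through epoch index 99 and reaches 0.0 at epoch index 200.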

Test the Trained Model

python test.py --dataroot ./datasets/selfie2anime/ --name selfie2anime_attentiongan --model attention_gan --dataset_mode unaligned --norm instance --phase test --no_dropout --load_size 256 --crop_size 256 --batch_size 1 --gpu_ids 0 --num_test 5000 --epoch latest

Evaluation Code

- FID: Official Implementation

- KID or Here: Suggested by UGATIT.

Install Steps:

conda create -n python36 python=3.6 anaconda

pip install --ignore-installed --upgrade tensorflow==1.13.1

If you encounter the issue AttributeError: module 'scipy.misc' has no attribute 'imread', run

pip install scipy==1.1.0

Citation

If you use this code for your research, please cite our papers.

@article{tang2019attentiongan,

title={AttentionGAN: Unpaired Image-to-Image Translation using Attention-Guided Generative Adversarial Networks},

author={Tang, Hao and Liu, Hong and Xu, Dan and Torr, Philip HS and Sebe, Nicu},

journal={arXiv preprint arXiv:1911.11897},

year={2019}

}

@inproceedings{tang2019attention,

title={Attention-Guided Generative Adversarial Networks for Unsupervised Image-to-Image Translation},

author={Tang, Hao and Xu, Dan and Sebe, Nicu and Yan, Yan},

booktitle={International Joint Conference on Neural Networks (IJCNN)},

year={2019}

}

Acknowledgments

This source code is inspired by CycleGAN, GestureGAN, and SelectionGAN.

Contributions

If you have any questions, comments, or bug reports, feel free to open a GitHub issue or a pull request, or e-mail the author Hao Tang ([email protected]).