seungwonpark / Melgan

Licence: bsd-3-clause

MelGAN vocoder (compatible with NVIDIA/tacotron2)

Stars: ✭ 444

Programming Languages

python


Projects that are alternatives of or similar to Melgan

Hifi Gan

HiFi-GAN: Generative Adversarial Networks for Efficient and High Fidelity Speech Synthesis

Stars: ✭ 325 (-26.8%)

Mutual labels: gan, tts

Zerospeech Tts Without T

A Pytorch implementation for the ZeroSpeech 2019 challenge.

Stars: ✭ 100 (-77.48%)

Mutual labels: gan, tts

Pro gan pytorch

ProGAN package implemented as an extension of PyTorch nn.Module

Stars: ✭ 425 (-4.28%)

Mutual labels: gan

Simgan Captcha

Solve captcha without manually labeling a training set

Stars: ✭ 405 (-8.78%)

Mutual labels: gan

Gan Timeline

A timeline showing the development of Generative Adversarial Networks (GAN).

Stars: ✭ 379 (-14.64%)

Mutual labels: gan

Tensorflow Tutorial

TensorFlow tutorial from basic to hard; 莫烦Python (Mofan Python) Chinese-language AI tutorials

Stars: ✭ 4,122 (+828.38%)

Mutual labels: gan

Cboard

AAC communication system with text-to-speech for the browser

Stars: ✭ 437 (-1.58%)

Mutual labels: tts

Pytorch Rl

This repository contains model-free deep reinforcement learning algorithms implemented in Pytorch

Stars: ✭ 394 (-11.26%)

Mutual labels: gan

Deep Learning Resources

Deep learning resources, from basic to advanced. Collection of deep learning materials for everyone

Stars: ✭ 422 (-4.95%)

Mutual labels: gan

Igan

Interactive Image Generation via Generative Adversarial Networks

Stars: ✭ 3,845 (+765.99%)

Mutual labels: gan

Tensorflow Generative Model Collections

Collection of generative models in Tensorflow

Stars: ✭ 3,785 (+752.48%)

Mutual labels: gan

Transformer Tts

A Pytorch Implementation of "Neural Speech Synthesis with Transformer Network"

Stars: ✭ 418 (-5.86%)

Mutual labels: tts

Generative Compression

TensorFlow Implementation of Generative Adversarial Networks for Extreme Learned Image Compression

Stars: ✭ 428 (-3.6%)

Mutual labels: gan

Anycost Gan

[CVPR 2021] Anycost GANs for Interactive Image Synthesis and Editing

Stars: ✭ 367 (-17.34%)

Mutual labels: gan

Sean

SEAN: Image Synthesis with Semantic Region-Adaptive Normalization (CVPR 2020, Oral)

Stars: ✭ 387 (-12.84%)

Mutual labels: gan

Simgan

Implementation of Apple's Learning from Simulated and Unsupervised Images through Adversarial Training

Stars: ✭ 406 (-8.56%)

Mutual labels: gan

Generative Models

Annotated, understandable, and visually interpretable PyTorch implementations of: VAE, BIRVAE, NSGAN, MMGAN, WGAN, WGANGP, LSGAN, DRAGAN, BEGAN, RaGAN, InfoGAN, fGAN, FisherGAN

Stars: ✭ 438 (-1.35%)

Mutual labels: gan

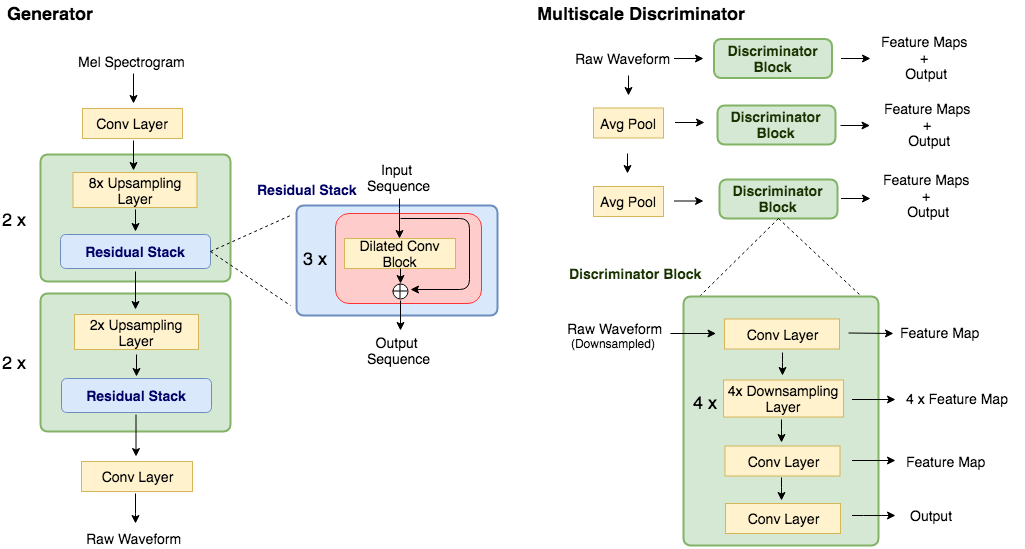

MelGAN

Unofficial PyTorch implementation of MelGAN vocoder

Key Features

- MelGAN is lighter, faster, and better at generalizing to unseen speakers than WaveGlow.

- This repository uses the same mel-spectrogram function as NVIDIA/tacotron2, so it can directly convert the output of NVIDIA's tacotron2 into raw audio.

- A model pretrained on LJSpeech-1.1 is available via PyTorch Hub.

Prerequisites

Tested on Python 3.6

pip install -r requirements.txt

Prepare Dataset

- Download a dataset for training. Any set of wav files with a sample rate of 22050 Hz will work (e.g. LJSpeech was used in the paper).

- Preprocess: `python preprocess.py -c config/default.yaml -d [data's root path]`

- Edit the configuration `yaml` file.
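Because the dataset is expected to be sampled at 22050 Hz, it can be worth checking the files before preprocessing. The following is a minimal sketch, not part of this repository: the function name `find_mismatched_wavs` is hypothetical, and it assumes the files are plain PCM wav files that Python's standard `wave` module can open (other encodings raise `wave.Error`).

```python
import wave
from pathlib import Path

# Sample rate stated in the dataset requirements above.
EXPECTED_SAMPLE_RATE = 22050


def find_mismatched_wavs(root, expected_rate=EXPECTED_SAMPLE_RATE):
    """Return (path, sample_rate) pairs for wav files under `root`
    whose sample rate differs from `expected_rate`.

    Hypothetical helper for illustration; assumes PCM wav files.
    """
    mismatched = []
    for path in sorted(Path(root).rglob("*.wav")):
        with wave.open(str(path), "rb") as wav_file:
            rate = wav_file.getframerate()
        if rate != expected_rate:
            mismatched.append((path, rate))
    return mismatched
```

Calling `find_mismatched_wavs("[data's root path]")` returns an empty list when every file already matches; any file it reports would need resampling with a tool of your choice before running `preprocess.py`.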

Train & Tensorboard

- `python trainer.py -c [config yaml file] -n [name of the run]`

  - Run `cp config/default.yaml config/config.yaml` and then edit `config.yaml`.

  - Write down the root paths of the train/validation files on the 2nd/3rd lines.

  - Each path should contain pairs of `*.wav` files with their corresponding (preprocessed) `*.mel` files.

  - The data loader parses the list of files within each path recursively.

- `tensorboard --logdir logs/`
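The pairing requirement above can be checked before a long training run. This is a minimal sketch under one stated assumption: each `*.mel` file sits next to its `*.wav` file and shares its base name (for example `a.wav` and `a.mel`). The helper name `find_unpaired_wavs` is hypothetical and not part of this repository.

```python
from pathlib import Path


def find_unpaired_wavs(root):
    """Recursively list wav files under `root` that have no sibling
    file with the same base name and a `.mel` extension.

    Assumption: mel files are stored next to their wav files.
    """
    return [
        wav_path
        for wav_path in sorted(Path(root).rglob("*.wav"))
        if not wav_path.with_suffix(".mel").is_file()
    ]
```

An empty result for both the train and validation roots suggests preprocessing covered every file; a non-empty result lists the wav files that may need `preprocess.py` to be run again.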

Pretrained model

Try with Google Colab: TODO

import torch

vocoder = torch.hub.load('seungwonpark/melgan', 'melgan')
vocoder.eval()
mel = torch.randn(1, 80, 234) # use your own mel-spectrogram here

if torch.cuda.is_available():
    vocoder = vocoder.cuda()
    mel = mel.cuda()

with torch.no_grad():
    audio = vocoder.inference(mel)
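To listen to the result, the samples need to be written to a wav file. The sketch below uses only the Python standard library and rests on assumptions worth verifying: that the vocoder output is a one-dimensional sequence of 16-bit integer samples (check `audio.dtype` and `audio.shape`) and that the sample rate is 22050 Hz, matching the training data. The helper name `write_int16_wav` is hypothetical.

```python
import array
import sys
import wave


def write_int16_wav(path, samples, sample_rate=22050):
    """Write a sequence of 16-bit integer samples as a mono PCM wav file.

    Assumptions: `samples` holds Python ints in [-32768, 32767] and the
    audio is sampled at `sample_rate` Hz.
    """
    pcm = array.array("h", samples)  # "h" = signed 16-bit integers
    if sys.byteorder == "big":
        pcm.byteswap()  # wav data is little-endian
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 2 bytes per sample
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(pcm.tobytes())
```

If the assumptions hold for `audio` from the snippet above, `write_int16_wav("out.wav", audio.cpu().tolist())` would save it. Floating-point samples would need scaling and conversion to integers first, since `array.array("h", ...)` rejects floats.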

Inference

python inference.py -p [checkpoint path] -i [input mel path]

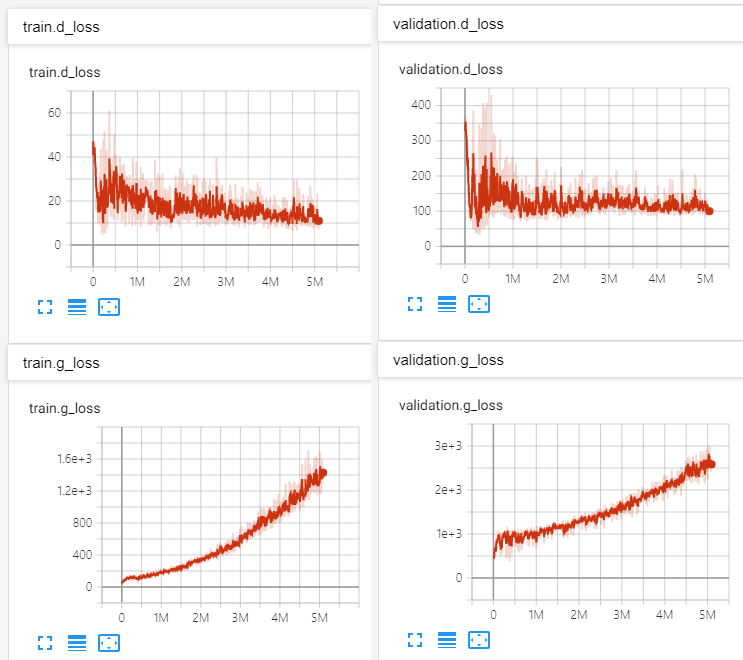

Results

See audio samples at: http://swpark.me/melgan/. The model was trained on a V100 GPU for 14 days using LJSpeech-1.1.

Implementation Authors

- Seungwon Park @ MINDsLab Inc.

- Myunchul Joe @ MINDsLab Inc.

- Rishikesh @ DeepSync Technologies Pvt Ltd.

License

BSD 3-Clause License.

- utils/stft.py by Prem Seetharaman (BSD 3-Clause License)

- datasets/mel2samp.py from https://github.com/NVIDIA/waveglow (BSD 3-Clause License)

- utils/hparams.py from https://github.com/HarryVolek/PyTorch_Speaker_Verification (No License specified)

Useful resources

- How to Train a GAN? Tips and tricks to make GANs work by Soumith Chintala

- Official MelGAN implementation by original authors

- Reproduction of MelGAN - NeurIPS 2019 Reproducibility Challenge (Ablation Track) by Yifei Zhao, Yichao Yang, and Yang Gao

  - "replacing the average pooling layer with max pooling layer and replacing reflection padding with replication padding improves the performance significantly, while combining them produces worse results"
