PowerDataHub / Terraform Aws Airflow

Licence: apache-2.0

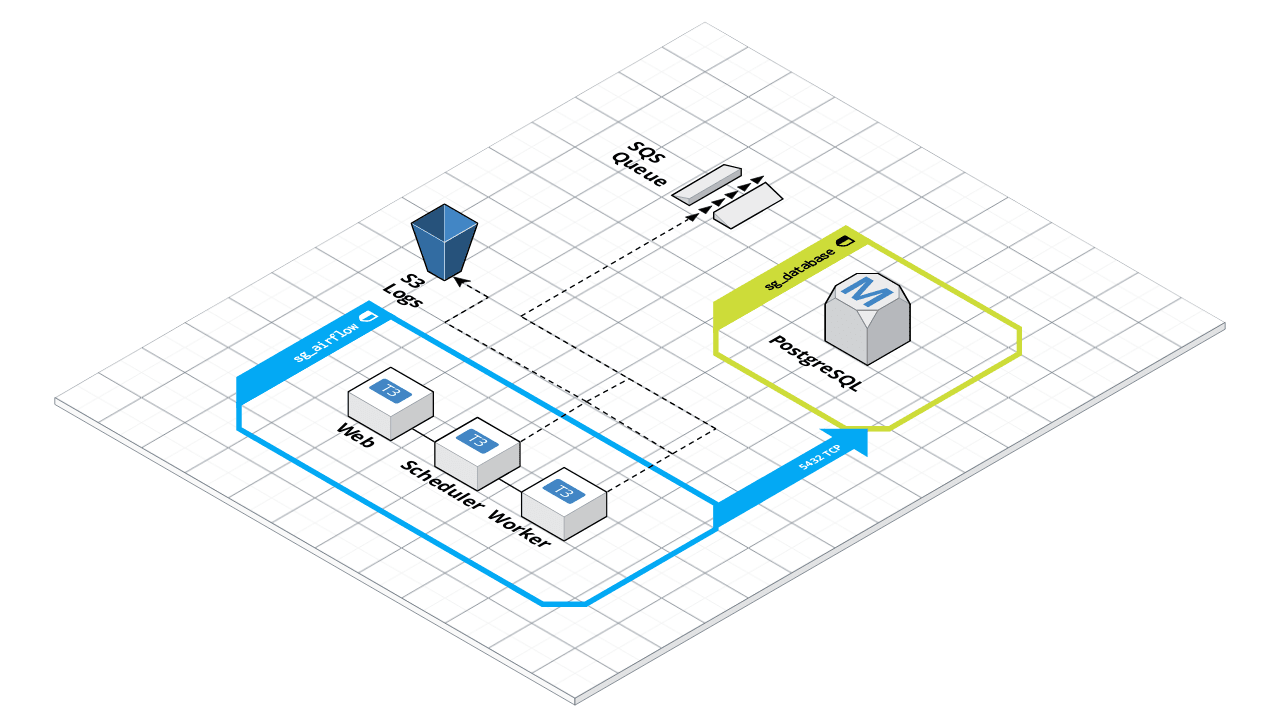

Terraform module to deploy an Apache Airflow cluster on AWS, backed by RDS PostgreSQL for metadata, S3 for logs and SQS as message broker with CeleryExecutor

Stars: ✭ 69

Projects that are alternatives of or similar to Terraform Aws Airflow

Iam Policy Json To Terraform

Small tool to convert an IAM Policy in JSON format into a Terraform aws_iam_policy_document

Stars: ✭ 282 (+308.7%)

Mutual labels: aws, hacktoberfest, terraform, hcl

Aws Ecs Airflow

Run Airflow in AWS ECS (Elastic Container Service) using Fargate tasks

Stars: ✭ 107 (+55.07%)

Mutual labels: aws, terraform, hcl, airflow

Elastic Beanstalk Terraform Setup

🎬 Playbook for setting up & deploying AWS Beanstalk Applications on Docker with 1 command

Stars: ✭ 69 (+0%)

Mutual labels: aws, terraform, hcl

Terraform Aws Github Ci

[DEPRECATED] Serverless CI for GitHub using AWS CodeBuild with PR and status support

Stars: ✭ 49 (-28.99%)

Mutual labels: aws, terraform, hcl

Terraform Aws Waf Owasp Top 10 Rules

A Terraform module to create AWS WAF Rules for OWASP Top 10 security risks protection.

Stars: ✭ 62 (-10.14%)

Mutual labels: aws, terraform, hcl

Terraform Ecs Autoscale Alb

ECS cluster with instance and service autoscaling configured and running behind an ALB with path based routing set up

Stars: ✭ 60 (-13.04%)

Mutual labels: aws, terraform, hcl

Terraform Aws Dynamodb

Terraform module that implements AWS DynamoDB with support for AutoScaling

Stars: ✭ 49 (-28.99%)

Mutual labels: aws, terraform, hcl

Terraform Aws Asg

Terraform AWS Auto Scaling Stack

Stars: ✭ 58 (-15.94%)

Mutual labels: aws, terraform, hcl

Terraform Aws Ecs Fargate

Terraform module which creates ECS Fargate resources on AWS.

Stars: ✭ 35 (-49.28%)

Mutual labels: aws, terraform, hcl

Terraform Security Scan

Run a security scan on your terraform with the very nice https://github.com/liamg/tfsec

Stars: ✭ 64 (-7.25%)

Mutual labels: aws, hacktoberfest, terraform

Terraform Aws Alb

Terraform module to provision a standard ALB for HTTP/HTTPS traffic

Stars: ✭ 53 (-23.19%)

Mutual labels: aws, terraform, hcl

Terraform Aws Rds Cloudwatch Sns Alarms

Terraform module that configures important RDS alerts using CloudWatch and sends them to an SNS topic

Stars: ✭ 56 (-18.84%)

Mutual labels: aws, terraform, hcl

Infra Personal

Terraform for setting up my personal infrastructure

Stars: ✭ 45 (-34.78%)

Mutual labels: aws, terraform, hcl

Terraform Aws Jenkins Ha Agents

A terraform module for a highly available Jenkins deployment.

Stars: ✭ 41 (-40.58%)

Mutual labels: aws, terraform, hcl

Curso Aws Com Terraform

🎦 🇧🇷 Files for the course "DevOps: AWS com Terraform Automatizando sua infraestrutura" published on Udemy. You can support me by buying the course through the link below.

Stars: ✭ 62 (-10.14%)

Mutual labels: aws, terraform, hcl

Karch

A Terraform module to create and maintain Kubernetes clusters on AWS easily, relying entirely on kops

Stars: ✭ 38 (-44.93%)

Mutual labels: aws, terraform, hcl

Airflow Toolkit

On day 1 of any Airflow project, you can spin up a local desktop Kubernetes Airflow environment and one in Google Cloud Composer with tested data pipelines (DAGs) 🖥 >> [ 🚀, 🚢 ]

Stars: ✭ 51 (-26.09%)

Mutual labels: terraform, hcl, airflow

Terraform Sqs Lambda Trigger Example

Example of how to create an AWS Lambda triggered by SQS in Terraform

Stars: ✭ 31 (-55.07%)

Mutual labels: aws, terraform, hcl

Airflow AWS Module

Terraform module to deploy an Apache Airflow cluster on AWS, backed by RDS PostgreSQL for metadata, S3 for logs and SQS as message broker with CeleryExecutor

Terraform supported versions:

| Terraform version | Tag |
|---|---|
| <= 0.11 | v0.7.x |
| >= 0.12 | >= v0.8.x |
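The pairing in the table can be made explicit by constraining both the Terraform version and the module release in configuration. The following is a minimal sketch, not taken from the module's own docs: it assumes the Terraform Registry publishes the `v0.8.x` tags as versions `0.8.x`, and the `>= 0.8.0` constraint is an illustrative choice. The variable names are hypothetical.

```hcl
# Require a Terraform release that matches the ">= 0.12" row of the table.
terraform {
  required_version = ">= 0.12"
}

# Hypothetical variables so that no secret is written into this file.
variable "db_password" {
  type = string
}

variable "fernet_key" {
  type = string
}

module "airflow-cluster" {
  source = "powerdatahub/airflow/aws"

  # Assumption: Registry versions mirror the git tags without the "v" prefix.
  version = ">= 0.8.0"

  cluster_name = "my-airflow"
  db_password  = var.db_password
  fernet_key   = var.fernet_key
}
```

On Terraform 0.11 or earlier, the table suggests a 0.7.x release instead; the HCL syntax above (for example `type = string`) is written for 0.12 and later.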

Usage

You can use this module from the Terraform Registry:

    module "airflow-cluster" {
      # REQUIRED
      source        = "powerdatahub/airflow/aws"
      key_name      = "airflow-key"
      cluster_name  = "my-airflow"
      cluster_stage = "prod" # Default is 'dev'
      db_password   = "your-rds-master-password"
      fernet_key    = "your-fernet-key" # see https://airflow.readthedocs.io/en/stable/howto/secure-connections.html

      # OPTIONALS
      vpc_id              = "some-vpc-id"                     # Use default if not provided
      custom_requirements = "path/to/custom/requirements.txt" # See examples/custom_requirements for more details
      custom_env          = "path/to/custom/env"              # See examples/custom_env for more details
      ingress_cidr_blocks = ["0.0.0.0/0"]                     # List of IPv4 CIDR ranges to use on all ingress rules

      ingress_with_cidr_blocks = [ # List of computed ingress rules to create where 'cidr_blocks' is used
        {
          description = "List of computed ingress rules for Airflow webserver"
          from_port   = 8080
          to_port     = 8080
          protocol    = "tcp"
          cidr_blocks = "0.0.0.0/0"
        },
        {
          description = "List of computed ingress rules for Airflow flower"
          from_port   = 5555
          to_port     = 5555
          protocol    = "tcp"
          cidr_blocks = "0.0.0.0/0"
        }
      ]

      tags = {
        FirstKey  = "first-value" # Additional tags to use on resources
        SecondKey = "second-value"
      }

      load_example_dags  = false
      load_default_conns = false

      rbac           = true                  # See examples/rbac for more details
      admin_name     = "John"                # Only if rbac is true
      admin_lastname = "Doe"                 # Only if rbac is true
      admin_email    = "[email protected]"   # Only if rbac is true
      admin_username = "admin"               # Only if rbac is true
      admin_password = "supersecretpassword" # Only if rbac is true
    }
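The usage example opens the webserver and Flower ports to `0.0.0.0/0`. Where that is too permissive, the same `ingress_with_cidr_blocks` input could be narrowed to a known network. This is a minimal sketch under stated assumptions: the `office_cidr` variable is hypothetical, its default is a reserved documentation range (RFC 5737), and the rule objects carry only the five fields shown in the usage example. Whether the module's provisioner needs further ingress (for example SSH from the machine running Terraform) is not covered here.

```hcl
# Hypothetical variable: the only network allowed to reach the Airflow UI.
variable "office_cidr" {
  type    = string
  default = "203.0.113.0/24" # documentation range, replace with a real CIDR
}

module "airflow-cluster" {
  source = "powerdatahub/airflow/aws"

  cluster_name = "my-airflow"
  db_password  = "your-rds-master-password"
  fernet_key   = "your-fernet-key"

  # Same rule shape as the usage example, restricted to a single CIDR.
  ingress_with_cidr_blocks = [
    {
      description = "Airflow webserver from the office network only"
      from_port   = 8080
      to_port     = 8080
      protocol    = "tcp"
      cidr_blocks = var.office_cidr
    },
  ]
}
```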

Debug and logs

The Airflow service runs under systemd, so logs are available through journalctl.

    $ journalctl -u airflow -n 50

Todo

- [x] Run Airflow as a systemd service
- [x] Provide a way to pass a custom requirements.txt file at the provisioning step
- [ ] Provide a way to pass a custom packages.txt file at the provisioning step
- [x] RBAC
- [ ] Support for Google OAuth
- [ ] Flower
- [ ] Secure Flower install
- [x] Provide a way to inject environment variables into Airflow
- [ ] Split services into multiple files
- [ ] Auto Scaling for workers
- [ ] Use Spot instances for workers
- [ ] Maybe use AWS Fargate to reduce costs

Special thanks to villasv/aws-airflow-stack, an incredible project, for the inspiration.

Requirements

| Name | Version |
|---|---|
| terraform | >= 0.12 |

Providers

| Name | Version |
|---|---|
| aws | n/a |
| template | n/a |

Inputs

| Name | Description | Type | Default | Required |
|---|---|---|---|---|
| admin_email | Admin email. Used only if RBAC is enabled; the user is created on the first run only. | string | "[email protected]" | no |
| admin_lastname | Admin last name. Used only if RBAC is enabled; the user is created on the first run only. | string | "Doe" | no |
| admin_name | Admin name. Used only if RBAC is enabled; the user is created on the first run only. | string | "John" | no |
| admin_password | Admin password. Used only if RBAC is enabled. | string | false | no |
| admin_username | Admin username used to authenticate. Used only if RBAC is enabled; the user is created on the first run only. | string | "admin" | no |
| ami | AMI for the EC2 instances. Default is Ubuntu Server 18.04 LTS (HVM), SSD Volume Type. | string | "ami-0ac80df6eff0e70b5" | no |
| aws_region | AWS Region | string | "us-east-1" | no |
| azs | Run the EC2 Instances in these Availability Zones | map(string) | { | no |
| cluster_name | The name of the Airflow cluster (e.g. airflow-xyz). This variable is used to namespace all resources created by this module. | string | n/a | yes |
| cluster_stage | The stage of the Airflow cluster (e.g. prod). | string | "dev" | no |
| custom_env | Path to a file with custom Airflow environment variables. | string | null | no |
| custom_requirements | Path to custom requirements.txt. | string | null | no |
| db_allocated_storage | Database disk size. | string | 20 | no |
| db_dbname | PostgreSQL database name. | string | "airflow" | no |
| db_instance_type | Instance type for PostgreSQL database | string | "db.t2.micro" | no |
| db_password | PostgreSQL password. | string | n/a | yes |
| db_subnet_group_name | DB subnet group. If set, the database is created in that subnet group; otherwise it is created in the default VPC. | string | "" | no |
| db_username | PostgreSQL username. | string | "airflow" | no |
| fernet_key | Key for encrypting data in the database - see Airflow docs. | string | n/a | yes |
| ingress_cidr_blocks | List of IPv4 CIDR ranges to use on all ingress rules | list(string) | [ | no |
| ingress_with_cidr_blocks | List of computed ingress rules to create where 'cidr_blocks' is used | list(object({ | [ | no |
| instance_subnet_id | Subnet ID used for the EC2 instances running Airflow. If not defined, the first element of the VPC's subnet list is used. | string | "" | no |
| key_name | AWS KeyPair name. | string | null | no |
| load_default_conns | Load the default connections initialized by Airflow. Most consider these unnecessary, which is why the default is to not load them. | bool | false | no |
| load_example_dags | Load the example DAGs distributed with Airflow. Useful if deploying a stack for demonstrating a few topologies, operators and scheduling strategies. | bool | false | no |
| private_key | Enter the content of the SSH Private Key to run provisioner. | string | null | no |
| private_key_path | Enter the path to the SSH Private Key to run provisioner. | string | "~/.ssh/id_rsa" | no |
| public_key | Enter the content of the SSH Public Key to run provisioner. | string | null | no |
| public_key_path | Enter the path to the SSH Public Key to add to AWS. | string | "~/.ssh/id_rsa.pub" | no |
| rbac | Enable support for Role-Based Access Control (RBAC). | string | false | no |
| root_volume_delete_on_termination | Whether the volume should be destroyed on instance termination. | bool | true | no |
| root_volume_ebs_optimized | If true, the launched EC2 instance will be EBS-optimized. | bool | false | no |
| root_volume_size | The size, in GB, of the root EBS volume. | string | 35 | no |
| root_volume_type | The type of volume. Must be one of: standard, gp2, or io1. | string | "gp2" | no |
| s3_bucket_name | S3 bucket in which to save Airflow logs. | string | "" | no |
| scheduler_instance_type | Instance type for the Airflow Scheduler. | string | "t3.micro" | no |
| spot_price | The maximum hourly price to pay for EC2 Spot Instances. | string | "" | no |
| tags | Additional tags passed to the terraform-terraform-labels module. | map(string) | {} | no |
| vpc_id | The ID of the VPC in which the nodes will be deployed. Uses default VPC if not supplied. | string | null | no |
| webserver_instance_type | Instance type for the Airflow Webserver. | string | "t3.micro" | no |
| webserver_port | The port the Airflow webserver listens on. Ports below 1024 can be opened only with root privileges, and the airflow process does not run as root. | string | "8080" | no |
| worker_instance_count | Number of worker instances to create. | string | 1 | no |
| worker_instance_type | Instance type for the Celery Worker. | string | "t3.small" | no |
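As an illustration of how these inputs combine, the sketch below keeps the two required secrets out of the configuration file and overrides a few sizing defaults. It is an assumption-laden example rather than a recommended setup: the variable names and the chosen instance types, counts and sizes are hypothetical, and only input names from the table are used.

```hcl
# Hypothetical variables; values can be supplied through the standard
# TF_VAR_db_password / TF_VAR_fernet_key environment variables or a
# .tfvars file kept out of version control.
variable "db_password" {
  description = "RDS master password"
  type        = string
}

variable "fernet_key" {
  description = "Airflow Fernet key"
  type        = string
}

module "airflow-cluster" {
  source = "powerdatahub/airflow/aws"

  # Required inputs (marked "yes" in the table).
  cluster_name = "my-airflow"
  db_password  = var.db_password
  fernet_key   = var.fernet_key

  # Optional overrides; the values are illustrative only.
  cluster_stage         = "prod"
  worker_instance_count = 2
  worker_instance_type  = "t3.medium"
  db_instance_type      = "db.t3.small"
  root_volume_size      = 50
}
```

Inputs that are left out, such as `vpc_id` or `webserver_port`, fall back to the defaults listed in the table.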

Outputs

| Name | Description |
|---|---|
| database_endpoint | Endpoint to connect to RDS metadata DB |
| database_username | Username to connect to RDS metadata DB |
| this_cluster_security_group_id | The ID of the cluster security group |
| this_database_security_group_id | The ID of the database security group |
| webserver_admin_url | URL for the Airflow Webserver Admin |
| webserver_public_ip | Public IP address for the Airflow Webserver instance |
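Module outputs are read from the calling configuration as `module.<name>.<output>`. The fragment below is a small sketch that re-exports two of them from a root module; it assumes a module block named `airflow-cluster` as in the Usage section, and the root output names `airflow_url` and `airflow_db_endpoint` are hypothetical.

```hcl
# Assumes: module "airflow-cluster" { source = "powerdatahub/airflow/aws" ... }
# is declared elsewhere in the same root module.

# Surface the webserver URL after `terraform apply`.
output "airflow_url" {
  value = module.airflow-cluster.webserver_admin_url
}

# Expose the metadata database endpoint, for example for external tooling.
output "airflow_db_endpoint" {
  value = module.airflow-cluster.database_endpoint
}
```

After an apply, `terraform output airflow_url` would print the value of the first output.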
