🔏 X.509 Certificate Exporter

A Prometheus exporter for certificates focusing on expiration monitoring, written in Go. Designed to monitor Kubernetes clusters from inside, it can also be used as a standalone exporter.

Get notified before they expire:

- PEM encoded files, by path or scanning directories

- Kubeconfigs with embedded certificates or file references

- TLS Secrets from a Kubernetes cluster

Installation

🏃 TL;DR

The Helm chart is the most straightforward way to get a fully-featured exporter running on your cluster. The chart is also highly customizable should you need it. See the chart documentation to learn more.

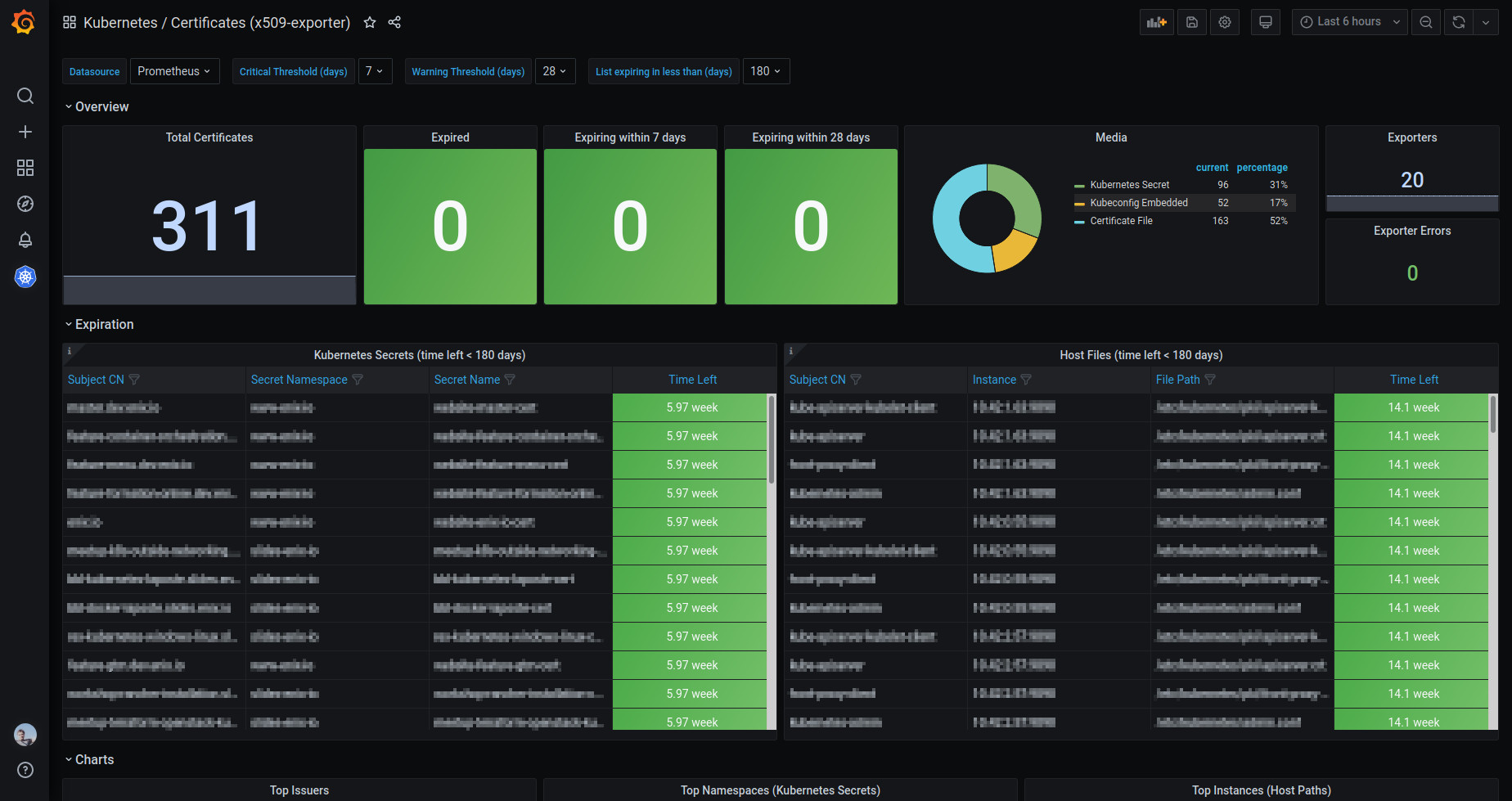

The provided Grafana Dashboard can also be used to display the exporter's metrics on your Grafana instance.

Using Docker

A Docker image is available at enix/x509-certificate-exporter.

Using the pre-built binaries

Every release comes with pre-built binaries for many supported platforms.

Using the source

The project's entry point is ./cmd/x509-certificate-exporter.

You can build and run it like any other Go program:

go build ./cmd/x509-certificate-exporter

Usage

The following metrics are available:

- x509_cert_not_before

- x509_cert_not_after

- x509_cert_expired

- x509_read_errors

Advanced usage

For advanced configuration, see the program's --help:

Usage: x509-certificate-exporter [-hv] [--debug] [-d value] [--exclude-label value] [--exclude-namespace value] [--expose-relative-metrics] [-f value] [--include-label value] [--include-namespace value] [-k value] [-p value] [-s value] [--trim-path-components value] [--watch-kube-secrets] [parameters ...]
     --debug       enable debug mode
 -d, --watch-dir=value
                   watch one or more directory which contains x509 certificate
                   files (not recursive)
     --exclude-label=value
                   removes the kube secrets with the given label (or label
                   value if specified) from the watch list (applied after
                   --include-label)
     --exclude-namespace=value
                   removes the given kube namespace from the watch list
                   (applied after --include-namespace)
     --expose-relative-metrics
                   expose additional metrics with relative durations instead
                   of absolute timestamps
 -f, --watch-file=value
                   watch one or more x509 certificate file
 -h, --help        show this help message and exit
     --include-label=value
                   add the kube secrets with the given label (or label value if
                   specified) to the watch list (when used, all secrets are
                   excluded by default)
     --include-namespace=value
                   add the given kube namespace to the watch list (when used,
                   all namespaces are excluded by default)
 -k, --watch-kubeconf=value
                   watch one or more Kubernetes client configuration (kind
                   Config) which contains embedded x509 certificates or PEM
                   file paths
 -p, --port=value  prometheus exporter listening port [9793]
 -s, --secret-type=value
                   one or more kubernetes secret type & key to watch (e.g.
                   "kubernetes.io/tls:tls.crt")
     --trim-path-components=value
                   remove <n> leading component(s) from path(s) in label(s)
 -v, --version     show version info and exit
     --watch-kube-secrets
                   scrape kubernetes.io/tls secrets and monitor them
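The help text states that include filters are applied first and exclude filters afterwards. The sketch below shows one way to read that ordering for namespaces. It is an interpretation of the help text, not the exporter's implementation, and `namespaceWatched` and `contains` are hypothetical names.

```go
package main

import "fmt"

// contains reports whether list holds item.
func contains(list []string, item string) bool {
	for _, v := range list {
		if v == item {
			return true
		}
	}
	return false
}

// namespaceWatched (hypothetical helper) applies the include list first:
// when it is non-empty, every namespace not listed is dropped. The exclude
// list is applied afterwards and always wins.
func namespaceWatched(ns string, include, exclude []string) bool {
	if len(include) > 0 && !contains(include, ns) {
		return false
	}
	return !contains(exclude, ns)
}

func main() {
	include := []string{"prod", "staging"}
	exclude := []string{"staging"}
	for _, ns := range []string{"prod", "staging", "dev"} {
		fmt.Printf("%s watched: %t\n", ns, namespaceWatched(ns, include, exclude))
	}
}
```

With these lists only prod is watched: staging is included and then excluded, and dev is never included.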

FAQ

Why are you using the not after timestamp rather than a remaining number of seconds?

For two reasons.

First, Prometheus tends to store a series more efficiently when its value stays identical from one check to the next.

Second, it is better to compute the remaining time in a Prometheus query, because some latency (seconds) can exist between the exporter's check and the moment your alert or query runs.

Here is an example:

x509_cert_not_after - time()

When metrics are collected by tools such as Datadog that do not have timestamp functions, the exporter can be run with the --expose-relative-metrics flag to add the following optional metrics:

- x509_cert_valid_since_seconds

- x509_cert_expires_in_seconds

How to ensure it keeps working over time?

Changes in paths or deleted files may silently break the ability to watch critical certificates.

Because it is never convenient to alert on disappearing metrics, the exporter publishes in x509_read_errors how many paths could not be read. It also counts failed Kubernetes API responses, but does not count deleted secrets.

A basic alert would be:

x509_read_errors > 0