yanmin-wu / Eao Slam

Licence: other

[IROS 2020] EAO-SLAM: Monocular Semi-Dense Object SLAM Based on Ensemble Data Association

Stars: ✭ 95

Projects that are alternatives of or similar to Eao Slam

Visma

Visual-Inertial-Semantic-MApping Dataset and tools

Stars: ✭ 54 (-43.16%)

Mutual labels: slam, mapping, semantic

GA SLAM

🚀 SLAM for autonomous planetary rovers with global localization

Stars: ✭ 40 (-57.89%)

Mutual labels: mapping, slam, pose-estimation

Visual slam related research

Tracking research on visual (semantic) SLAM

Stars: ✭ 708 (+645.26%)

Mutual labels: slam, mapping, semantic

Deep Learning Localization Mapping

A collection of deep learning based localization models

Stars: ✭ 300 (+215.79%)

Mutual labels: slam, mapping

StrayVisualizer

Visualize Data From Stray Scanner https://keke.dev/blog/2021/03/10/Stray-Scanner.html

Stars: ✭ 30 (-68.42%)

Mutual labels: mapping, slam

Comma2k19

A driving dataset for the development and validation of fused pose estimators and mapping algorithms

Stars: ✭ 391 (+311.58%)

Mutual labels: slam, mapping

Lio Mapping

Implementation of Tightly Coupled 3D Lidar Inertial Odometry and Mapping (LIO-mapping)

Stars: ✭ 520 (+447.37%)

Mutual labels: slam, mapping

OPVO

Sample code of BMVC 2017 paper: "Visual Odometry with Drift-Free Rotation Estimation Using Indoor Scene Regularities"

Stars: ✭ 40 (-57.89%)

Mutual labels: slam, pose-estimation

Semantic suma

SuMa++: Efficient LiDAR-based Semantic SLAM (Chen et al IROS 2019)

Stars: ✭ 431 (+353.68%)

Mutual labels: slam, semantic

Cartographer

Cartographer is a system that provides real-time simultaneous localization and mapping (SLAM) in 2D and 3D across multiple platforms and sensor configurations.

Stars: ✭ 5,754 (+5956.84%)

Mutual labels: slam, mapping

UrbanLoco

UrbanLoco: A Full Sensor Suite Dataset for Mapping and Localization in Urban Scenes

Stars: ✭ 147 (+54.74%)

Mutual labels: mapping, slam

Slam-Dunk-Android

Android implementation of "Fusion of inertial and visual measurements for rgb-d slam on mobile devices"

Stars: ✭ 25 (-73.68%)

Mutual labels: mapping, slam

Sc Lego Loam

LiDAR SLAM: Scan Context + LeGO-LOAM

Stars: ✭ 332 (+249.47%)

Mutual labels: slam, mapping

direct lidar odometry

Direct LiDAR Odometry: Fast Localization with Dense Point Clouds

Stars: ✭ 202 (+112.63%)

Mutual labels: mapping, slam

Kimera Vio

Visual Inertial Odometry with SLAM capabilities and 3D Mesh generation.

Stars: ✭ 741 (+680%)

Mutual labels: slam, mapping

Loam velodyne

Laser Odometry and Mapping (Loam) is a realtime method for state estimation and mapping using a 3D lidar.

Stars: ✭ 1,135 (+1094.74%)

Mutual labels: slam, mapping

LVIO-SAM

A Multi-sensor Fusion Odometry via Smoothing and Mapping.

Stars: ✭ 143 (+50.53%)

Mutual labels: mapping, slam

EAO-SLAM

Related Paper:

- Wu Y, Zhang Y, Zhu D, et al. EAO-SLAM: Monocular Semi-Dense Object SLAM Based on Ensemble Data Association[C]//2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020: 4966-4973. [PDF] [YouTube] [bilibili] [Project page].

- Extended Work

- If you use the code in your academic work, please cite the above paper.

1. Prerequisites

- Prerequisites are the same as semidense-lines. If you run into compilation problems, please refer to semidense-lines and ORB_SLAM2.

- The code is tested on Ubuntu 16.04 with OpenCV 3.2.0/3.3.1 and Eigen 3.2.1.

2. Building

chmod +x build.sh

./build.sh

3. Examples

- We provide a demo that uses the TUM rgbd_dataset_freiburg3_long_office_household sequence; please download the dataset beforehand. The offline object bounding boxes are in the data/yolo_txts folder.
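Before launching the demo, it can help to confirm that the downloaded sequence directory looks as expected. The following is a minimal sketch, not part of the repository: the function name `check_tum_sequence` is hypothetical, and it assumes the standard TUM RGB-D layout (an `rgb.txt` index file next to an `rgb/` image folder). It does not verify anything else the demo might need.

```shell
# Pre-flight check for a TUM RGB-D sequence directory.
# Assumption: the standard TUM RGB-D layout, i.e. an rgb.txt index file
# and an rgb/ image folder directly inside the sequence directory.
# check_tum_sequence is a hypothetical helper, not shipped with EAO-SLAM.
check_tum_sequence() {
    seq_dir="$1"
    if [ -z "$seq_dir" ] || [ ! -d "$seq_dir" ]; then
        echo "not a directory: $seq_dir" >&2
        return 1
    fi
    for entry in rgb.txt rgb; do
        if [ ! -e "$seq_dir/$entry" ]; then
            echo "missing $entry in $seq_dir" >&2
            return 1
        fi
    done
    echo "ok: $seq_dir"
}
```

Calling `check_tum_sequence [path of tum fr3_long_office]` prints `ok: ...` when both entries are present and returns a non-zero status otherwise.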

- Object size and orientation estimation.

- use iForest and line alignment:

./Examples/Monocular/mono_tum LineAndiForest [path of tum fr3_long_office]

- only use iForest:

./Examples/Monocular/mono_tum iForest [path of tum fr3_long_office]

- without iForest and line alignment:

./Examples/Monocular/mono_tum None [path of tum fr3_long_office]

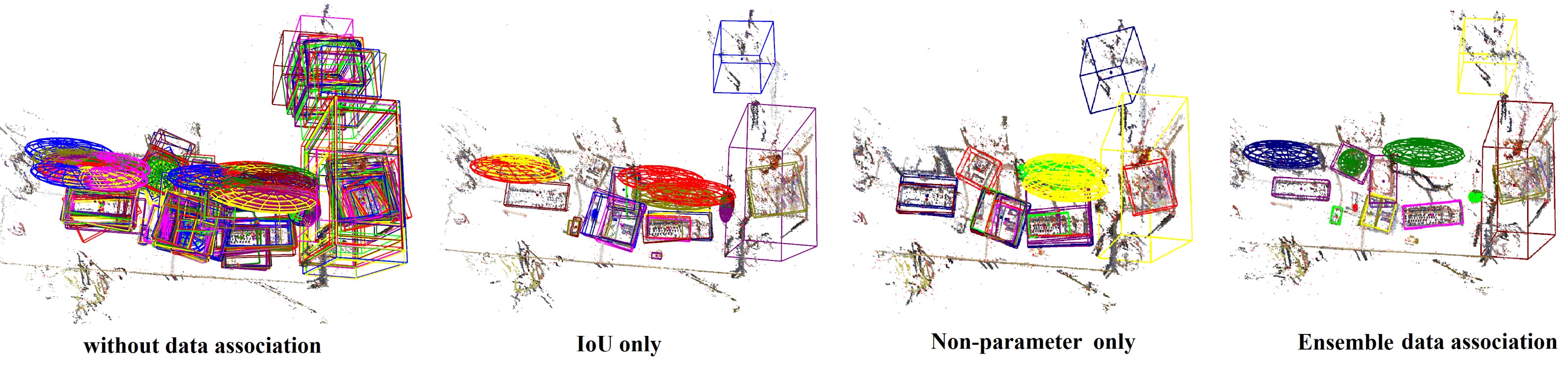

- Data association

- without data association:

./Examples/Monocular/mono_tum NA [path of tum fr3_long_office]

- data association by IoU only:

./Examples/Monocular/mono_tum IoU [path of tum fr3_long_office]

- data association by the non-parametric test only:

./Examples/Monocular/mono_tum NP [path of tum fr3_long_office]

- data association by our ensemble method:

./Examples/Monocular/mono_tum EAO [path of tum fr3_long_office]
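For readers unfamiliar with the IoU criterion named in the data association modes, the sketch below computes the generic intersection-over-union of two axis-aligned boxes. It illustrates the standard formula only and is not the association code used in this repository. The function name `iou` and the corner format (left, top, right, bottom) are assumptions made for the example.

```shell
# iou AX1 AY1 AX2 AY2 BX1 BY1 BX2 BY2
# Generic intersection-over-union of two axis-aligned boxes.
# Assumption: each box is given by its (left, top, right, bottom) corners.
# Illustrative only; this is not the repository's association code.
iou() {
    awk -v ax1="$1" -v ay1="$2" -v ax2="$3" -v ay2="$4" \
        -v bx1="$5" -v by1="$6" -v bx2="$7" -v by2="$8" '
    BEGIN {
        # Force numeric interpretation of every coordinate.
        ax1 += 0; ay1 += 0; ax2 += 0; ay2 += 0
        bx1 += 0; by1 += 0; bx2 += 0; by2 += 0
        # Corners of the overlap rectangle.
        ix1 = (ax1 > bx1) ? ax1 : bx1
        iy1 = (ay1 > by1) ? ay1 : by1
        ix2 = (ax2 < bx2) ? ax2 : bx2
        iy2 = (ay2 < by2) ? ay2 : by2
        # Clamp to zero when the boxes do not overlap.
        iw = ix2 - ix1; if (iw < 0) iw = 0
        ih = iy2 - iy1; if (ih < 0) ih = 0
        inter = iw * ih
        uni = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
        if (uni <= 0) printf "0.0000\n"
        else printf "%.4f\n", inter / uni
    }'
}
```

Two 10x10 boxes offset by 5 in each direction share an area of 25 out of a union of 175, so `iou 0 0 10 10 5 5 15 15` prints `0.1429`.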

- The full demo on TUM fr3_long_office sequence:

./Examples/Monocular/mono_tum Full [path of tum fr3_long_office]

- If you want to see the semi-dense map, you may have to wait a while after the sequence ends.

- Because YOLO (which was not trained on this scenario) produces many false detections at the start of the sequence, we adopted a stricter elimination mechanism, which deletes many objects at the start.
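The mode names used in the commands of this section (LineAndiForest, iForest, None, NA, IoU, NP, EAO, Full) are easy to mistype. A small wrapper can reject unknown names before the binary starts. This is a sketch under assumptions: `run_demo`, `EAO_MODES`, and the `DRY_RUN` variable are hypothetical names introduced here, and the wrapper is expected to be called from the repository root.

```shell
# Modes named in this README; anything else is rejected before launching.
EAO_MODES="LineAndiForest iForest None NA IoU NP EAO Full"

# run_demo MODE SEQUENCE_DIR
# Hypothetical wrapper around ./Examples/Monocular/mono_tum.
# With DRY_RUN=1 the command is printed instead of executed.
run_demo() {
    mode="$1"
    seq_dir="$2"
    # Reject empty names and names containing spaces outright.
    case "$mode" in
        ""|*" "*) echo "unknown mode: $mode" >&2; return 2 ;;
    esac
    # Accept only names that appear in the list above.
    case " $EAO_MODES " in
        *" $mode "*) ;;
        *) echo "unknown mode: $mode" >&2; return 2 ;;
    esac
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "./Examples/Monocular/mono_tum $mode $seq_dir"
    else
        ./Examples/Monocular/mono_tum "$mode" "$seq_dir"
    fi
}
```

For instance, setting `DRY_RUN=1` and looping `for m in NA IoU NP EAO; do run_demo "$m" [path of tum fr3_long_office]; done` prints the four data association commands without running them.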

4. Videos

- More experimental results can be found on our project page.

- Extended work: project page

5. Note

- This is an incomplete version of the system described in our paper. To use it in your own work or with other datasets, prepare offline semantic detection/segmentation results or switch to online mode. You may also need to adjust the data association strategy and the abnormal object elimination mechanism (we found that YOLO misdetections have a large impact on the results).

6. Acknowledgement

Thanks for the great work: ORB-SLAM2, Cube SLAM, and Semidense-Lines.

- Mur-Artal R, Tardós J D. Orb-slam2: An open-source slam system for monocular, stereo, and rgb-d cameras[J]. IEEE Transactions on Robotics, 2017, 33(5): 1255-1262. PDF, Code

- Yang S, Scherer S. Cubeslam: Monocular 3-d object slam[J]. IEEE Transactions on Robotics, 2019, 35(4): 925-938. PDF, Code

- He S, Qin X, Zhang Z, et al. Incremental 3d line segment extraction from semi-dense slam[C]//2018 24th International Conference on Pattern Recognition (ICPR). IEEE, 2018: 1658-1663. PDF, Code

7. Contact

- Yanmin Wu, Email: [email protected]

- Corresponding author: Yunzhou Zhang *, Email: [email protected]
